Get started with Lakehouse tiered storage

Note

This feature is currently in private preview. If you want to try it out or have any questions, submit a ticket to the support team.

Introduction

This guide walks you through enabling Lakehouse tiered storage on StreamNative Cloud. You will learn how to offload topic data to a Lakehouse, read it back as a stream through the Pulsar client API, and read it in batch with Lakehouse query tools.

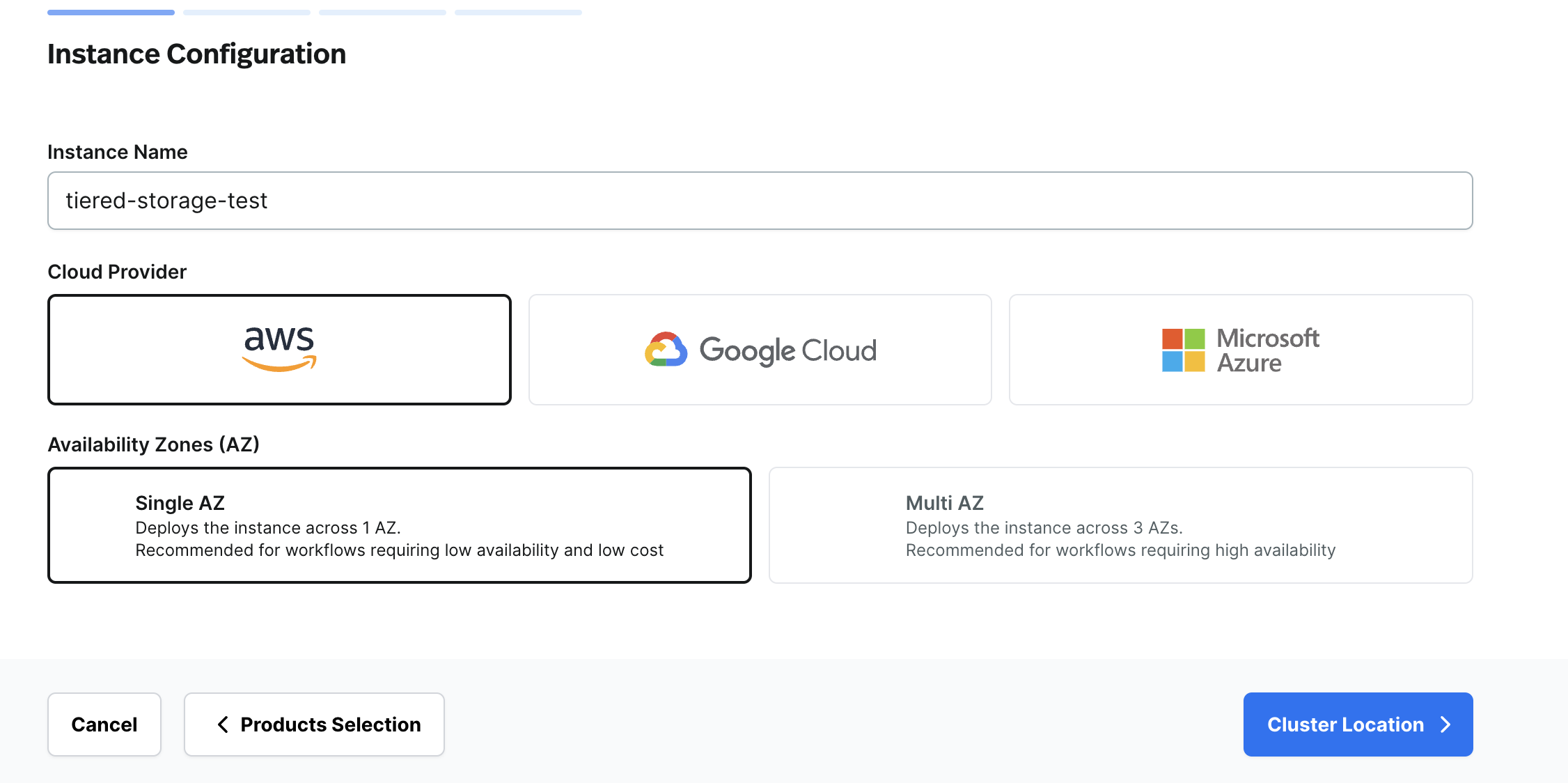

Step 1: Enable Lakehouse Tiered Storage on StreamNative Cloud

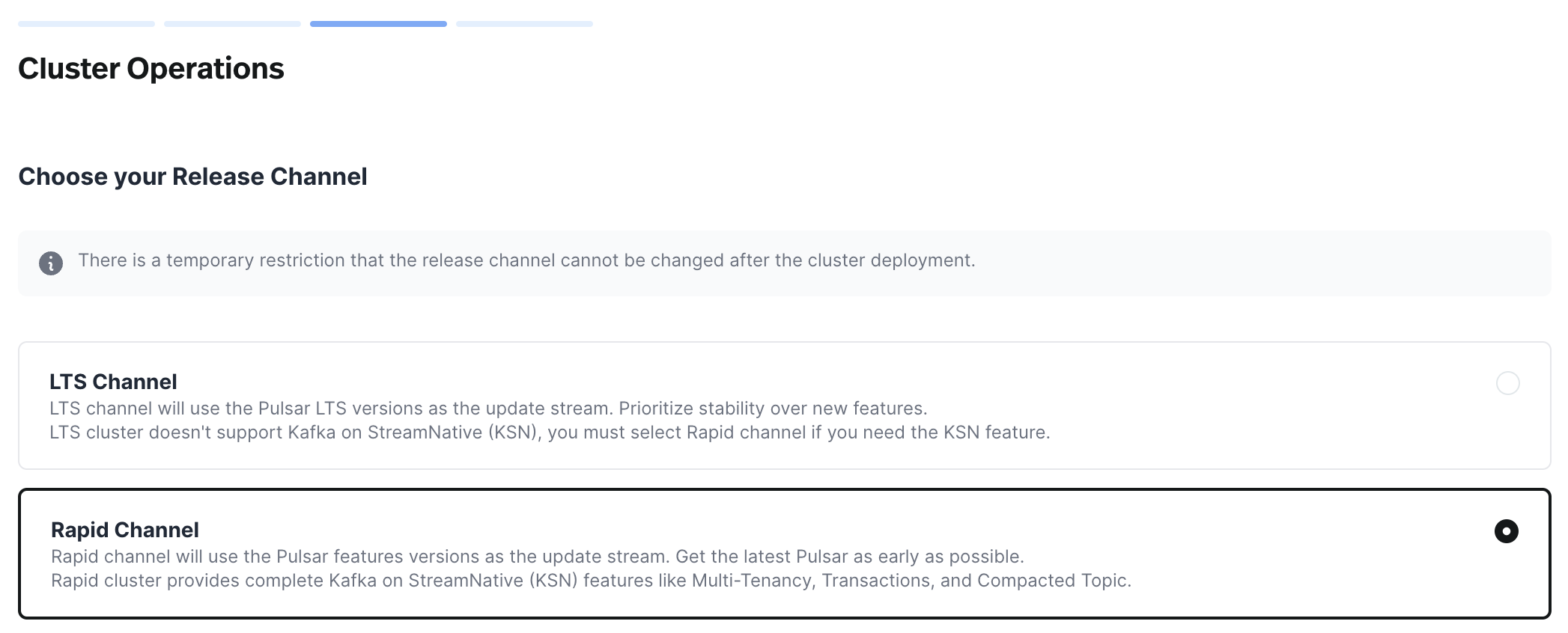

Lakehouse tiered storage is a new feature that must be enabled manually and is only available in the Rapid release channel. To enable it:

- Contact the StreamNative support team to enable the Lakehouse tiered storage feature for your account.

- When creating a new Pulsar cluster, select the Rapid release channel.

- For existing Pulsar clusters, contact the StreamNative support team to switch to the Rapid release channel.

Lakehouse tiered storage currently supports the open Delta Lake table format: by default, data is offloaded to a Delta Lake table. Depending on your cloud provider, the data is stored in AWS S3 or Google Cloud Storage (GCS).

After you complete the steps above, Lakehouse tiered storage is available on your Pulsar cluster. You still need to activate it at the namespace level by setting the offload threshold.

For example, to enable offloading for the `public/default` namespace:

```shell
bin/pulsar-admin namespaces set-offload-threshold public/default --size 0
```

To disable offloading, set both the size and time thresholds to -1:

```shell
bin/pulsar-admin namespaces set-offload-threshold public/default --size -1 --time -1
```
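If you manage namespaces from scripts, you can wrap the two commands above in a small helper. This is a minimal sketch, not a StreamNative tool. The function names and the `bin/pulsar-admin` path are assumptions, and the helper only shells out to the same `pulsar-admin namespaces set-offload-threshold` invocations shown above.

```python
import subprocess
from typing import List


def offload_threshold_args(namespace: str, enable: bool,
                           admin: str = "bin/pulsar-admin") -> List[str]:
    """Build the pulsar-admin argv for enabling or disabling offload (hypothetical helper)."""
    base = [admin, "namespaces", "set-offload-threshold", namespace]
    if enable:
        # Size threshold 0, as in the enable example; in upstream Pulsar,
        # 0 means "offload as soon as possible".
        return base + ["--size", "0"]
    # -1 for both the size and time thresholds disables offloading.
    return base + ["--size", "-1", "--time", "-1"]


def set_offload_threshold(namespace: str, enable: bool,
                          admin: str = "bin/pulsar-admin",
                          dry_run: bool = False) -> List[str]:
    """Run the pulsar-admin command, or only return it when dry_run=True."""
    args = offload_threshold_args(namespace, enable, admin)
    if not dry_run:
        # check=True raises CalledProcessError if pulsar-admin exits non-zero.
        subprocess.run(args, check=True)
    return args
```

For example, `set_offload_threshold("public/default", enable=True)` runs the enable command. Passing `dry_run=True` lets you review the command before it touches the cluster.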

Step 2: Offload Data to Lakehouse

Once Lakehouse tiered storage is enabled for your namespace or cluster, data is offloaded automatically as you produce messages to your topics. Currently, only Avro schemas and Pulsar primitive schemas are supported.

For testing, use `pulsar-perf` to produce messages to a topic:

```shell
bin/pulsar-perf produce -r 1000 -u pulsar://localhost:6650 persistent://public/default/test-topic
```

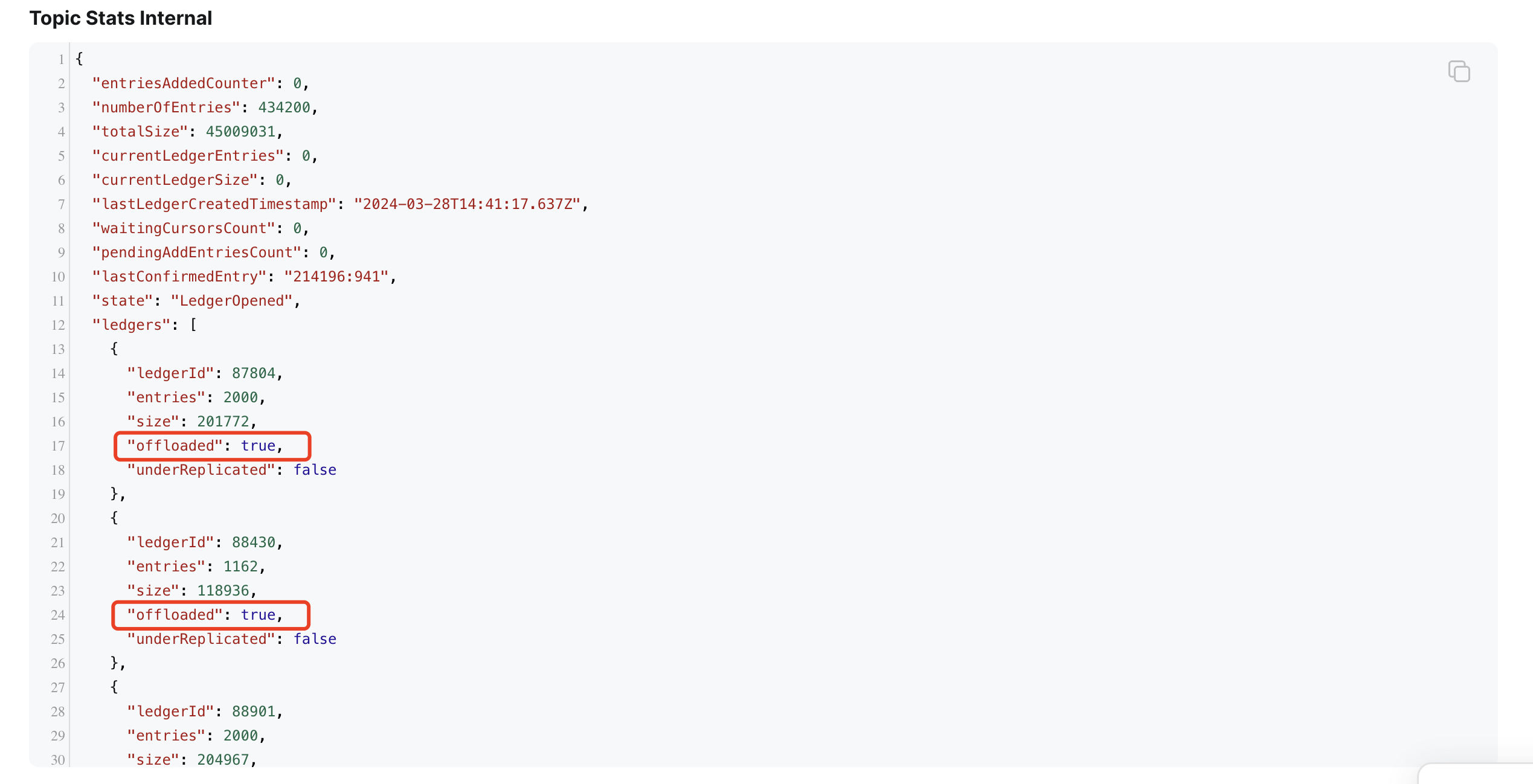
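Outside of `pulsar-perf`, you can produce from application code with the Pulsar Python client (`pulsar-client` package). The sketch below paces sends to a target rate, similar in spirit to `-r 1000`, and shows a producer with an Avro schema, since Avro is one of the supported schema types. `produce_at_rate`, `example_avro_producer`, the `SensorReading` record, and the `avro-topic` name are illustrative assumptions. The client calls (`pulsar.Client`, `create_producer`, `AvroSchema`) come from the Python client.

```python
import time
from typing import Any, Iterable


def produce_at_rate(producer: Any, messages: Iterable[Any], rate_per_sec: float) -> int:
    """Send each message with producer.send(), pacing to roughly rate_per_sec."""
    if rate_per_sec <= 0:
        raise ValueError("rate_per_sec must be positive")
    interval = 1.0 / rate_per_sec
    next_send = time.monotonic()
    sent = 0
    for message in messages:
        # Sleep until this message's scheduled send time, if it is still ahead.
        delay = next_send - time.monotonic()
        if delay > 0:
            time.sleep(delay)
        producer.send(message)
        sent += 1
        next_send += interval
    return sent


def example_avro_producer(service_url: str = "pulsar://localhost:6650",
                          topic: str = "persistent://public/default/avro-topic",
                          count: int = 100) -> int:
    """Hypothetical example: produce Avro records that can be offloaded to Delta Lake."""
    import pulsar  # pip install pulsar-client
    from pulsar.schema import AvroSchema, Integer, Record, String

    class SensorReading(Record):  # illustrative record type
        sensor_id = String()
        value = Integer()

    client = pulsar.Client(service_url)
    try:
        producer = client.create_producer(topic, schema=AvroSchema(SensorReading))
        readings = (SensorReading(sensor_id="sensor-1", value=i) for i in range(count))
        return produce_at_rate(producer, readings, rate_per_sec=1000)
    finally:
        # Closing the client also closes the producer.
        client.close()
```

The example uses a separate topic because `pulsar-perf` writes schemaless bytes to `test-topic`, and mixing schemas on one topic may be rejected by schema compatibility checks.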

Check whether data has been offloaded by examining the topic's internal stats with `pulsar-admin` or in the StreamNative Cloud console:

```shell
bin/pulsar-admin topics stats-internal persistent://public/default/test-topic
```
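To check offload progress from a script, you can parse the JSON that `stats-internal` prints. This sketch assumes the Lakehouse offloader reports progress through the standard per-ledger `offloaded` flag in the `ledgers` array, as Pulsar's built-in offloaders do. The helper names are hypothetical.

```python
import json
import subprocess
from typing import Any, Dict, List


def fetch_internal_stats(topic: str, admin: str = "bin/pulsar-admin") -> Dict[str, Any]:
    """Run `pulsar-admin topics stats-internal <topic>` and parse its JSON output."""
    result = subprocess.run([admin, "topics", "stats-internal", topic],
                            capture_output=True, text=True, check=True)
    return json.loads(result.stdout)


def offloaded_ledger_ids(stats: Dict[str, Any]) -> List[int]:
    """Return the IDs of ledgers whose `offloaded` flag is set."""
    return [ledger["ledgerId"] for ledger in stats.get("ledgers", [])
            if ledger.get("offloaded")]


def offload_summary(stats: Dict[str, Any]) -> Dict[str, int]:
    """Count total vs. offloaded ledgers and the bytes held in offloaded ledgers."""
    ledgers = stats.get("ledgers", [])
    offloaded = [ledger for ledger in ledgers if ledger.get("offloaded")]
    return {
        "ledgers": len(ledgers),
        "offloaded": len(offloaded),
        "offloaded_bytes": sum(ledger.get("size", 0) for ledger in offloaded),
    }
```

For example, `offload_summary(fetch_internal_stats("persistent://public/default/test-topic"))` summarizes the test topic. If the Lakehouse offloader tracks progress differently, the offload cursor under `cursors` in the same output is the other place to look.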

Step 3: Read Data from Lakehouse

Once data is offloaded to Lakehouse, you can read it with the Pulsar reader/consumer API or with your Lakehouse product's API.

Streaming Read from Lakehouse

Use the Pulsar reader or consumer API to read data from Lakehouse. Create a reader or consumer with the Pulsar client as you normally would; offloaded data is read through the same API.

For testing, use `pulsar-perf` to consume messages from the earliest position:

```shell
bin/pulsar-perf consume -ss test_sub -sp Earliest -u pulsar://localhost:6650 persistent://public/default/test-topic
```
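A programmatic equivalent with the Pulsar Python client is a reader that starts at the earliest message and drains what is available. This is a sketch. `read_available` and `example_read_from_earliest` are hypothetical helpers, while `create_reader`, `MessageId.earliest`, `has_message_available`, and `read_next` are Python client calls. The reader is passed in so the draining loop can be exercised without a broker.

```python
from typing import Any, List, Optional


def read_available(reader: Any, limit: Optional[int] = None) -> List[bytes]:
    """Read payloads until the reader has nothing more available or limit is reached."""
    payloads: List[bytes] = []
    while limit is None or len(payloads) < limit:
        if not reader.has_message_available():
            break
        payloads.append(reader.read_next().data())
    return payloads


def example_read_from_earliest(service_url: str = "pulsar://localhost:6650",
                               topic: str = "persistent://public/default/test-topic") -> List[bytes]:
    """Hypothetical example: replay a topic from its earliest message."""
    import pulsar  # pip install pulsar-client

    client = pulsar.Client(service_url)
    try:
        # Start at the earliest message. Per this guide, offloaded data is
        # read through the same reader API as data still held by the cluster.
        reader = client.create_reader(topic, pulsar.MessageId.earliest)
        try:
            return read_available(reader)
        finally:
            reader.close()
    finally:
        client.close()
```

A reader does not create a durable subscription, which suits one-off replays. Use a consumer (`client.subscribe(...)`) when you need acknowledgments and a persistent position.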

Batch Read from Lakehouse

Hosted Cluster

Coming soon.

BYOC Cluster

The S3 or GCS bucket is owned by you, and the offloaded data is stored in it. You can use Spark SQL, Amazon Athena, Trino, or other tools that support Delta Lake to read the Delta table data from the bucket.
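In Python, one option is the `deltalake` package (delta-rs), which opens a Delta table directly from object storage. The bucket name and table path are placeholders, because this guide does not document where in your bucket the offloaded tables live. `delta_table_uri` and `load_delta_table` are hypothetical helpers, and credentials are assumed to come from the environment or from `storage_options`.

```python
from typing import Any, Dict, Optional

# URI schemes understood by delta-rs: S3 on AWS, GCS on GCP.
_SCHEMES = {"aws": "s3", "gcp": "gs"}


def delta_table_uri(bucket: str, table_path: str, provider: str = "aws") -> str:
    """Build an object-store URI for a Delta table (the path layout is a placeholder)."""
    if provider not in _SCHEMES:
        raise ValueError(f"unsupported provider: {provider!r}")
    return f"{_SCHEMES[provider]}://{bucket.strip('/')}/{table_path.strip('/')}"


def load_delta_table(table_uri: str,
                     storage_options: Optional[Dict[str, str]] = None) -> Any:
    """Load the latest version of a Delta table as a PyArrow table."""
    from deltalake import DeltaTable  # pip install deltalake

    table = DeltaTable(table_uri, storage_options=storage_options)
    return table.to_pyarrow_table()
```

For example, `load_delta_table(delta_table_uri("my-bucket", "path/to/table")).num_rows` counts the offloaded rows. For large tables, a distributed engine such as Spark or Trino is the better fit.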

Disable Lakehouse Tiered Storage

To disable Lakehouse tiered storage, set the offload threshold to -1 with `pulsar-admin`:

```shell
bin/pulsar-admin namespaces set-offload-threshold public/default --size -1 --time -1
```

After you disable Lakehouse tiered storage, data is no longer offloaded. Do not delete the offload configuration or the Lakehouse tables, or you may lose data.

Limitations

Framework limitations:

- Schema evolution does not support deleting fields.

- Supported schema types vary by offloader:

  - Delta offloader: Avro and Pulsar primitive schemas

  - HDFS offloader: all schema types

- Different offload drivers cannot be configured for different namespaces or topics.
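Before enabling offload on a topic, you can check its schema type against the Delta offloader's supported set. This is a sketch. `delta_offload_supported` is hypothetical, the type names follow Pulsar's `SchemaType` enum, and the helper assumes that every Pulsar primitive type is covered and that a topic with no registered schema carries raw bytes. Confirm exact coverage with StreamNative support.

```python
from typing import Optional

# Pulsar primitive schema types, named as in Pulsar's SchemaType enum.
PRIMITIVE_SCHEMA_TYPES = frozenset({
    "BYTES", "STRING", "BOOLEAN", "INT8", "INT16", "INT32", "INT64",
    "FLOAT", "DOUBLE", "DATE", "TIME", "TIMESTAMP", "INSTANT",
    "LOCAL_DATE", "LOCAL_TIME", "LOCAL_DATE_TIME",
})


def delta_offload_supported(schema_type: Optional[str]) -> bool:
    """Return True if the Delta offloader is expected to handle this schema type."""
    if schema_type is None:
        # Assumption: a topic with no registered schema carries raw bytes.
        return True
    normalized = schema_type.strip().upper()
    return normalized == "AVRO" or normalized in PRIMITIVE_SCHEMA_TYPES
```

For example, `delta_offload_supported("JSON")` returns `False`, so a topic using a JSON schema would need an Avro schema before its data can be offloaded to Delta Lake.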

Delta-specific limitations:

- Avoid using Spark for small-file compaction, because it interferes with Delta streaming reads. Compaction is supported separately.

Notice

In Lakehouse tiered storage, a RawReader retrieves messages from Pulsar topics and writes them to the Lakehouse. The offload cursor marks the progress of this offloading operation, and advancing it prematurely can lead to data loss.

Message Time-to-Live (TTL) expires messages by advancing cursors, so TTL settings need care here. When configuring TTL at the namespace or topic level, follow one of these two options:

- Do not configure TTL at the namespace or topic level:

  - No TTL constraint is imposed, so data is never expired automatically based on its age.

- Configure TTL at the namespace or topic level:

  - Make sure the TTL value is greater than the retention period set for the topic or namespace.

Aligning TTL with retention in this way lets you manage the data lifecycle in Lakehouse tiered storage without unintended data loss.
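A quick pre-flight check can catch a TTL that violates the second option. This is a sketch with a hypothetical `ttl_is_safe` helper. It assumes Pulsar's units (message TTL in seconds, retention time in minutes, with `-1` meaning infinite retention) and compares only the time component of the retention policy, not the size limit.

```python
from typing import Optional


def ttl_is_safe(ttl_seconds: Optional[int], retention_minutes: int) -> bool:
    """Check a TTL setting against the retention time, following the two options above.

    ttl_seconds: message TTL in seconds, or None if no TTL is configured.
    retention_minutes: retention time in minutes; -1 means infinite retention.
    """
    if ttl_seconds is None:
        return True  # Option 1: no TTL at the namespace or topic level.
    if retention_minutes < 0:
        return False  # No finite TTL can be greater than infinite retention.
    # Option 2: the TTL must be strictly greater than the retention time.
    return ttl_seconds > retention_minutes * 60
```

For example, with a 60-minute retention period, a TTL of 7200 seconds passes. A TTL of 3600 seconds fails because it is equal to the retention period, not greater than it.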

Demo

Watch the demo video to see data being offloaded to and read from Lakehouse.