This guide describes how to prepare a Databricks Unity Catalog for use with StreamNative Ursa as a Delta Lake catalog on Microsoft Azure.

Prerequisites

- An Azure subscription with permissions to create storage accounts and Access Connectors

- A Databricks workspace on Azure

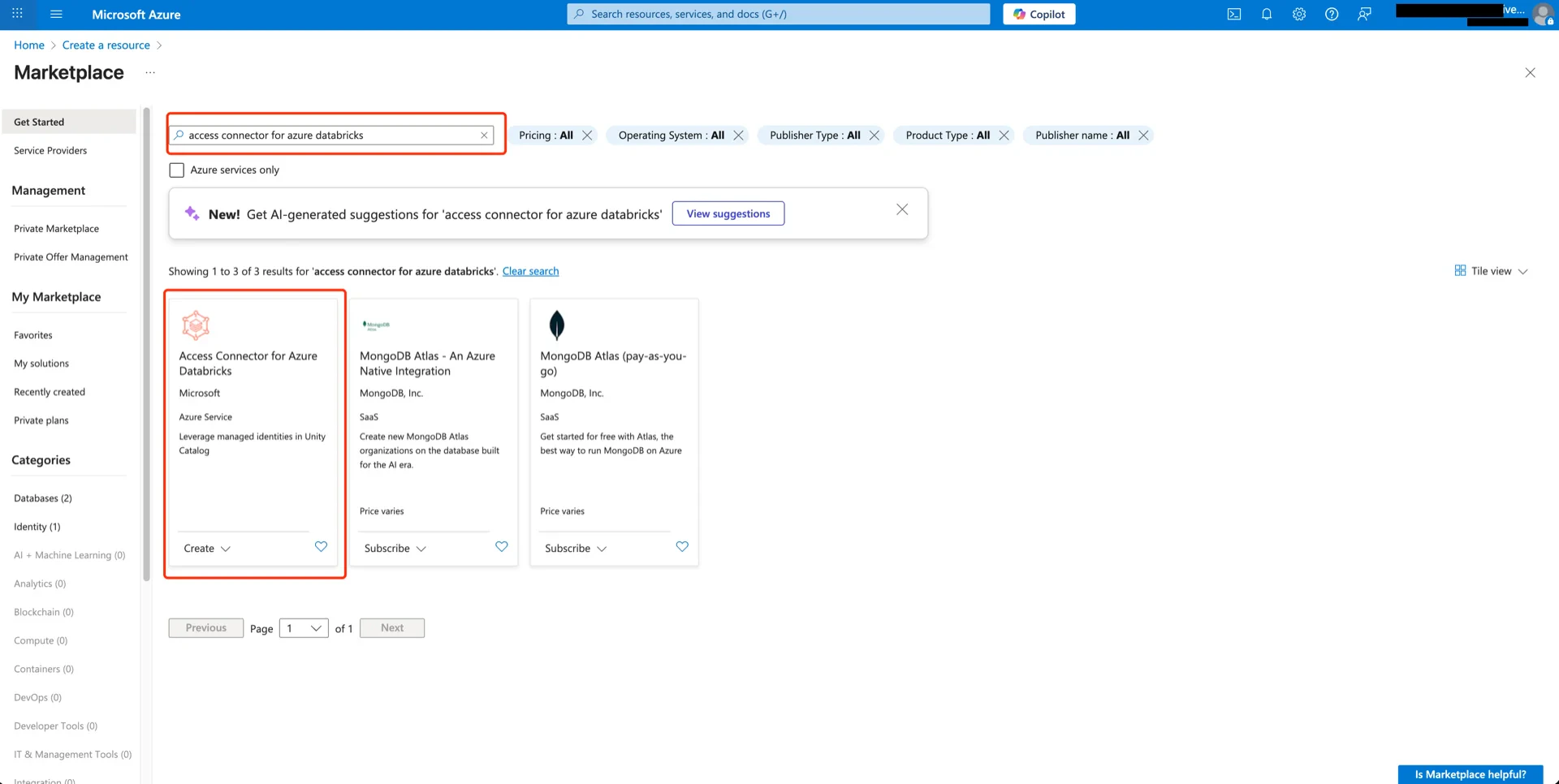

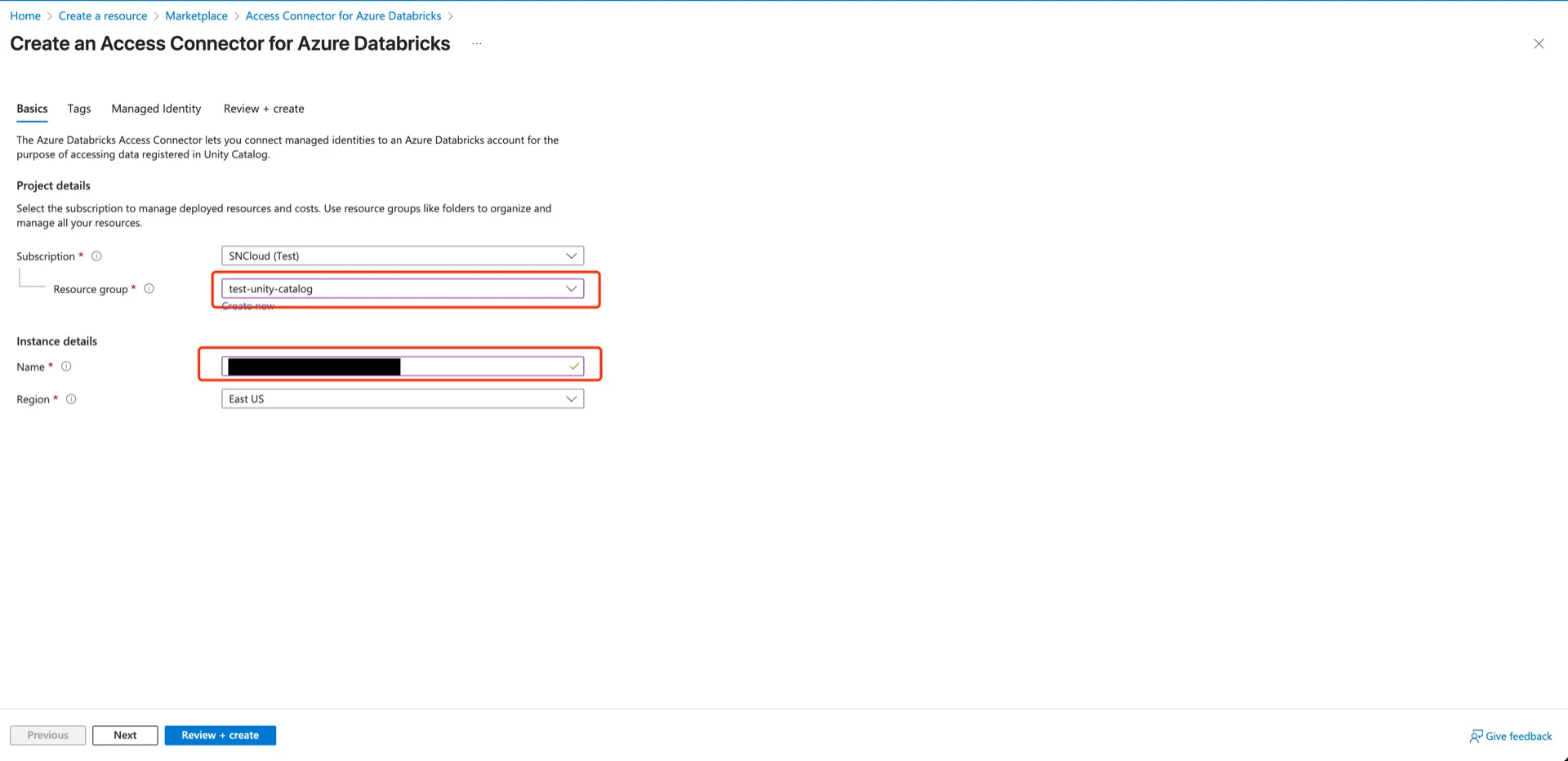

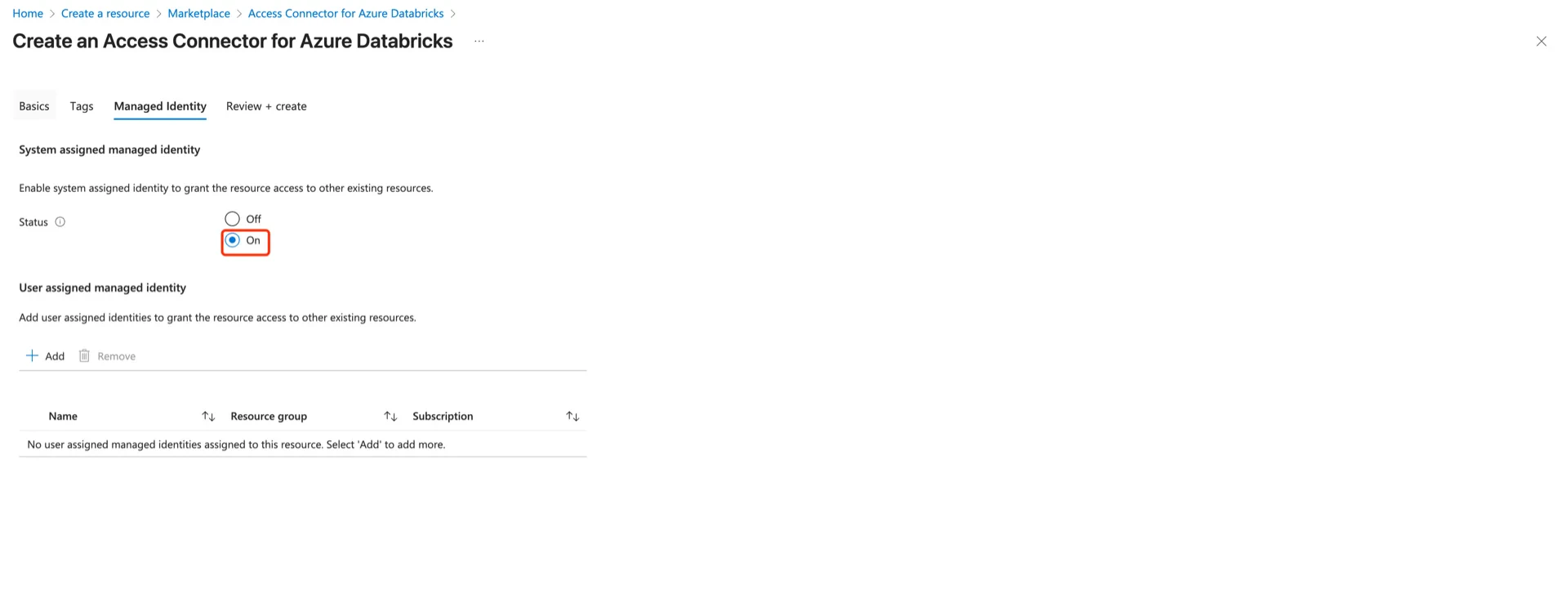

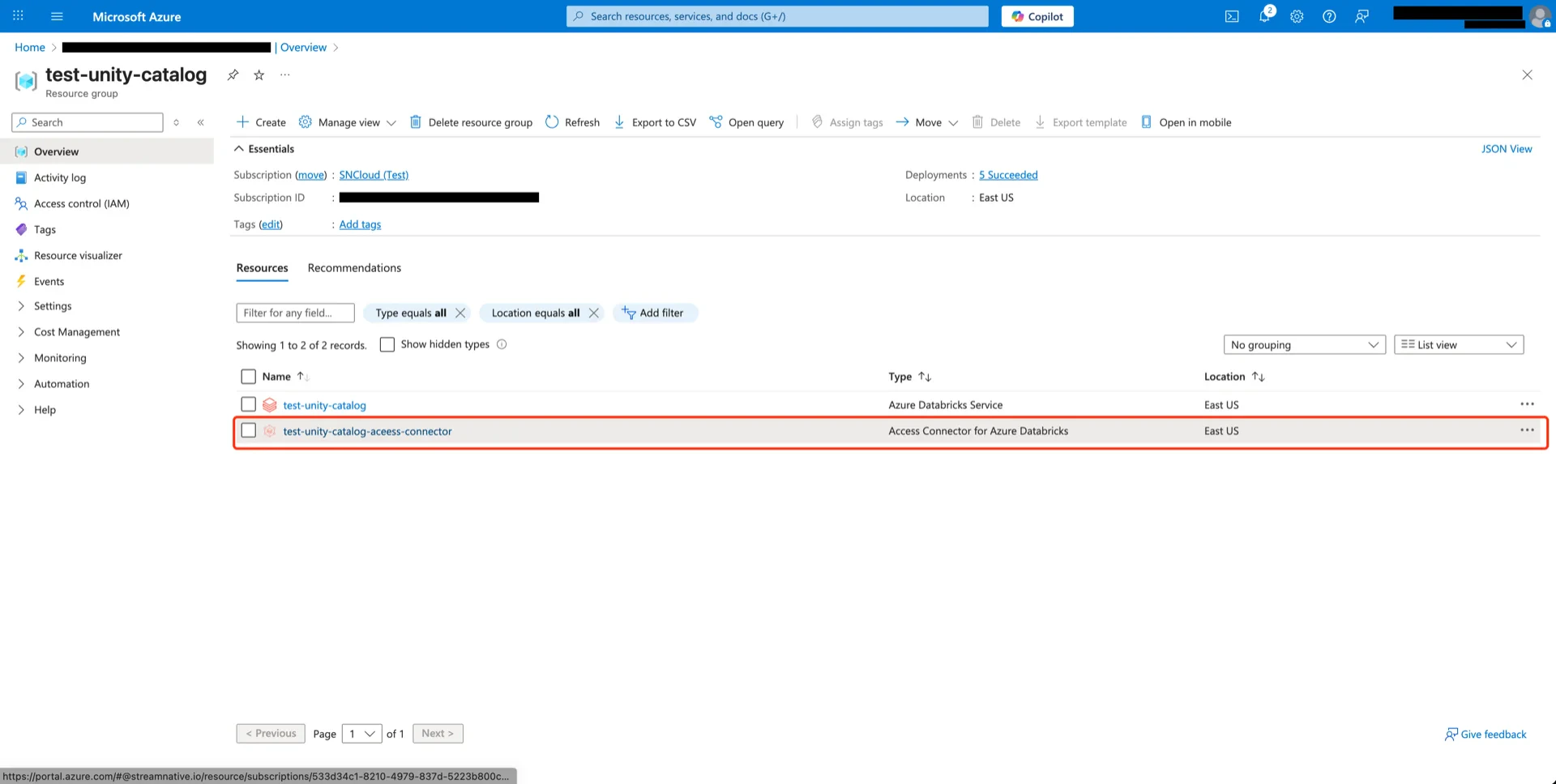

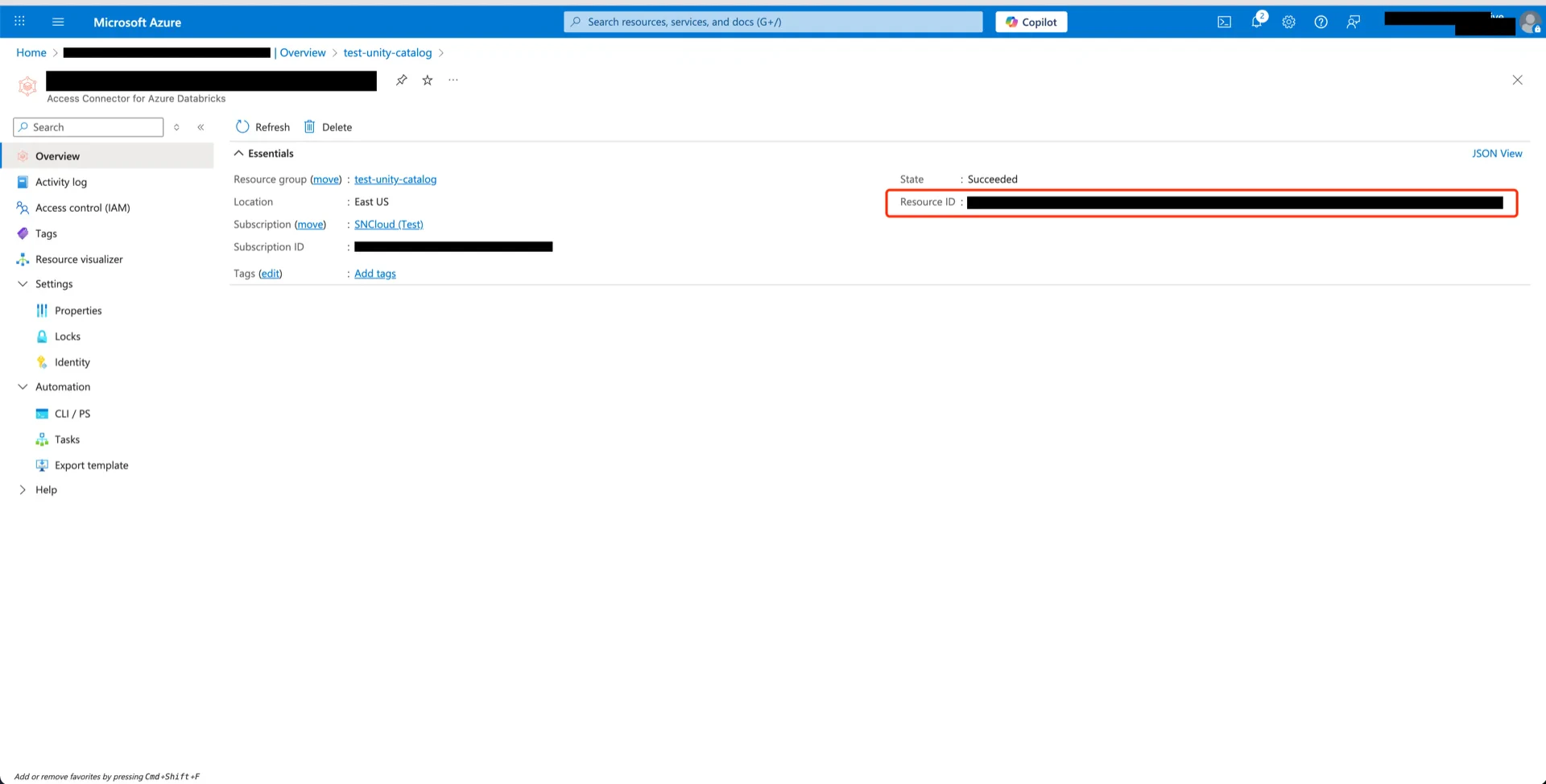

1. Create an Access Connector for Azure Databricks

In the Azure Marketplace, search for Access Connector for Azure Databricks and click Create.

Enter a name for the connector (for example, unity-catalog-access-connector), and click Next.

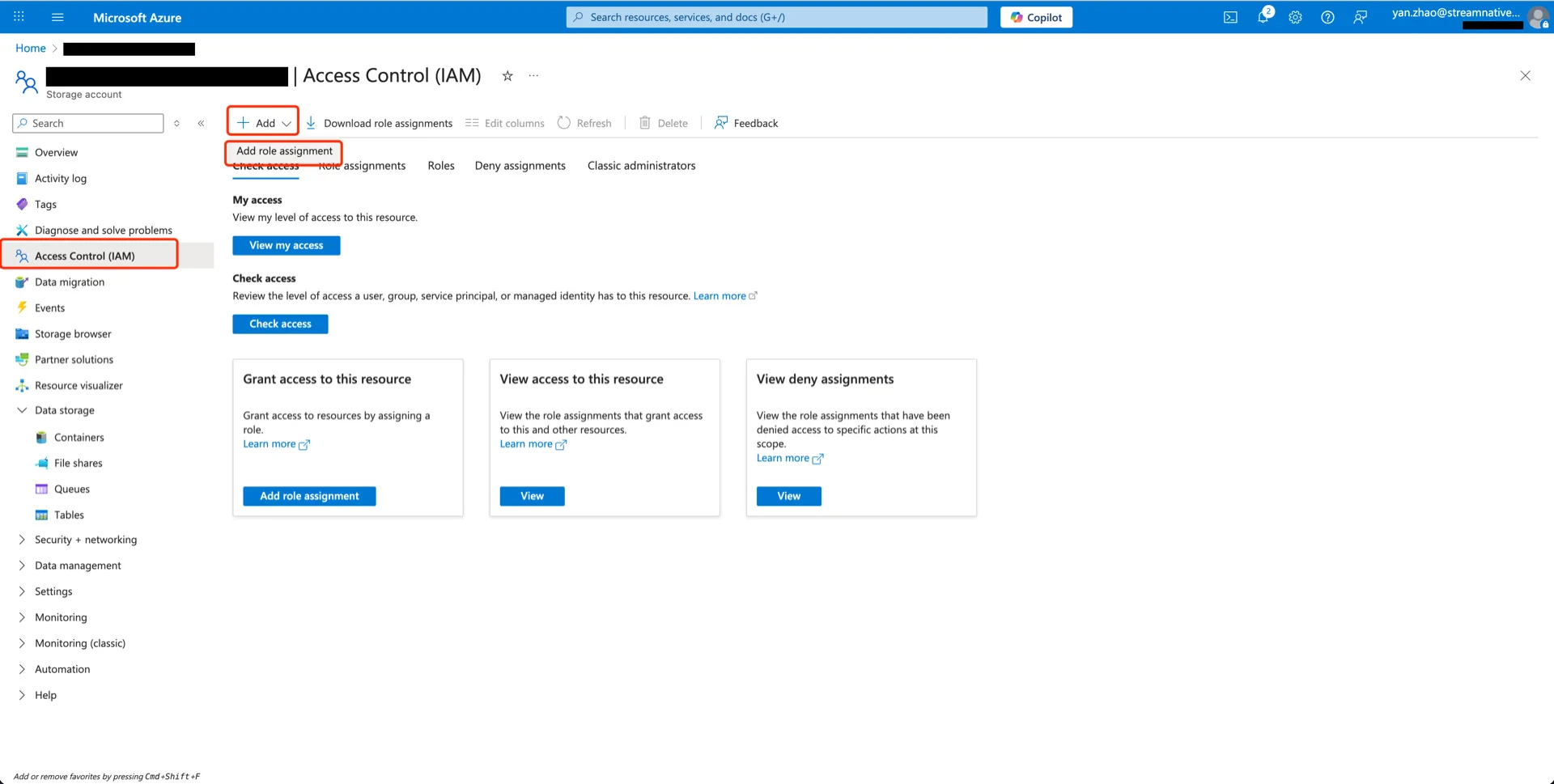

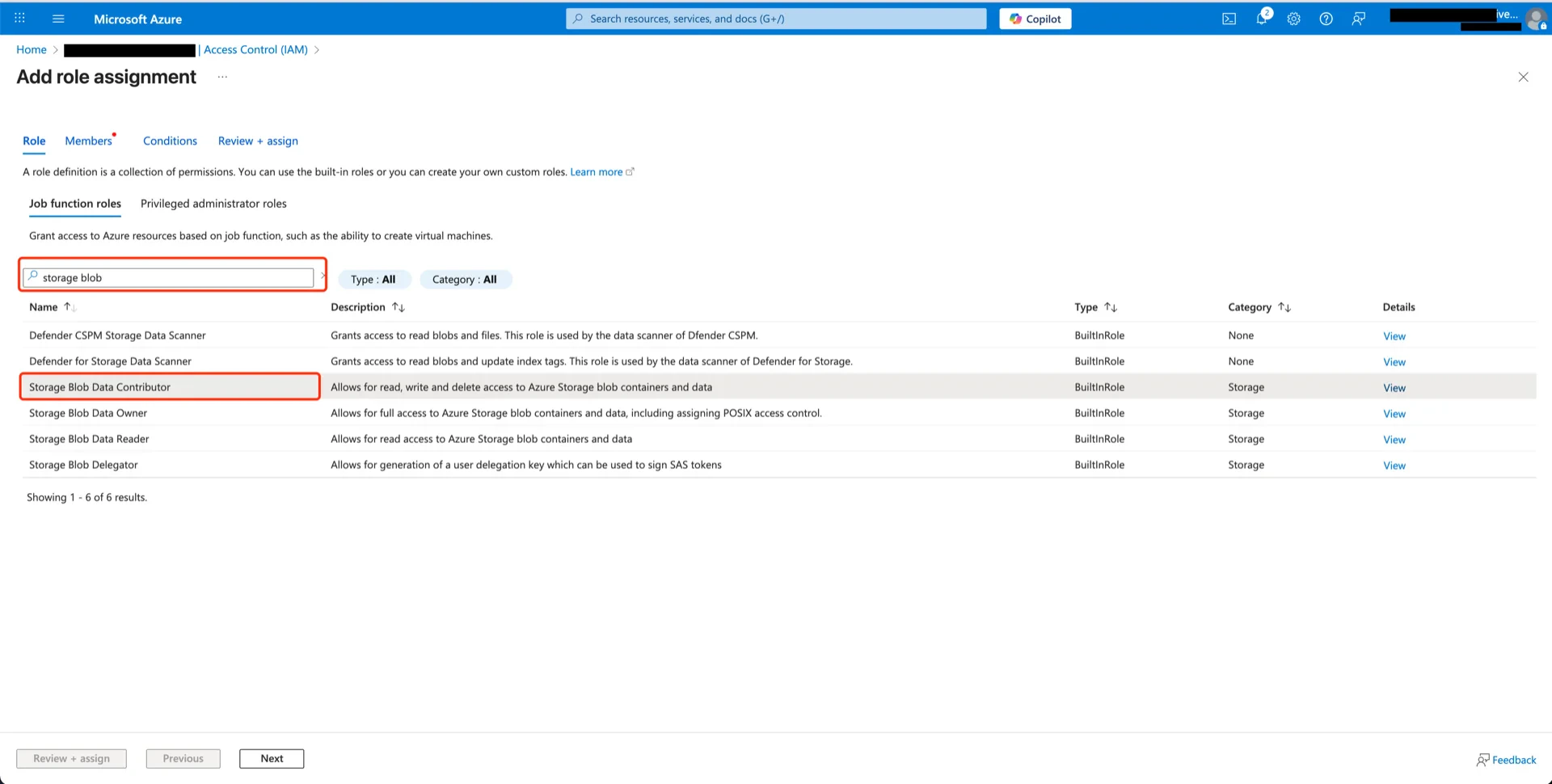

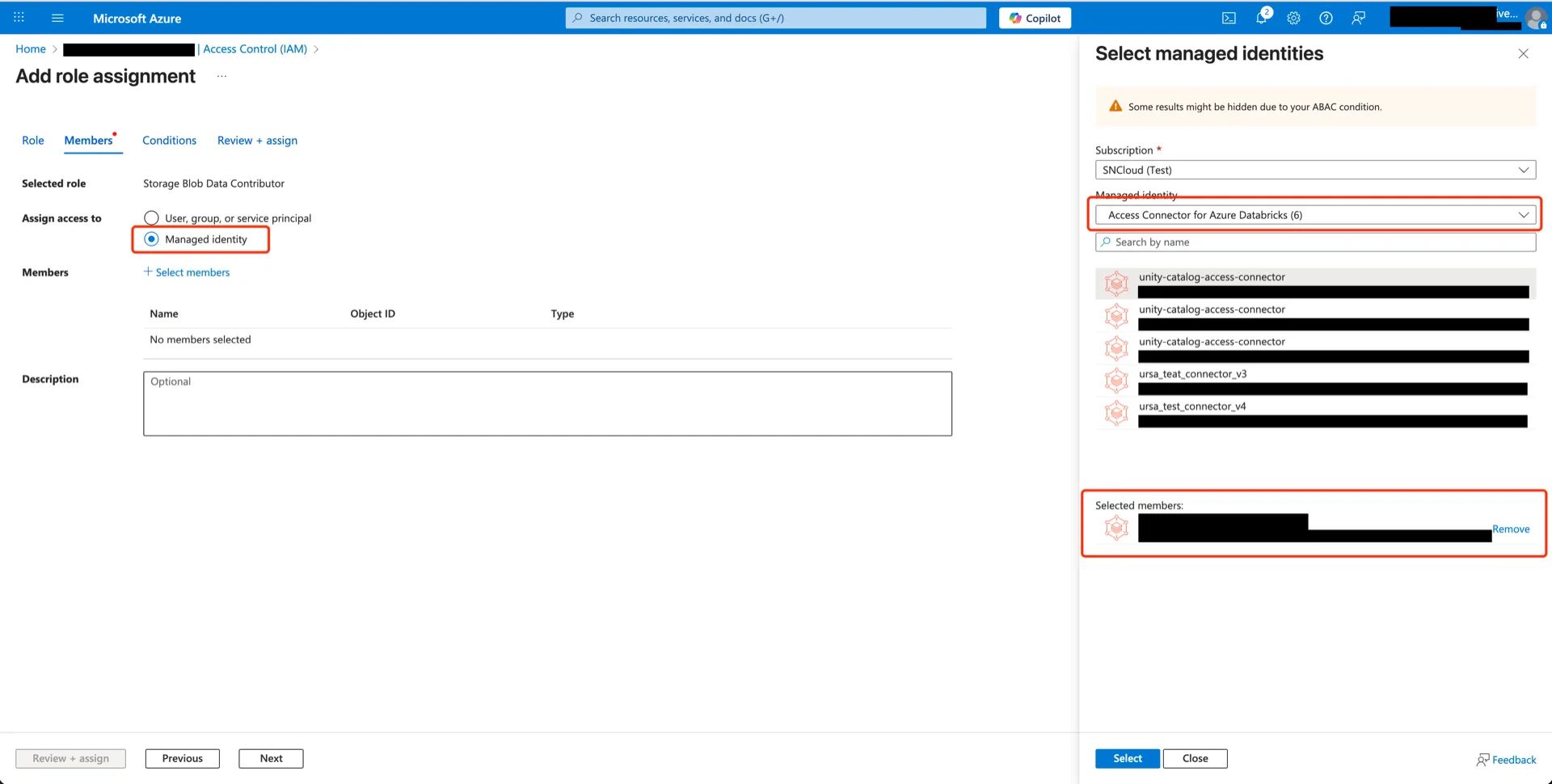

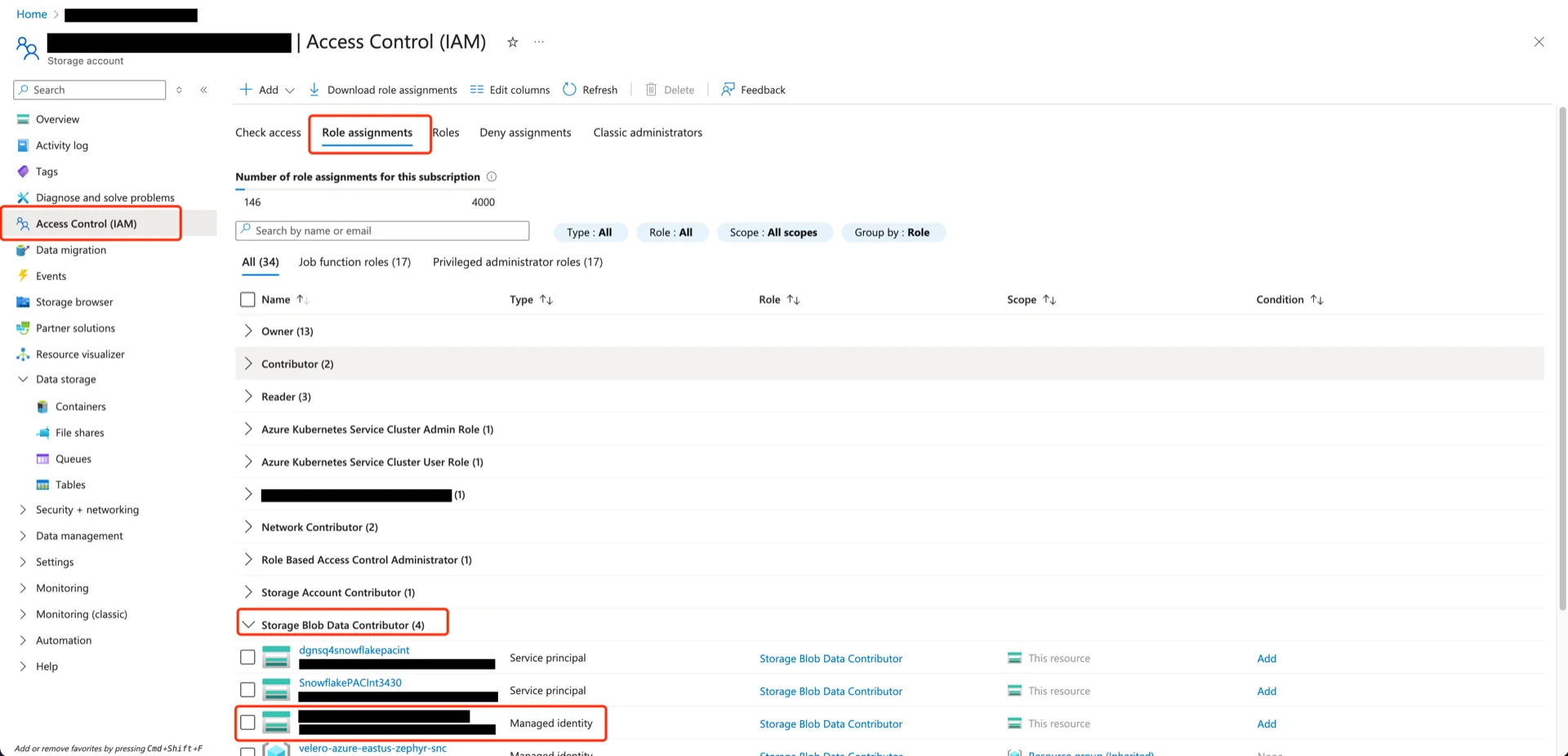

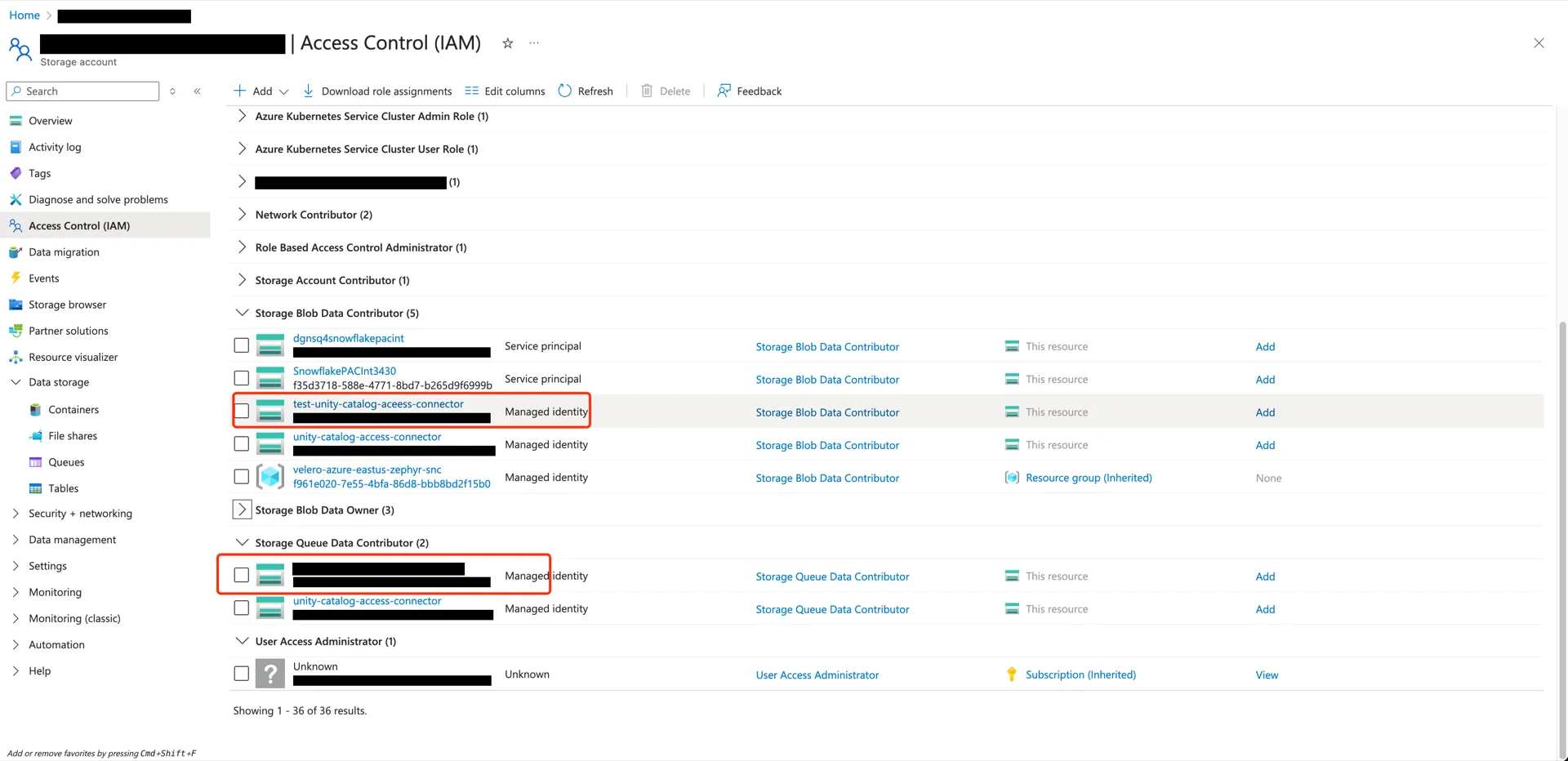

2. Grant Storage Blob Data Contributor to the Connector

Open the storage account that will hold the Delta tables, navigate to Access Control (IAM) -> Add -> Add role assignment.

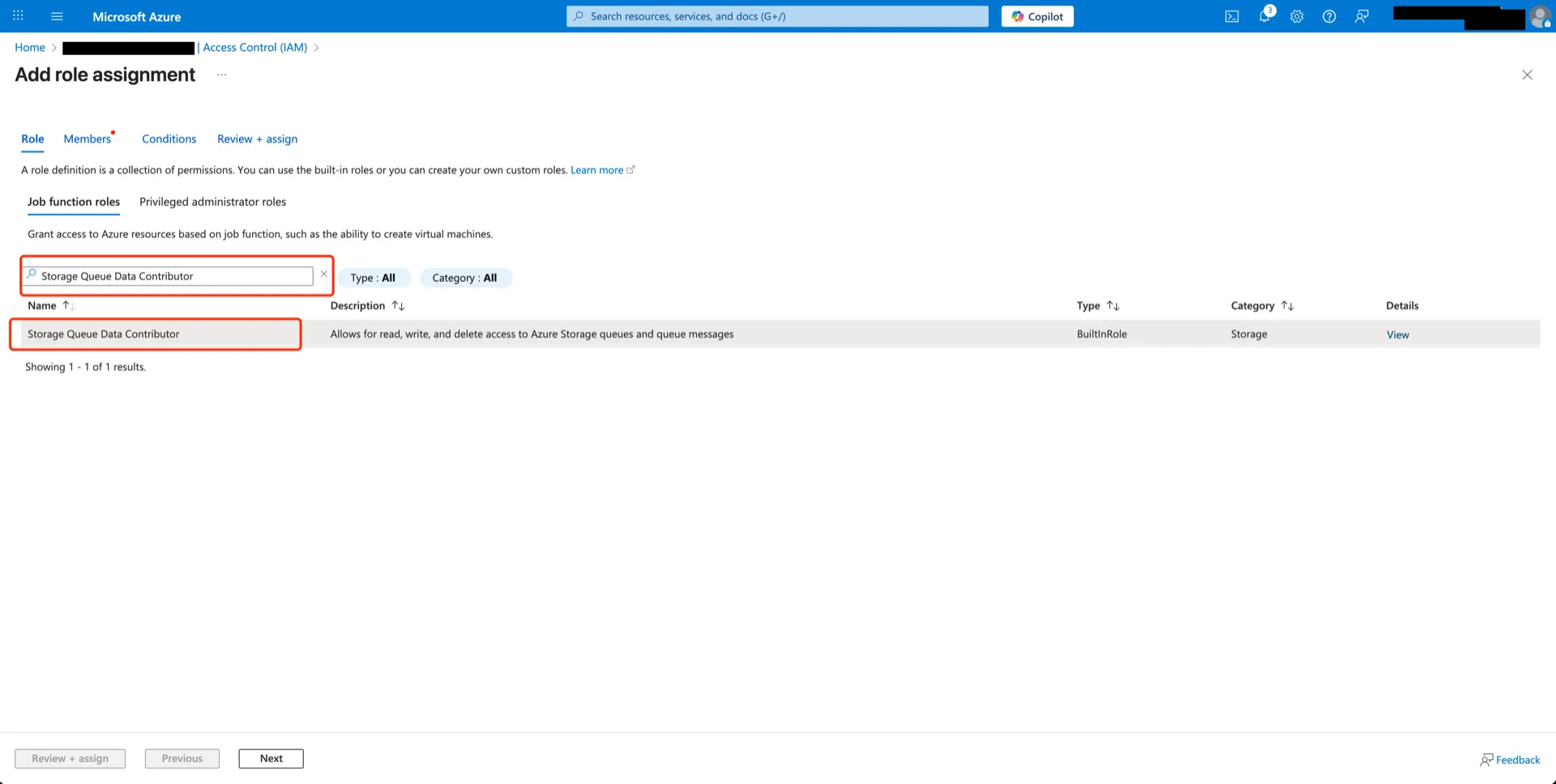

3. Grant Storage Queue Data Contributor to the Connector

Repeat the process from step 2 with the Storage Queue Data Contributor role.
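The guide performs both role assignments in the Azure portal. As a rough command-line equivalent, the sketch below only assembles the two `az role assignment create` commands for steps 2 and 3 and prints them; it does not call Azure. The function names and every placeholder value (subscription ID, resource group, storage account, connector principal ID) are hypothetical and not taken from this guide.

```python
import shlex

# The two roles granted to the Access Connector in steps 2 and 3.
ROLES = ("Storage Blob Data Contributor", "Storage Queue Data Contributor")


def storage_account_scope(subscription_id: str, resource_group: str, storage_account: str) -> str:
    """Return the Azure resource ID of a storage account, used as the role scope."""
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.Storage/storageAccounts/{storage_account}"
    )


def role_assignment_commands(principal_object_id: str, scope: str) -> list[list[str]]:
    """Build one `az role assignment create` command per required role."""
    return [
        [
            "az", "role", "assignment", "create",
            "--assignee-object-id", principal_object_id,
            "--assignee-principal-type", "ServicePrincipal",
            "--role", role,
            "--scope", scope,
        ]
        for role in ROLES
    ]


if __name__ == "__main__":
    # Placeholder values (assumptions); substitute your own before running the output.
    scope = storage_account_scope("<subscription-id>", "<resource-group>", "<storage-account>")
    for cmd in role_assignment_commands("<connector-principal-id>", scope):
        print(shlex.join(cmd))
```

Generating both commands from one list of roles keeps the scope and the assignee identical across the two assignments, which is the point of "repeat the process" in step 3.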

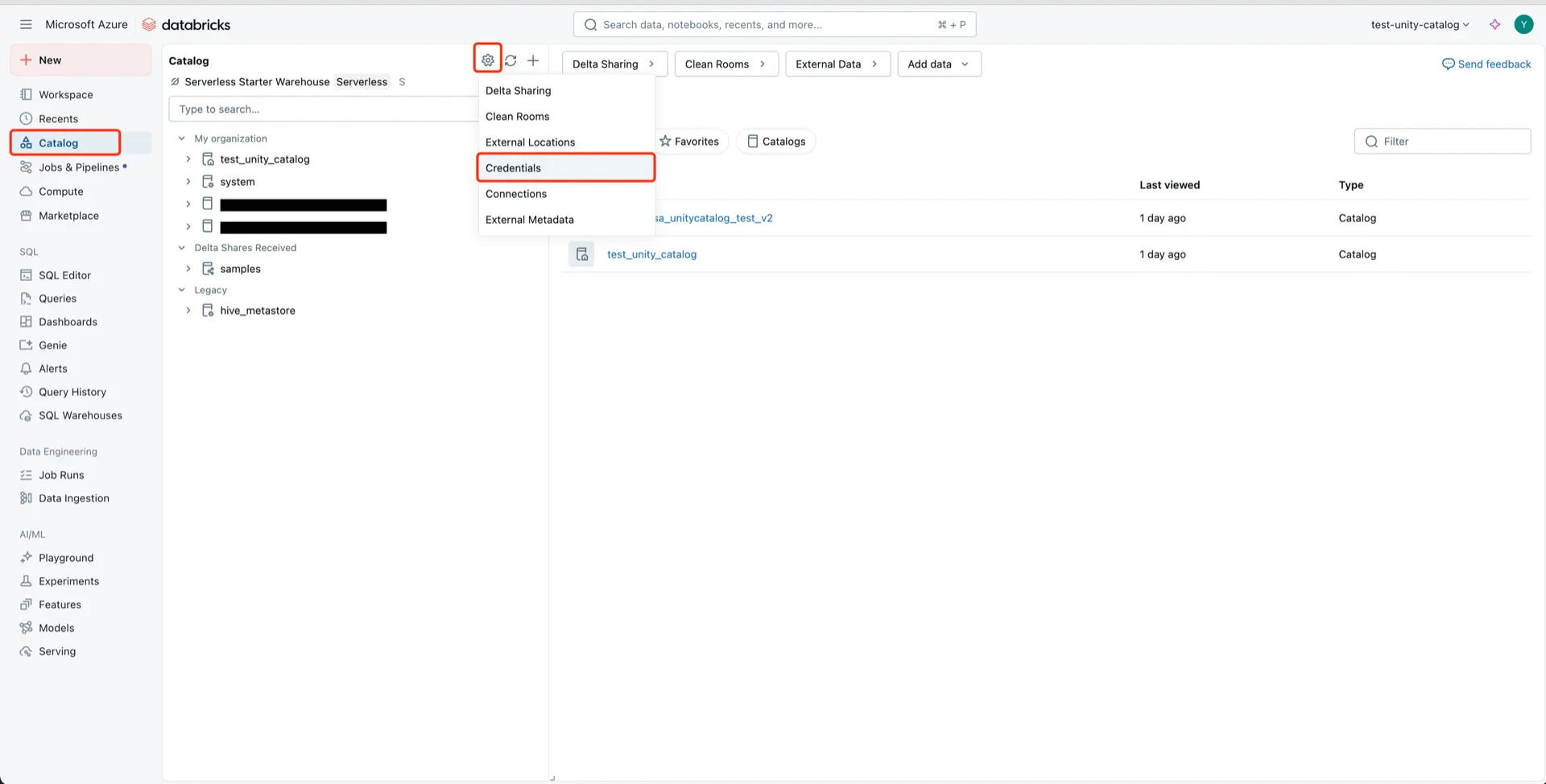

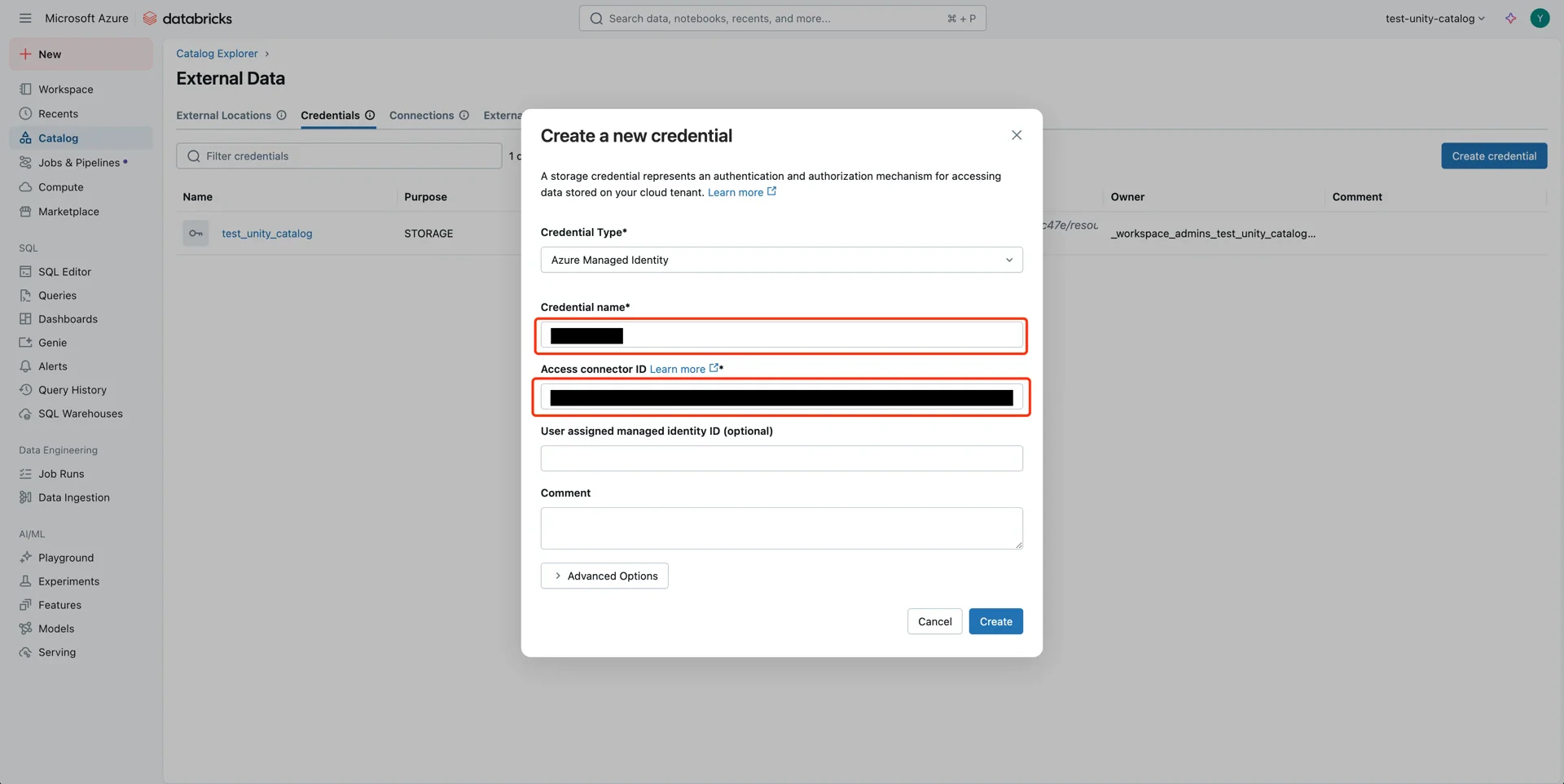

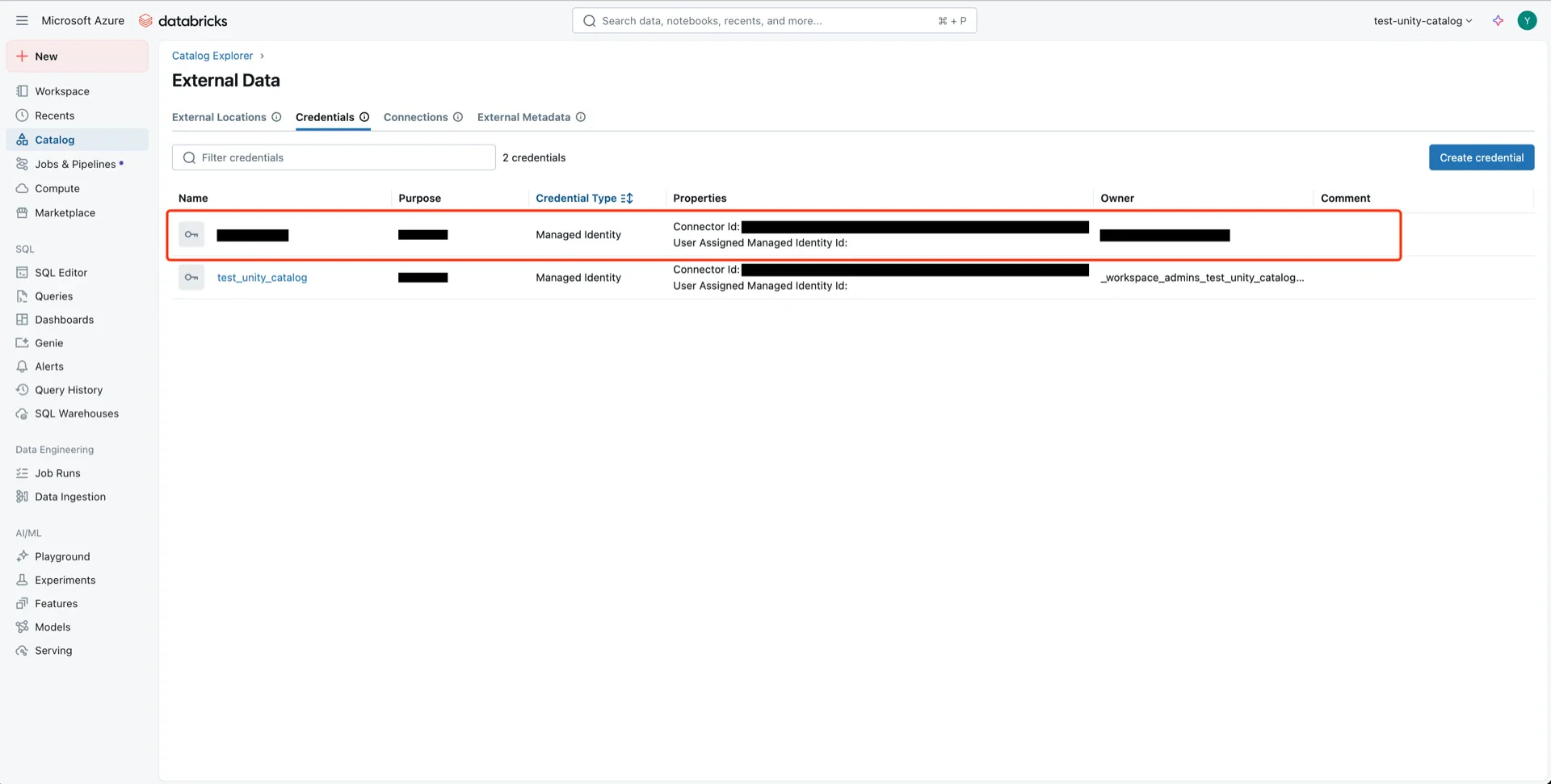

4. Create a Storage Credential in Unity Catalog

In the Databricks Catalog console, navigate to Catalog -> Settings -> Credentials.

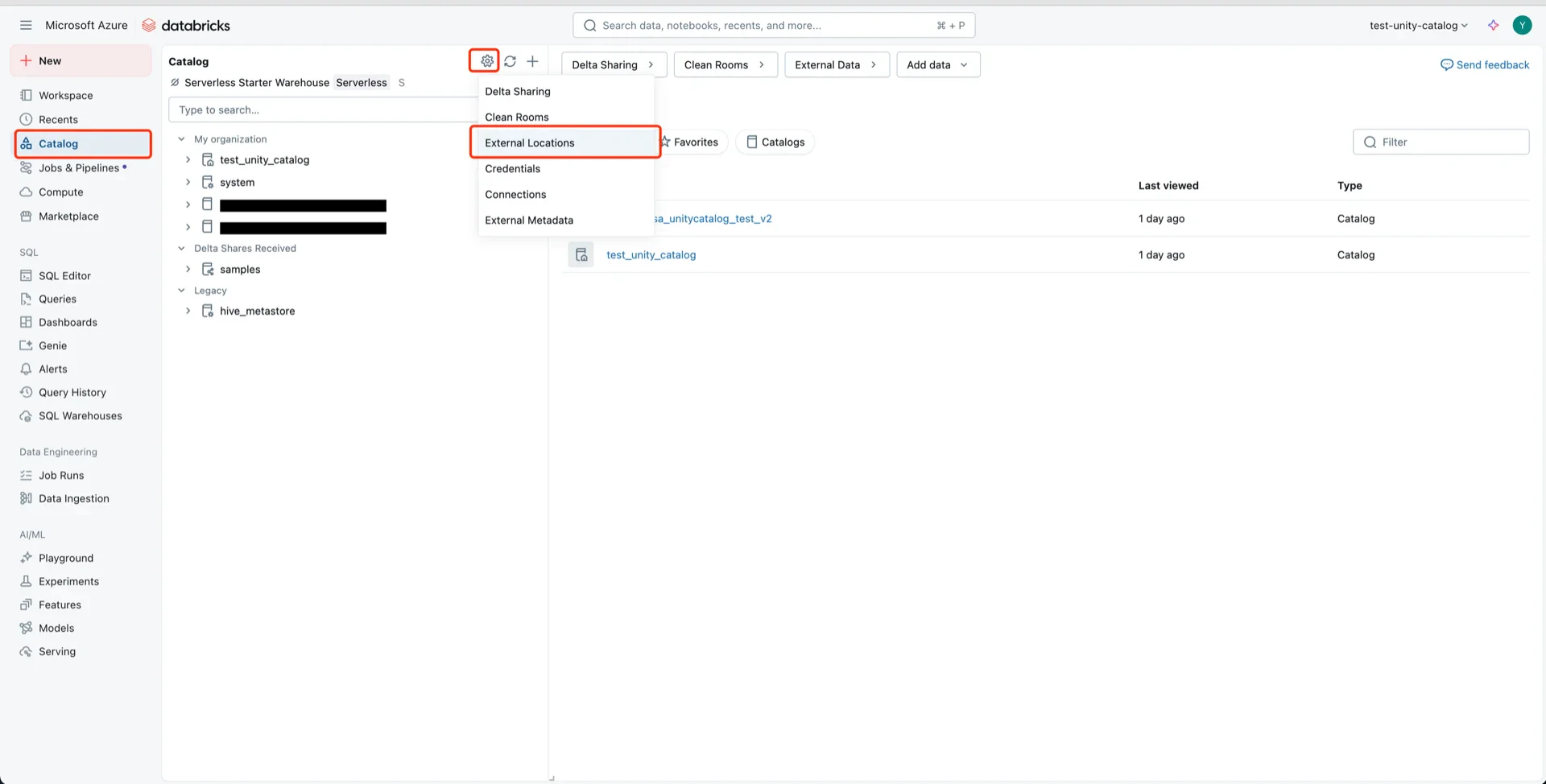

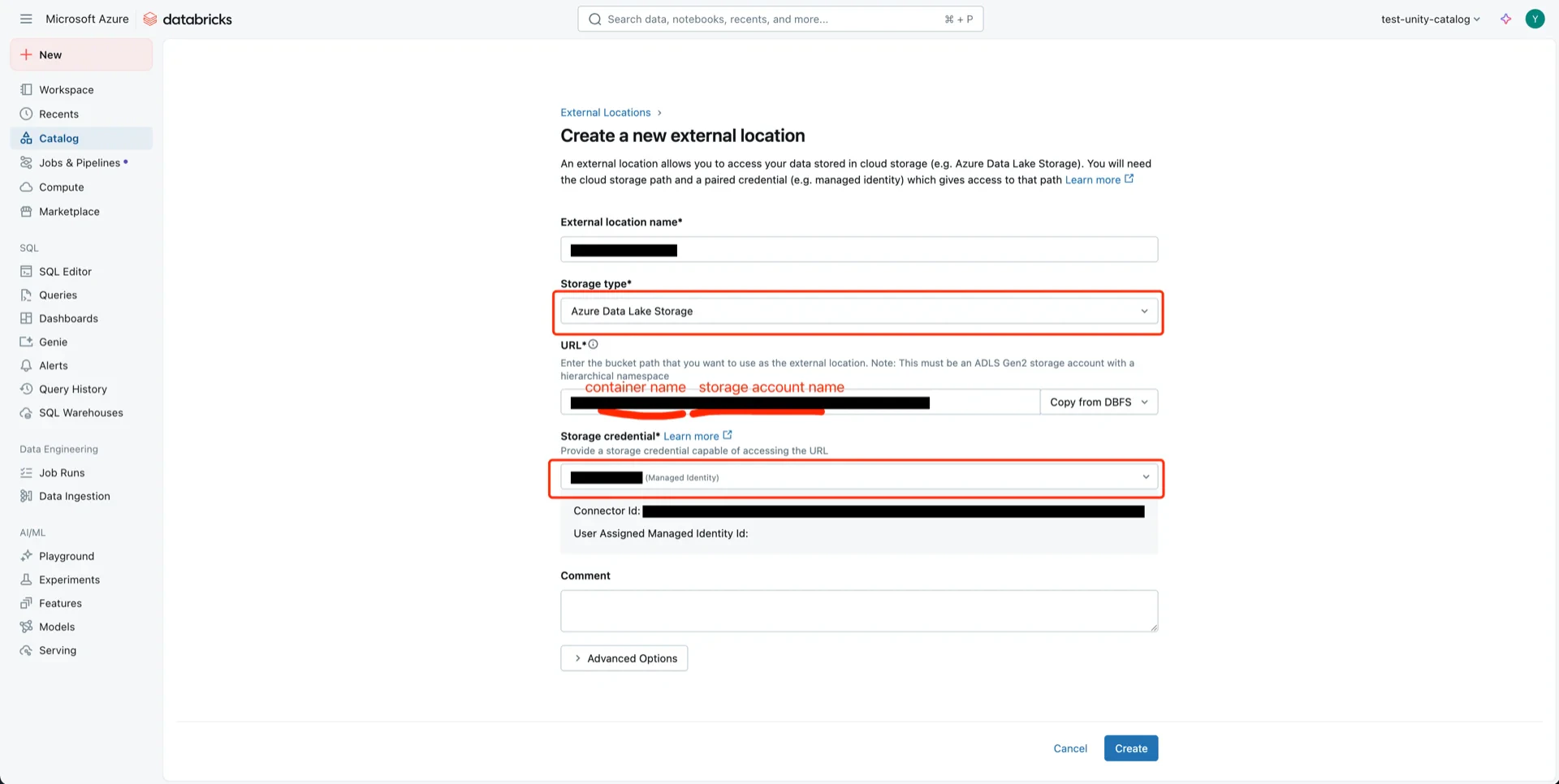

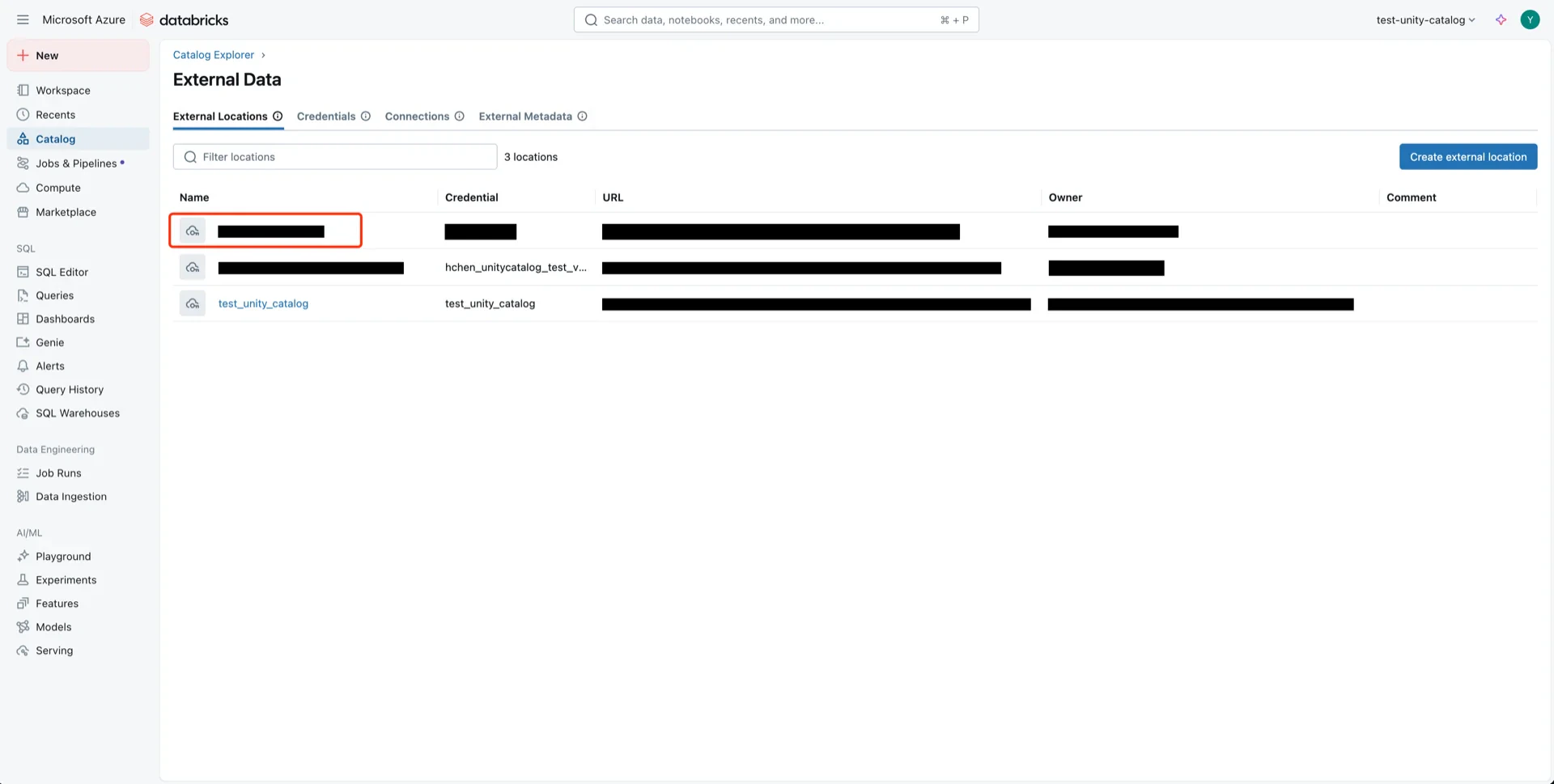

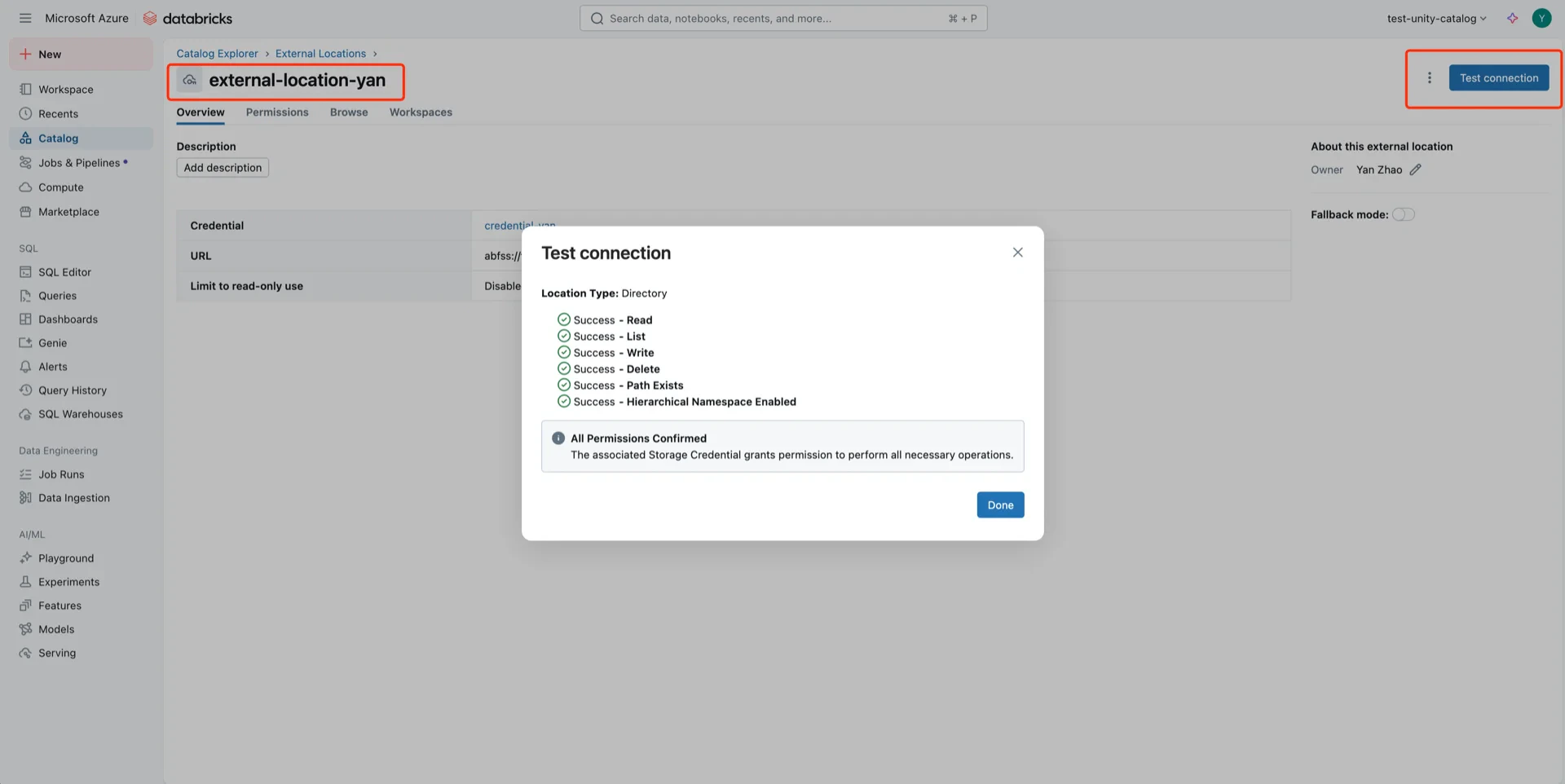

5. Create an External Location

In the Databricks Catalog console, create a new external location.

- Storage type: Azure Data Lake Storage

- URL: abfss://<container>@<storage-account>.dfs.core.windows.net

- Storage credential: the credential created in step 4
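The external location URL follows a fixed pattern, so it can be assembled and sanity-checked before it is pasted into the console. The following is a minimal sketch, not part of the StreamNative or Databricks tooling: the helper name `abfss_url` and the optional `path` argument are assumptions, and the validation reflects Azure's general naming rules for storage accounts and containers.

```python
import re


def abfss_url(container: str, storage_account: str, path: str = "") -> str:
    """Build an abfss:// URL for a container in an ADLS Gen2 storage account."""
    # Storage account names: 3-24 characters, lowercase letters and digits only.
    if not re.fullmatch(r"[a-z0-9]{3,24}", storage_account):
        raise ValueError(f"invalid storage account name: {storage_account!r}")
    # Container names: 3-63 characters, lowercase letters, digits, and single
    # hyphens; must start and end with a letter or digit.
    if not (3 <= len(container) <= 63) or not re.fullmatch(
        r"[a-z0-9]+(?:-[a-z0-9]+)*", container
    ):
        raise ValueError(f"invalid container name: {container!r}")

    url = f"abfss://{container}@{storage_account}.dfs.core.windows.net"
    if path:
        # Normalize so the result has exactly one slash before the path.
        url += "/" + path.strip("/")
    return url


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    print(abfss_url("delta-tables", "ursastorage"))
```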

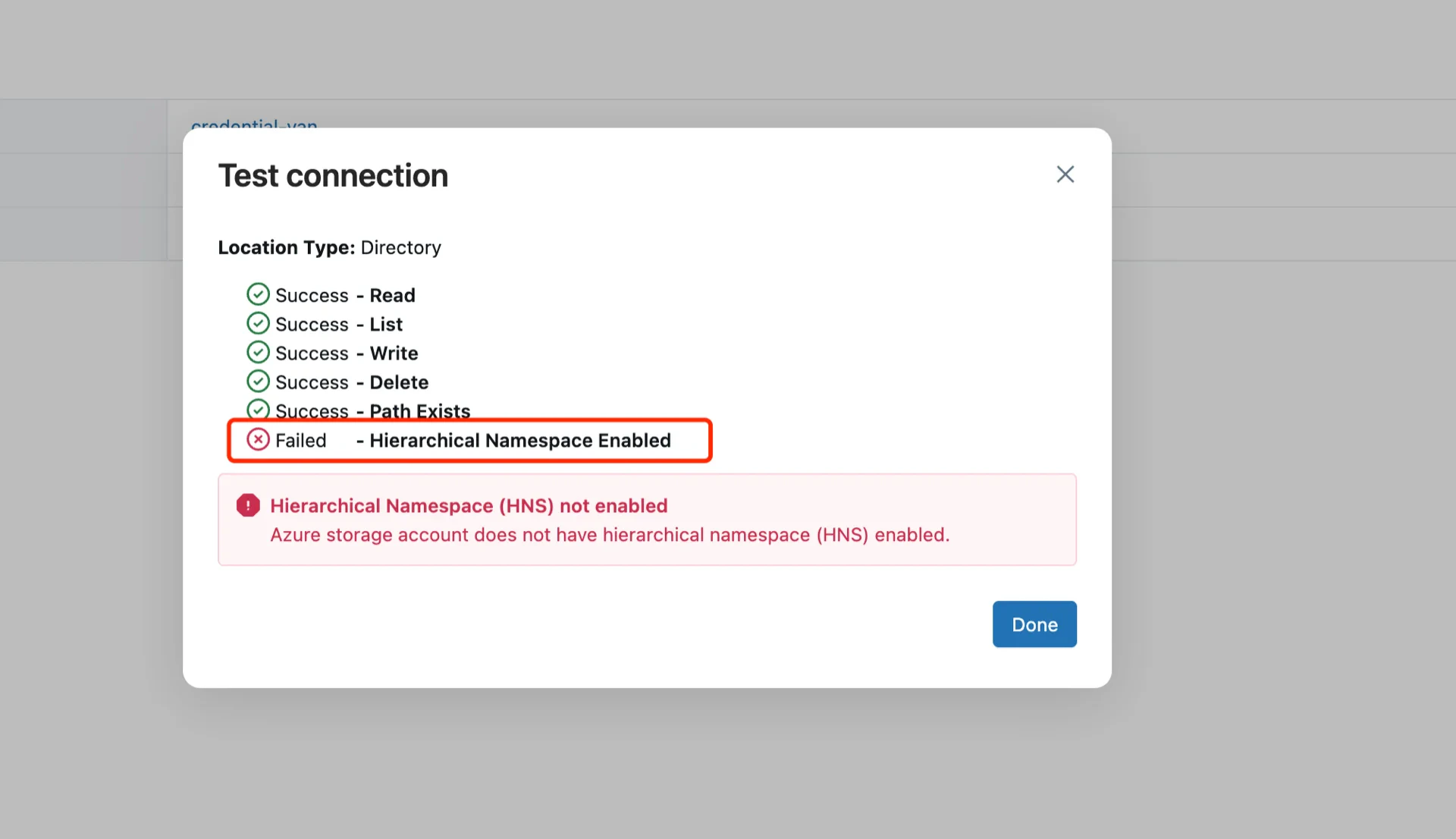

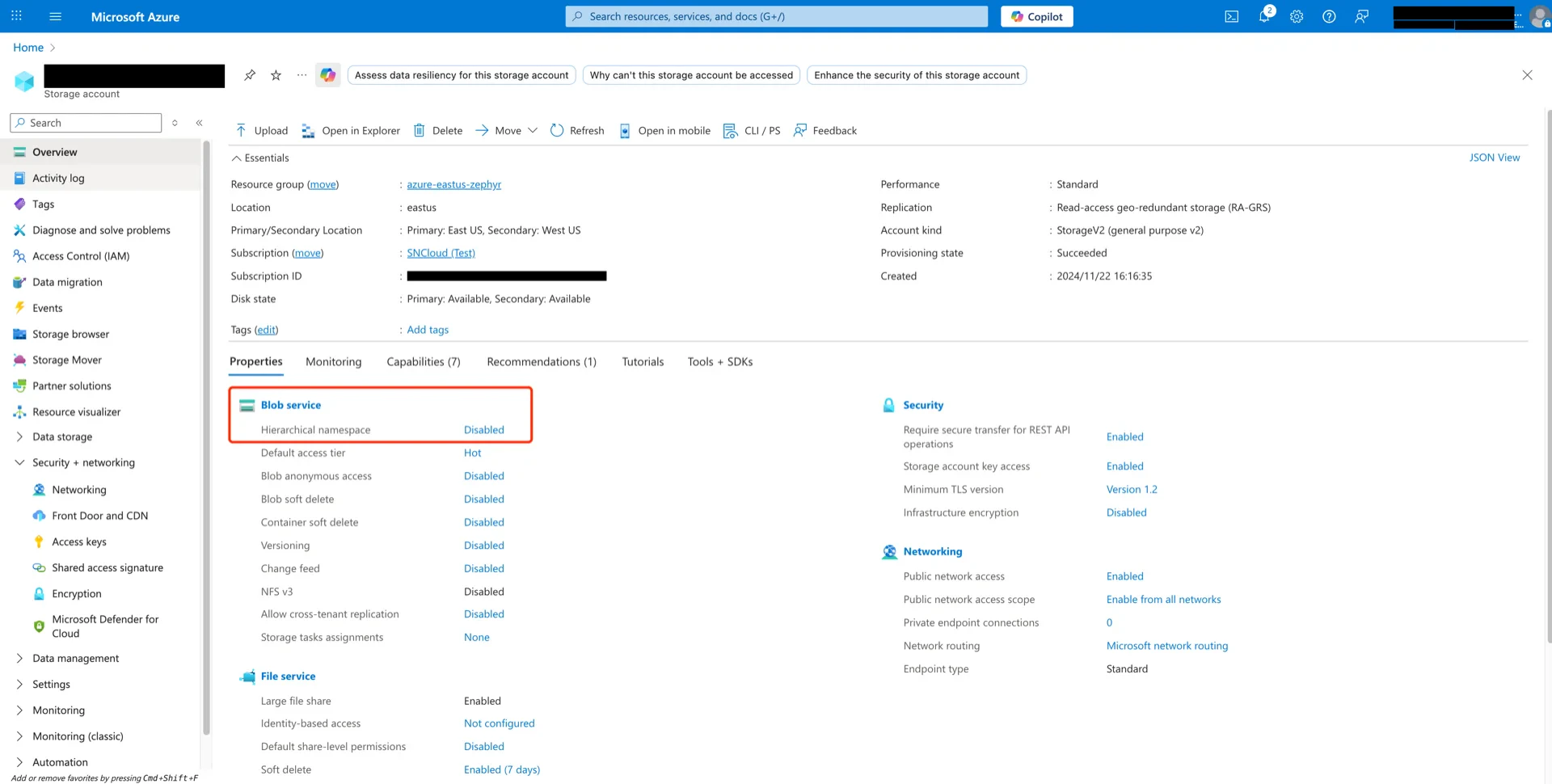

Troubleshooting: If the test fails with a Hierarchical Namespace Enabled error, ensure that Hierarchical namespace is enabled on the storage account.

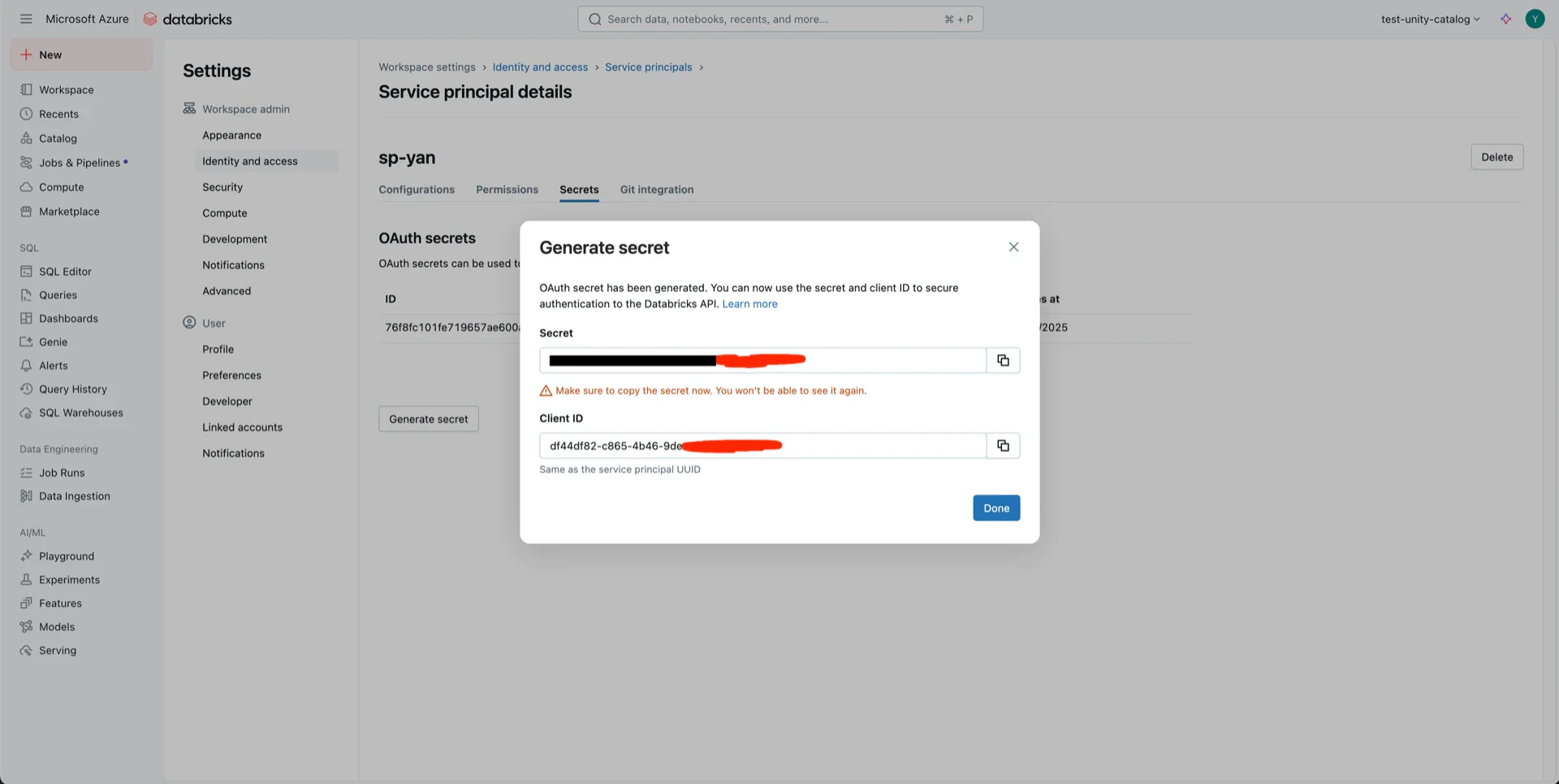

6. Create a Service Principal

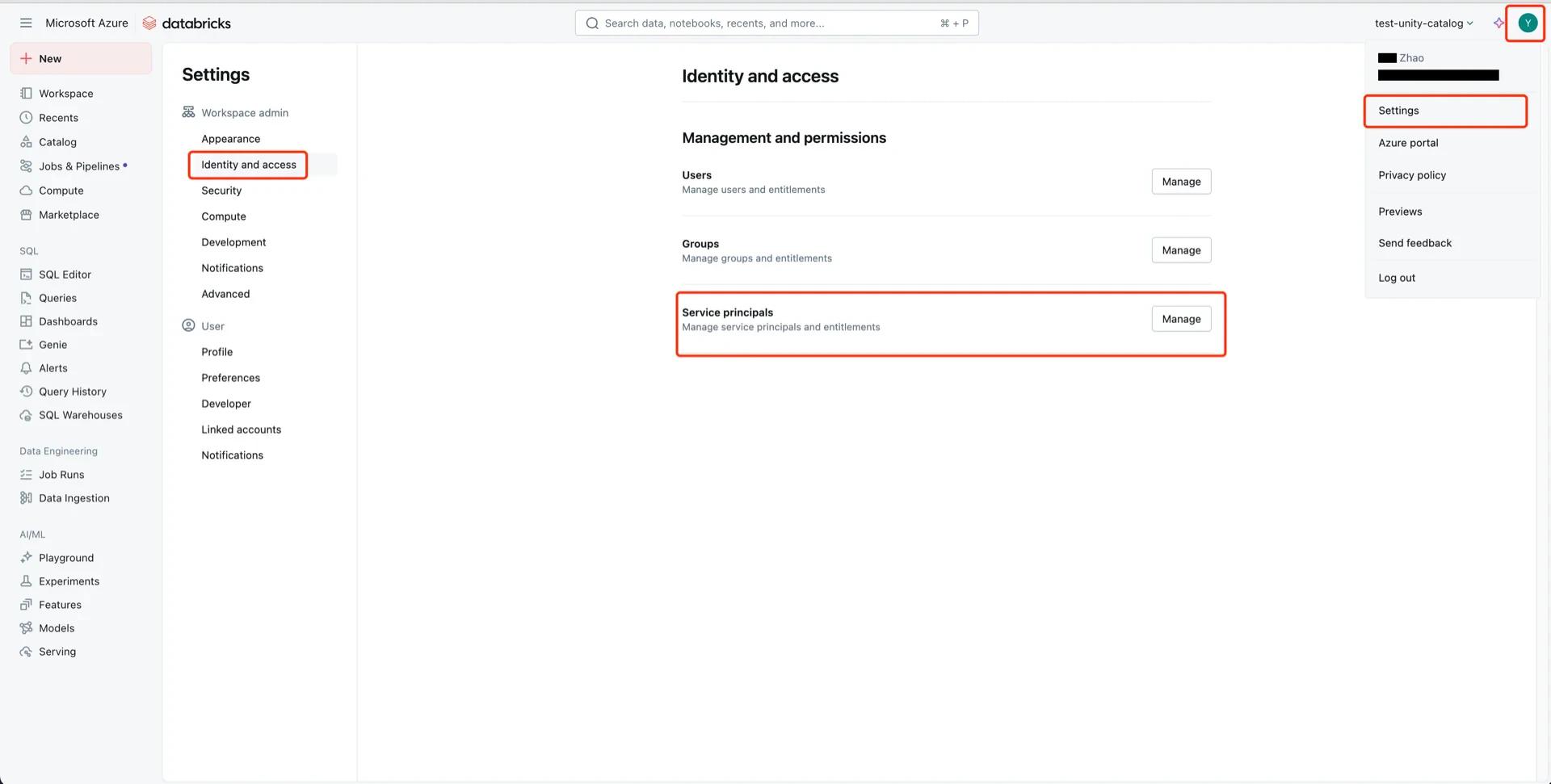

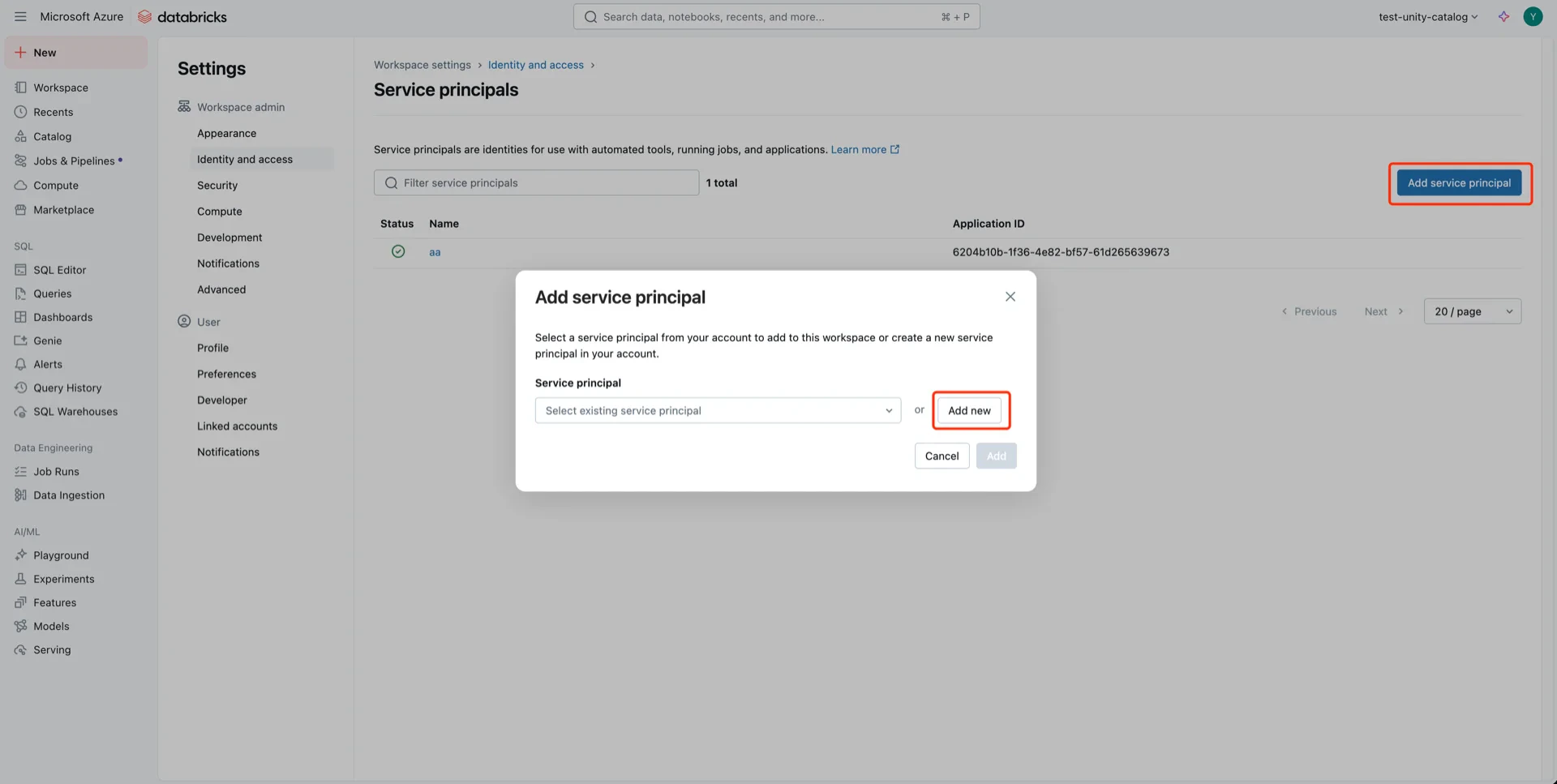

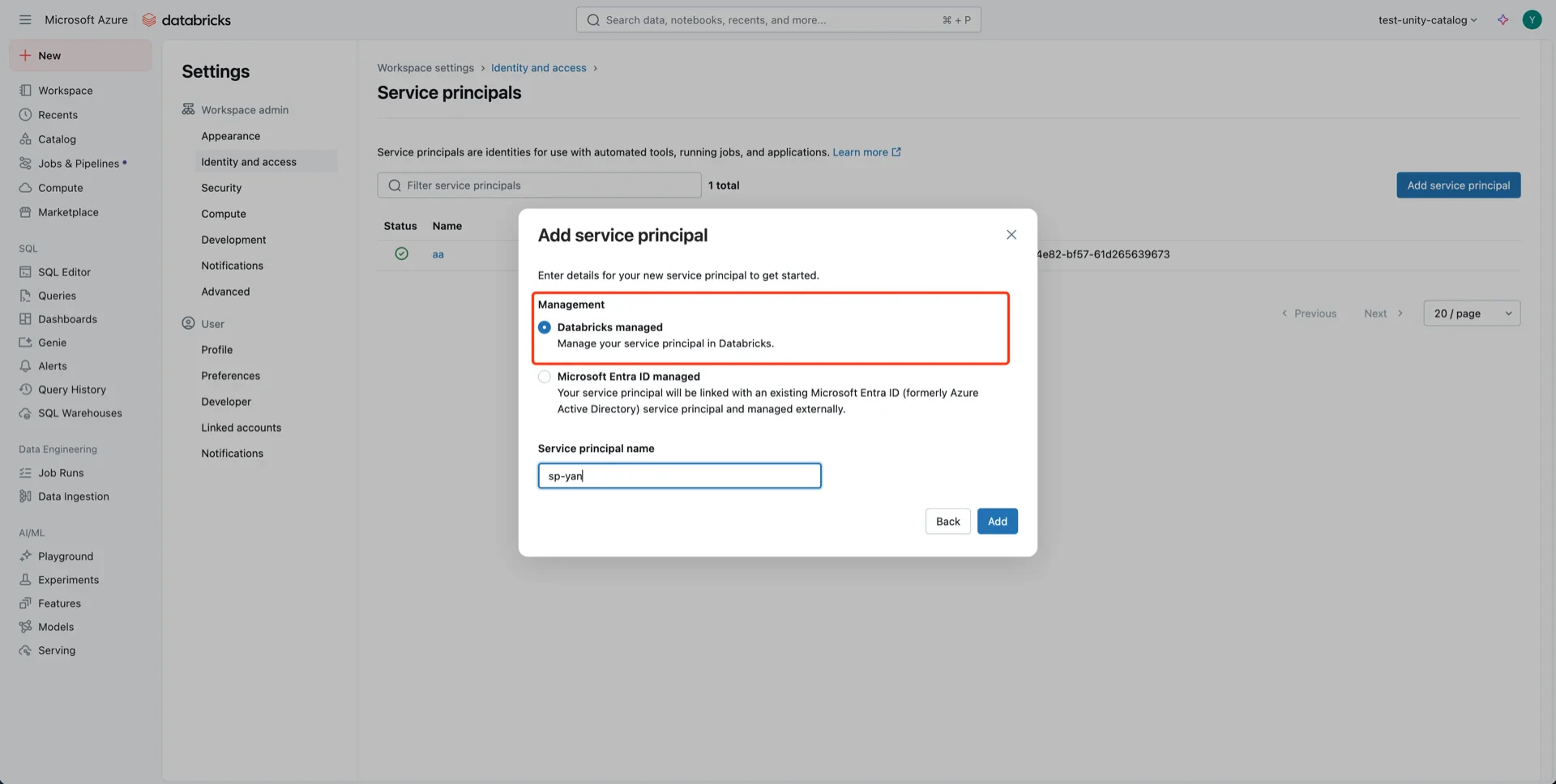

Navigate to User -> Settings -> Identity and access -> Service principals -> Manage.

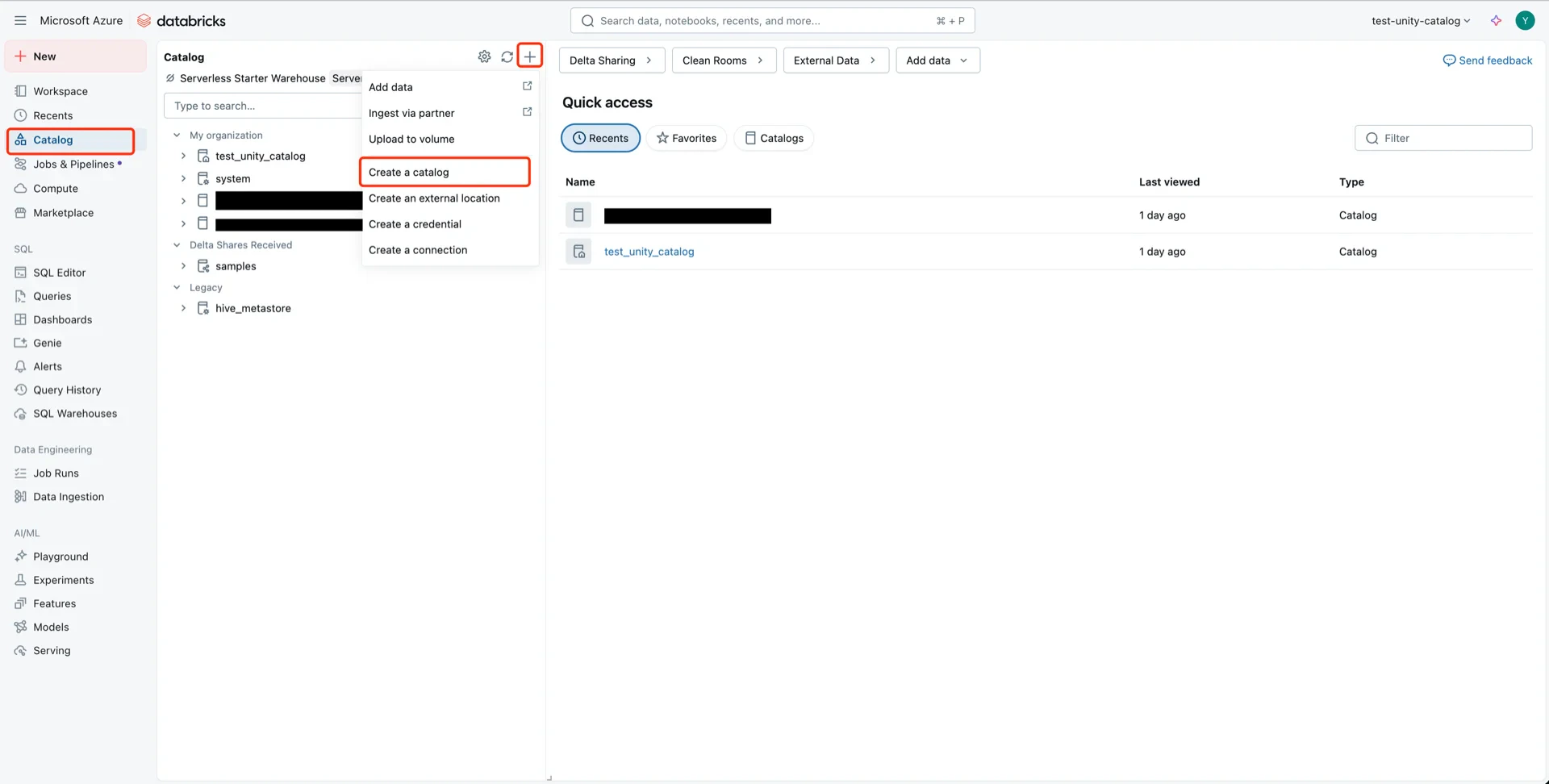

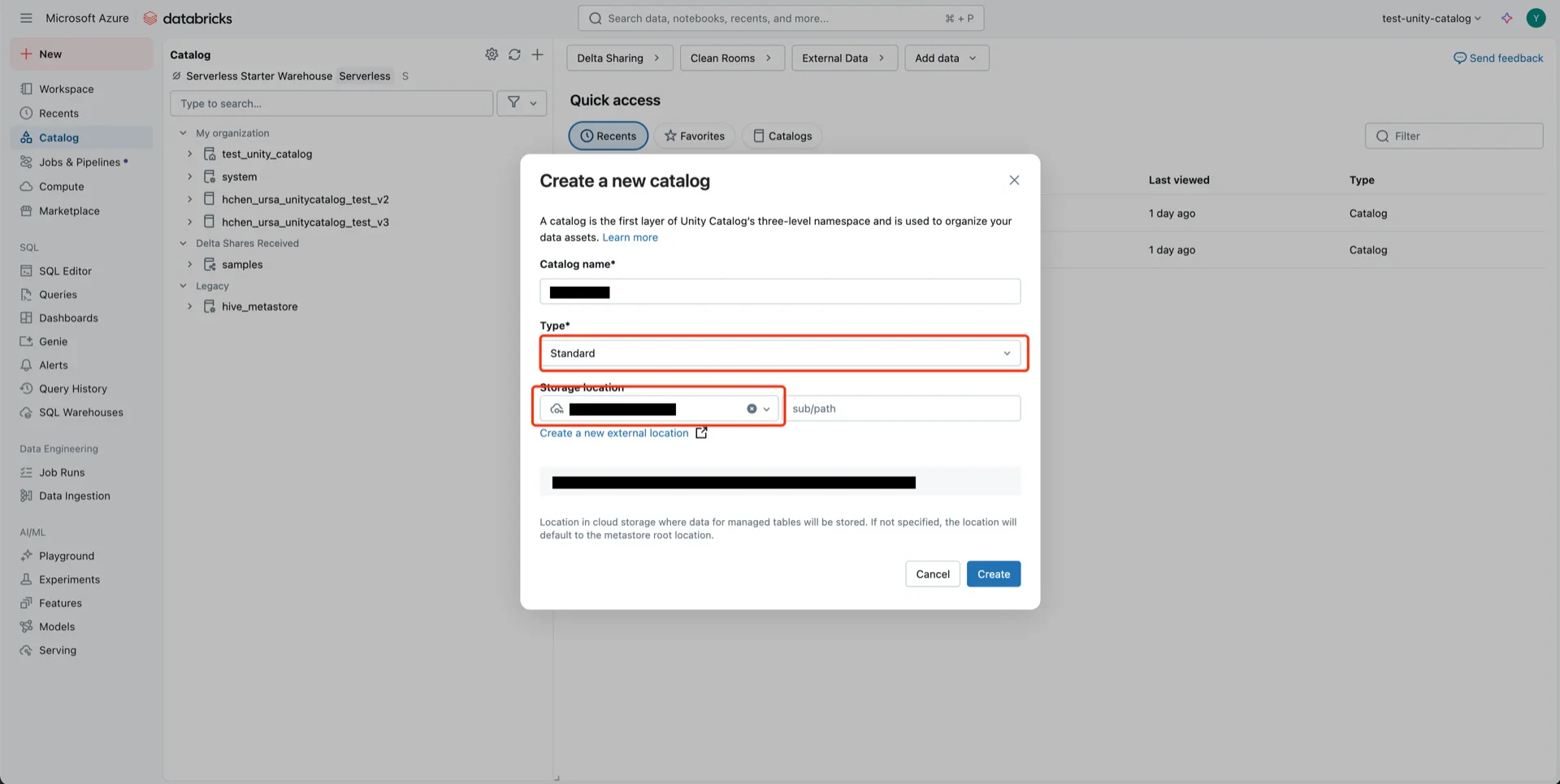

7. Create the Catalog

Create a new Catalog with Type: Standard and select the storage location created in step 5.

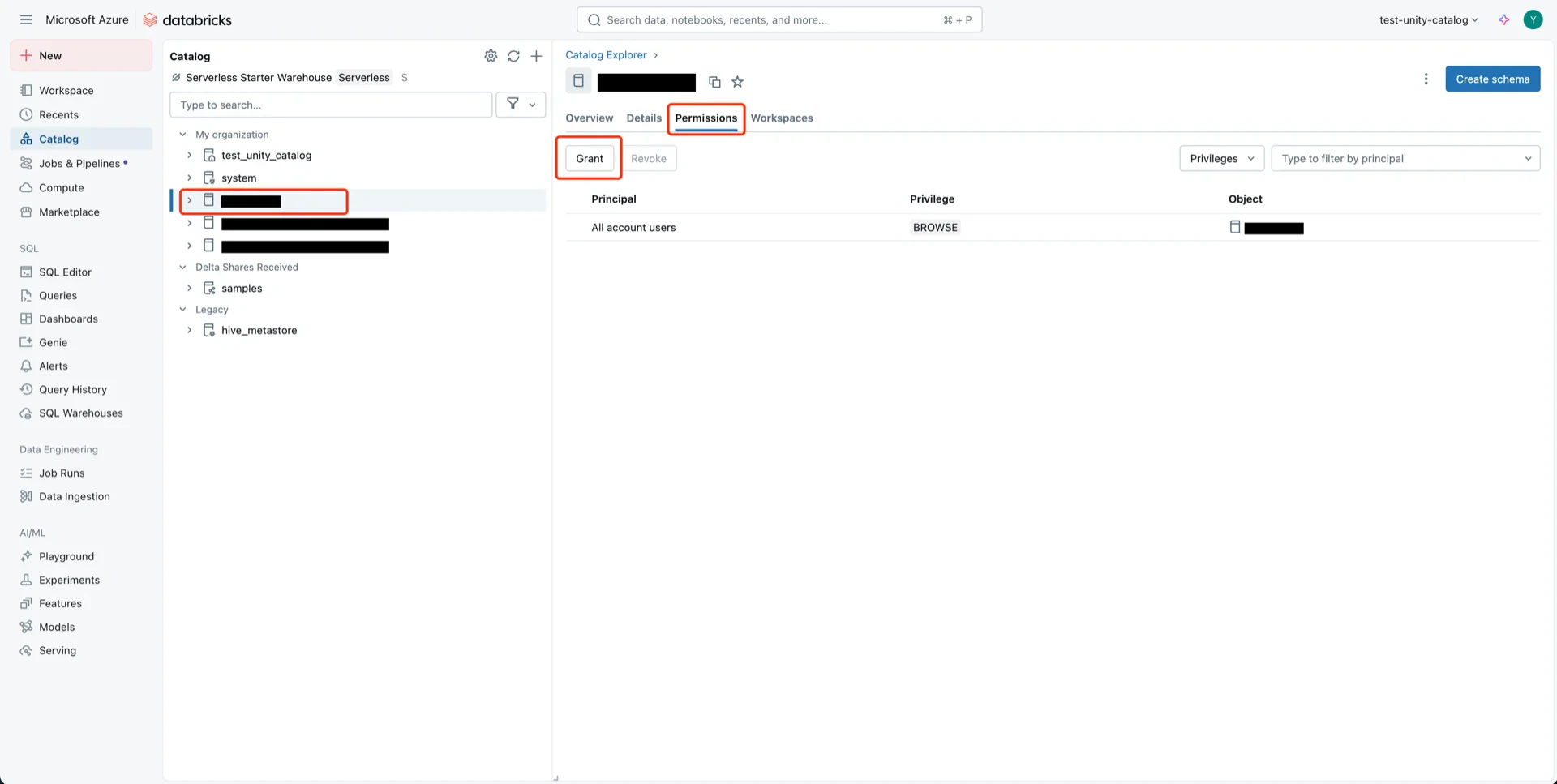

8. Grant Permissions to the Service Principal

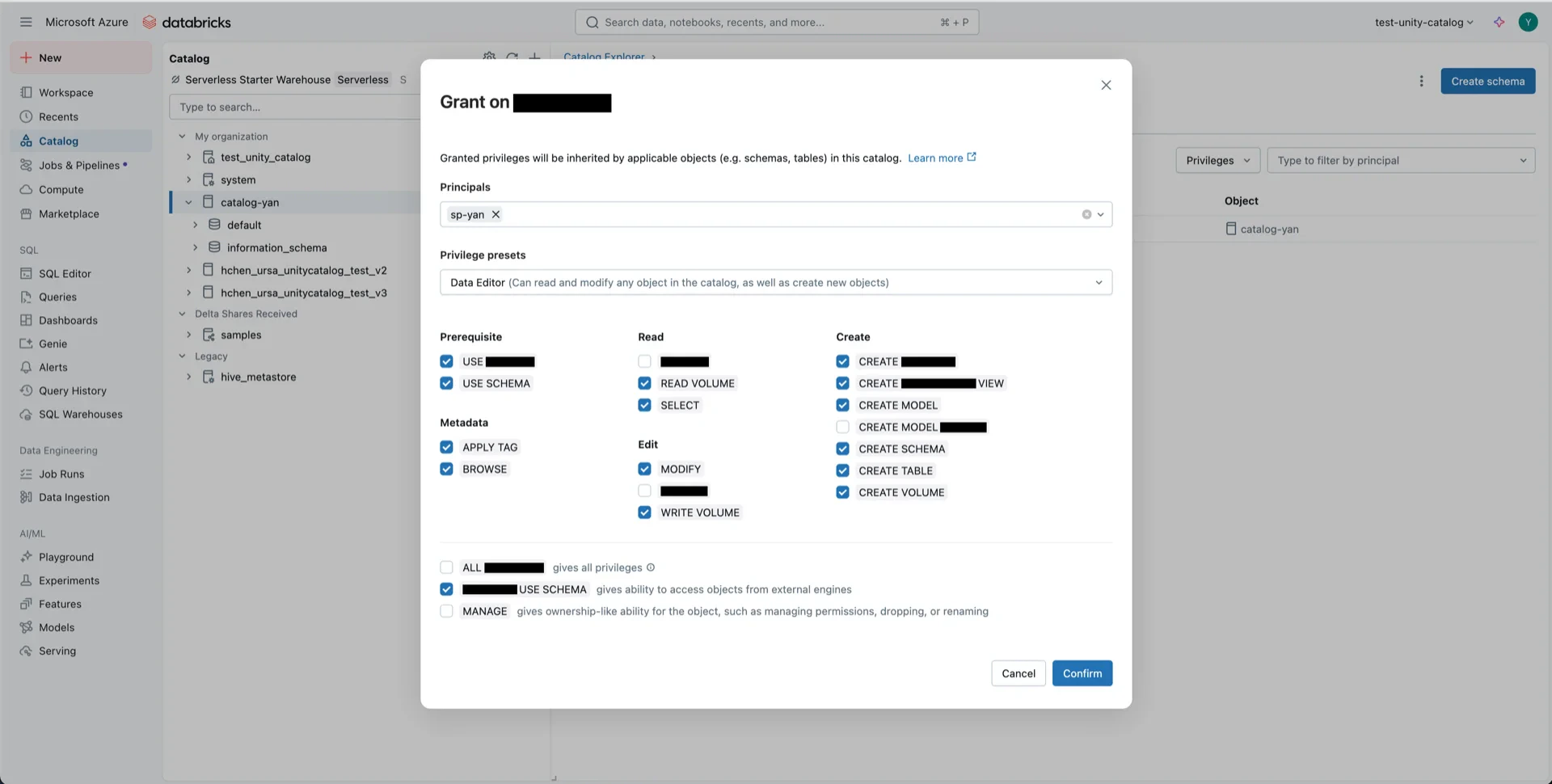

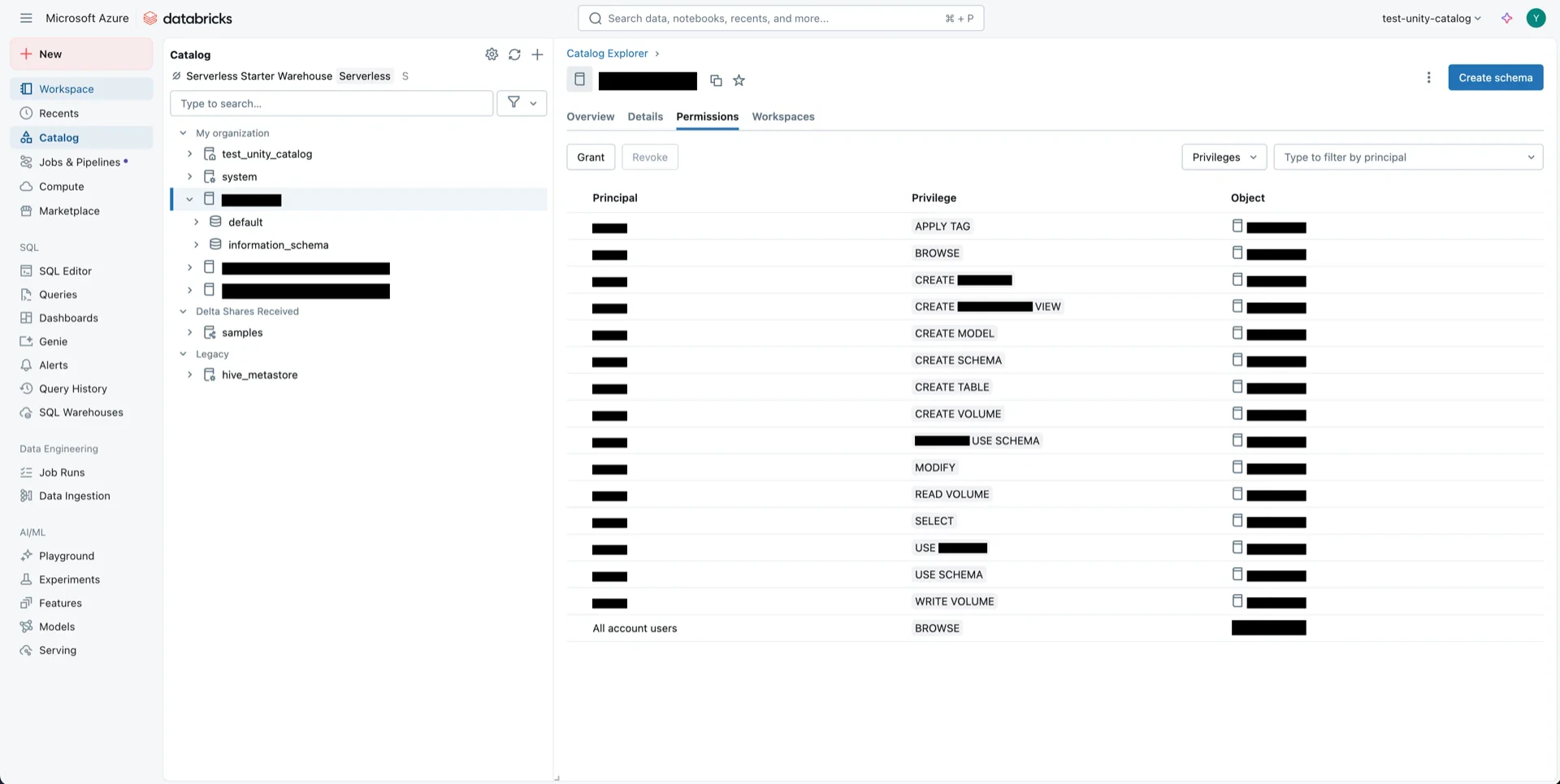

8.1 Catalog Permissions

Navigate to the new catalog and click Permissions -> Grant.

- Principals: the service principal from step 6

- Privilege presets: Data Editor

- EXTERNAL USE SCHEMA: Enabled

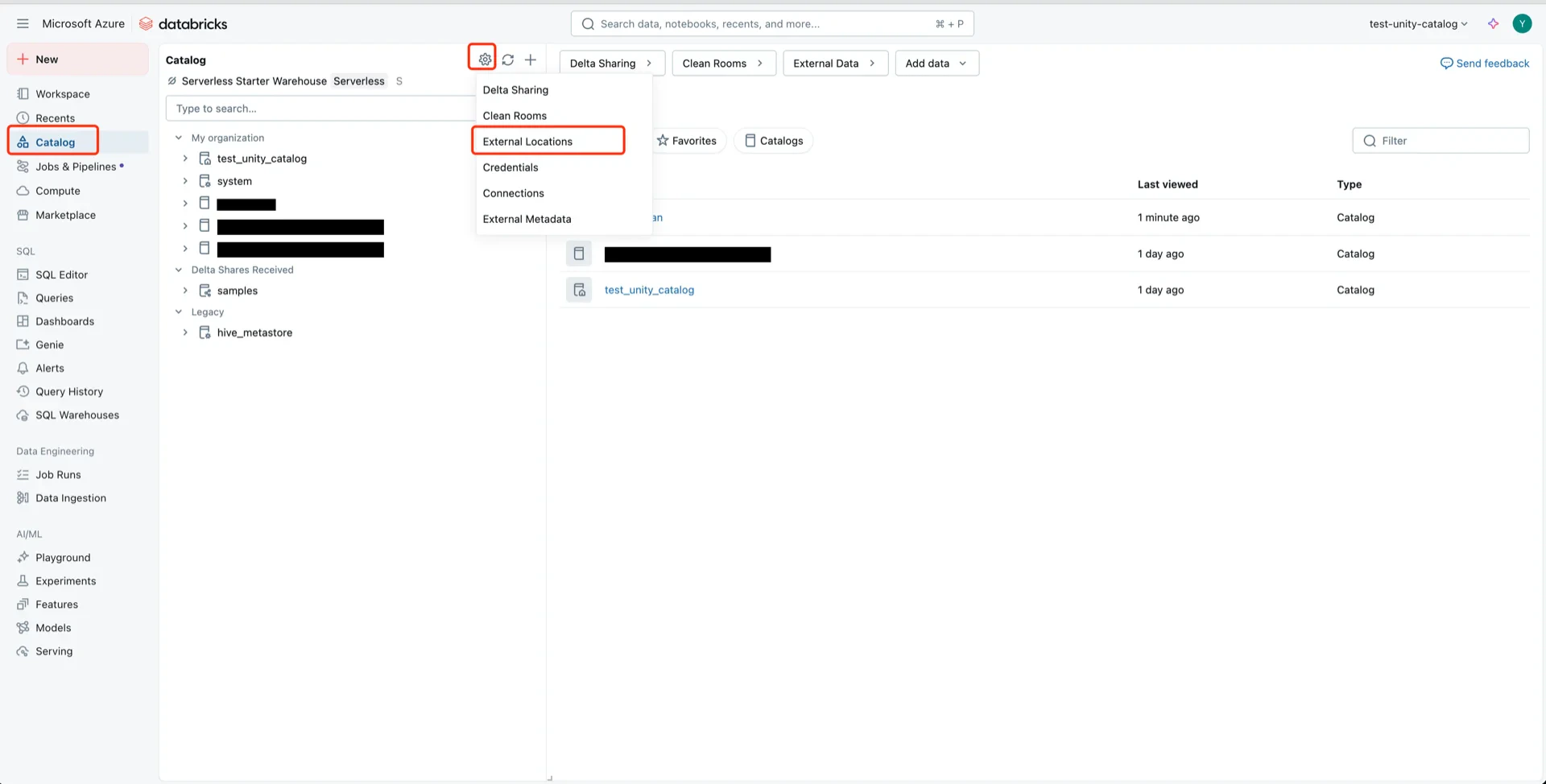

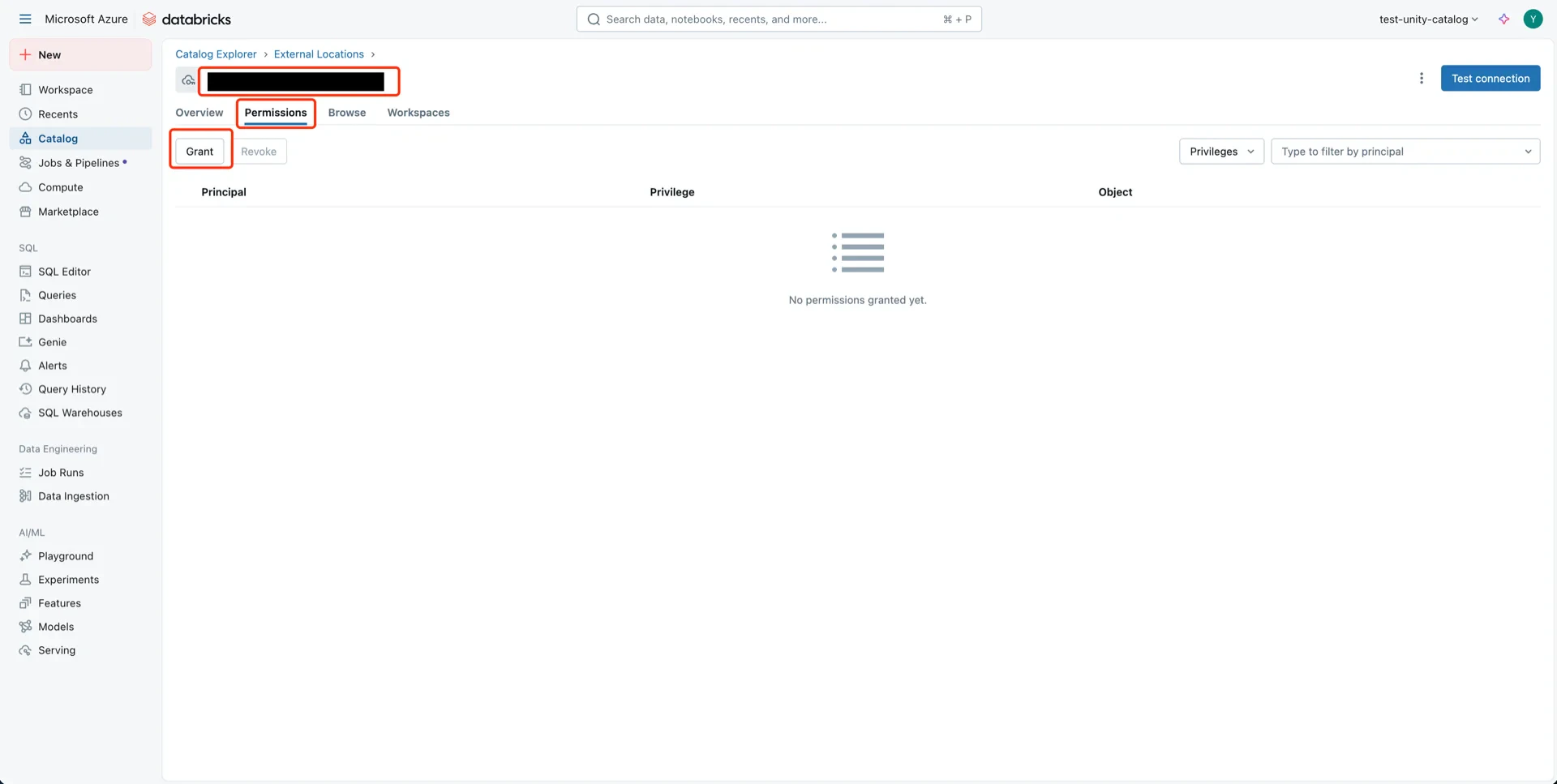

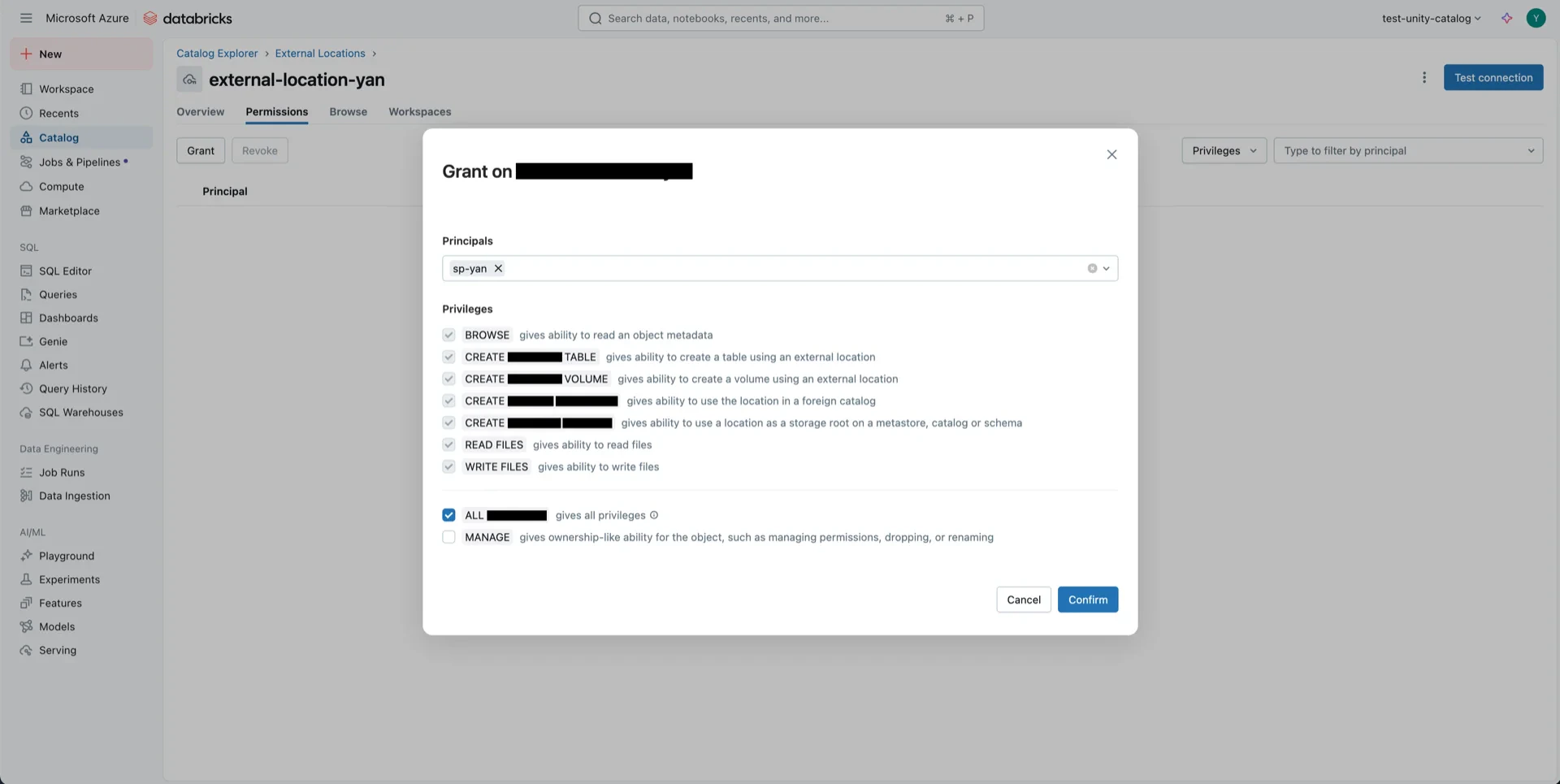

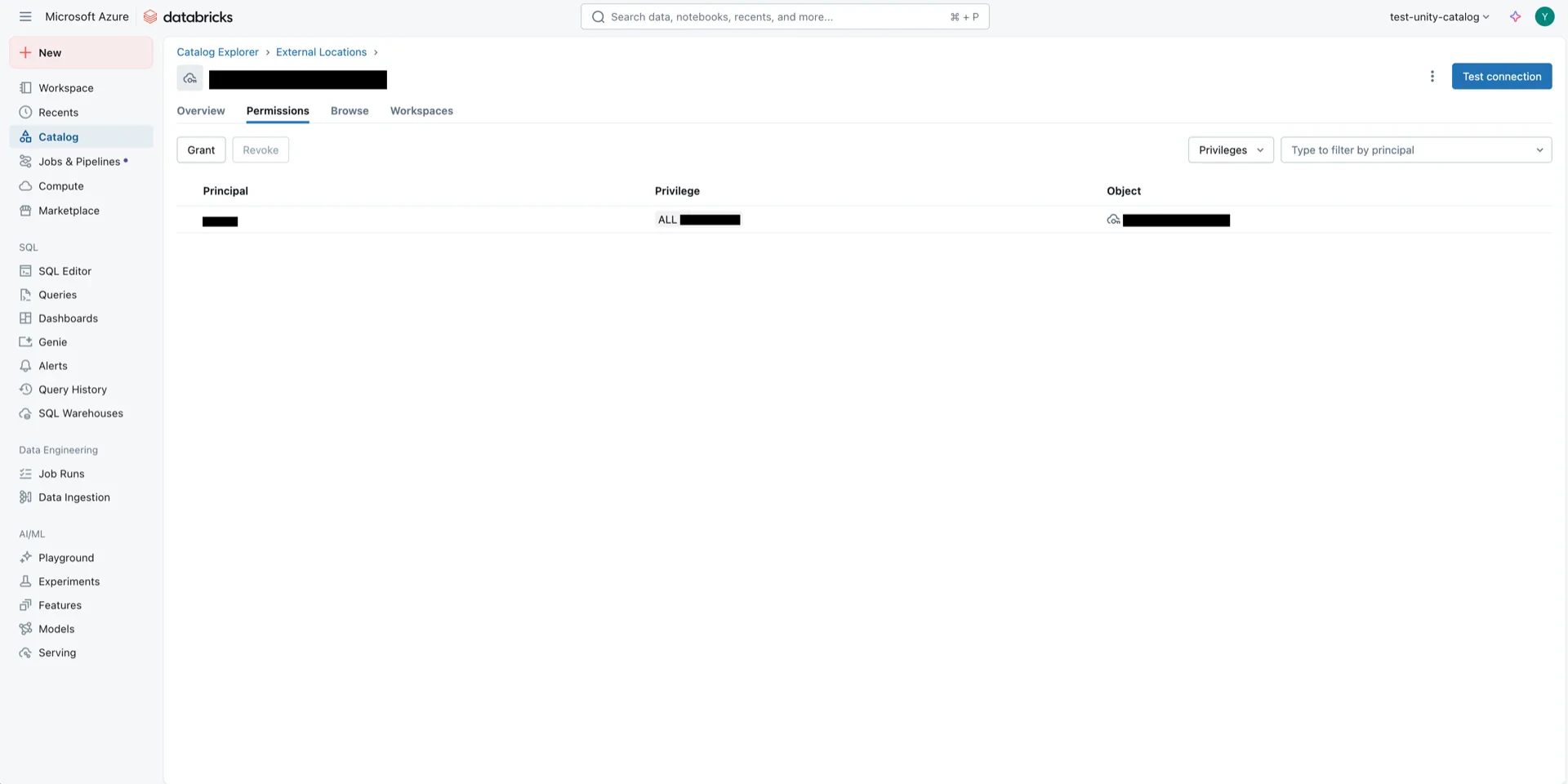

8.2 External Location Permissions

Open the external location from step 5.

Catalog Information Summary

When the steps above are complete, collect the following values for the StreamNative Ursa compaction service:| Value | Description |

|---|---|

unityCatalogUri | Databricks workspace URL (e.g., https://adb-<workspace-id>.azuredatabricks.net) |

unityCatalogName | The Unity Catalog name created in step 7 |

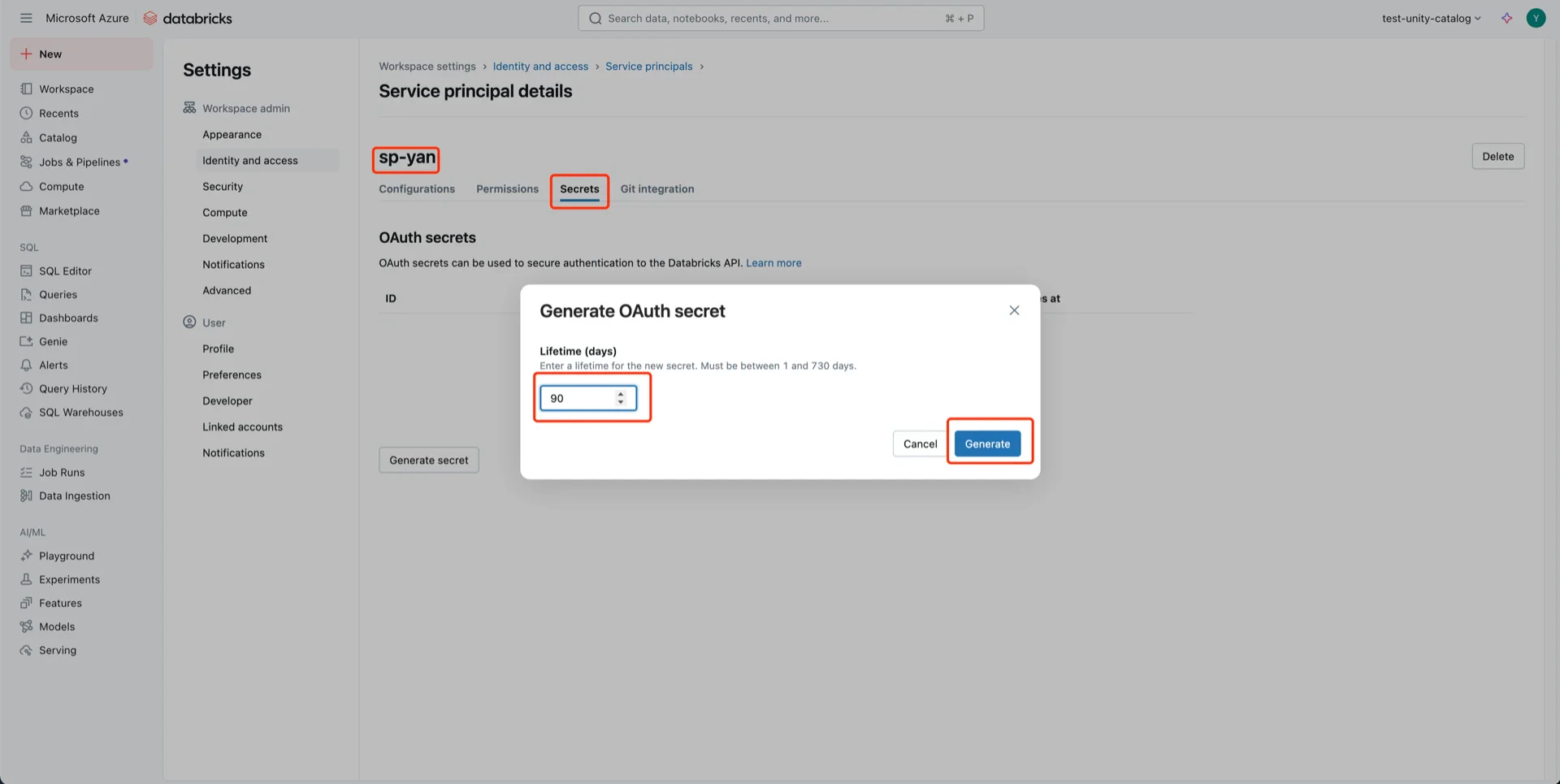

unityCatalogClientId / unityCatalogClientSecret | OAuth2 credentials from step 6 |
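Before handing these values over, it can help to gather them in one place and catch obvious mistakes, such as an empty field or a non-HTTPS workspace URL. The sketch below is one possible way to do that. The class `UnityCatalogSettings` and its methods are hypothetical; only the four key names come from this guide, and how the compaction service actually consumes them is not shown here.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass(frozen=True)
class UnityCatalogSettings:
    """Holds the four values collected in this guide (hypothetical helper)."""

    uri: str
    catalog_name: str
    client_id: str
    client_secret: str = field(repr=False)  # keep the secret out of repr() and logs

    def __post_init__(self) -> None:
        parsed = urlparse(self.uri)
        if parsed.scheme != "https" or not parsed.hostname:
            raise ValueError(f"uri must be an https workspace URL, got {self.uri!r}")
        for name in ("catalog_name", "client_id", "client_secret"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")

    def as_config(self) -> dict[str, str]:
        """Return the values under the key names used in this guide."""
        return {
            "unityCatalogUri": self.uri.rstrip("/"),
            "unityCatalogName": self.catalog_name,
            "unityCatalogClientId": self.client_id,
            "unityCatalogClientSecret": self.client_secret,
        }


if __name__ == "__main__":
    # Placeholder values (assumptions), for illustration only.
    settings = UnityCatalogSettings(
        uri="https://adb-1234567890123456.7.azuredatabricks.net",
        catalog_name="ursa_catalog",
        client_id="<client-id>",
        client_secret="<client-secret>",
    )
    print(settings)  # the secret is omitted from the printed representation
```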