This guide describes how to prepare a Databricks Unity Catalog for use with StreamNative Ursa as a managed Iceberg table catalog on AWS.

Prerequisites

- A Databricks account with Unity Catalog and Iceberg Managed Table enabled

- An AWS account with permissions to create S3 buckets and IAM roles

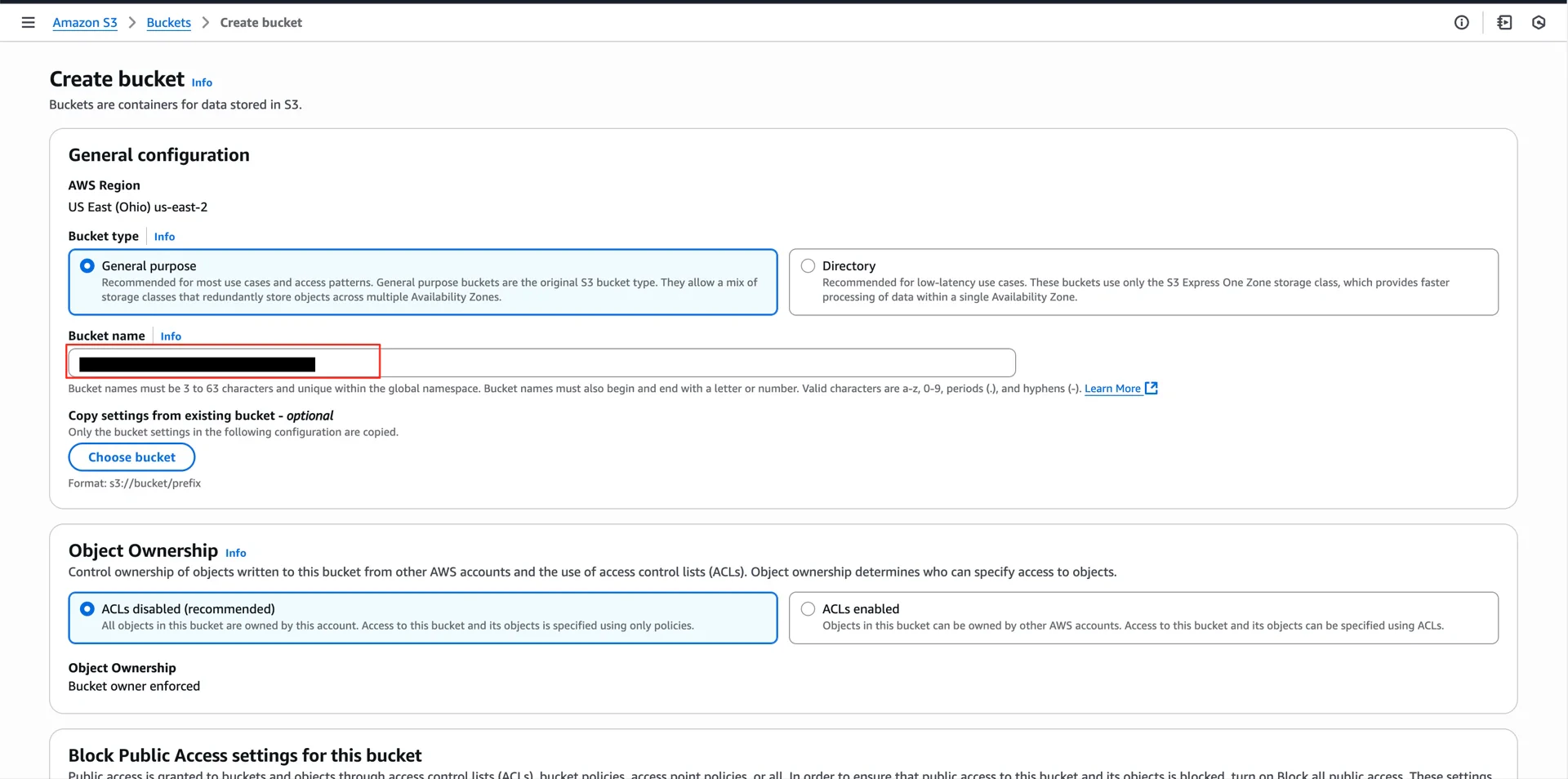

1. Create an S3 Bucket

In your AWS account, create an S3 bucket to use as the Unity Catalog storage location (for example, aws-unitycatalog-iceberg-bucket).

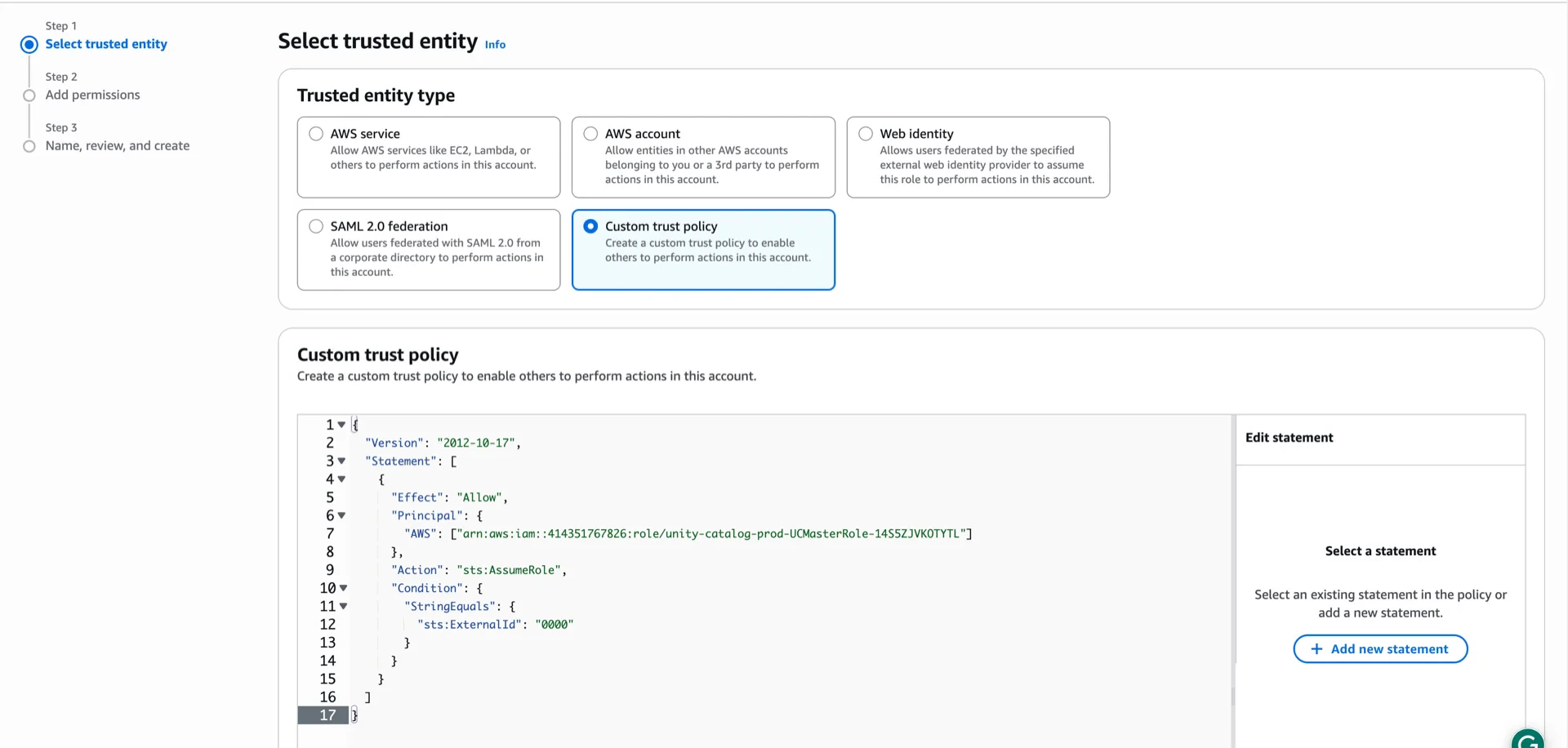

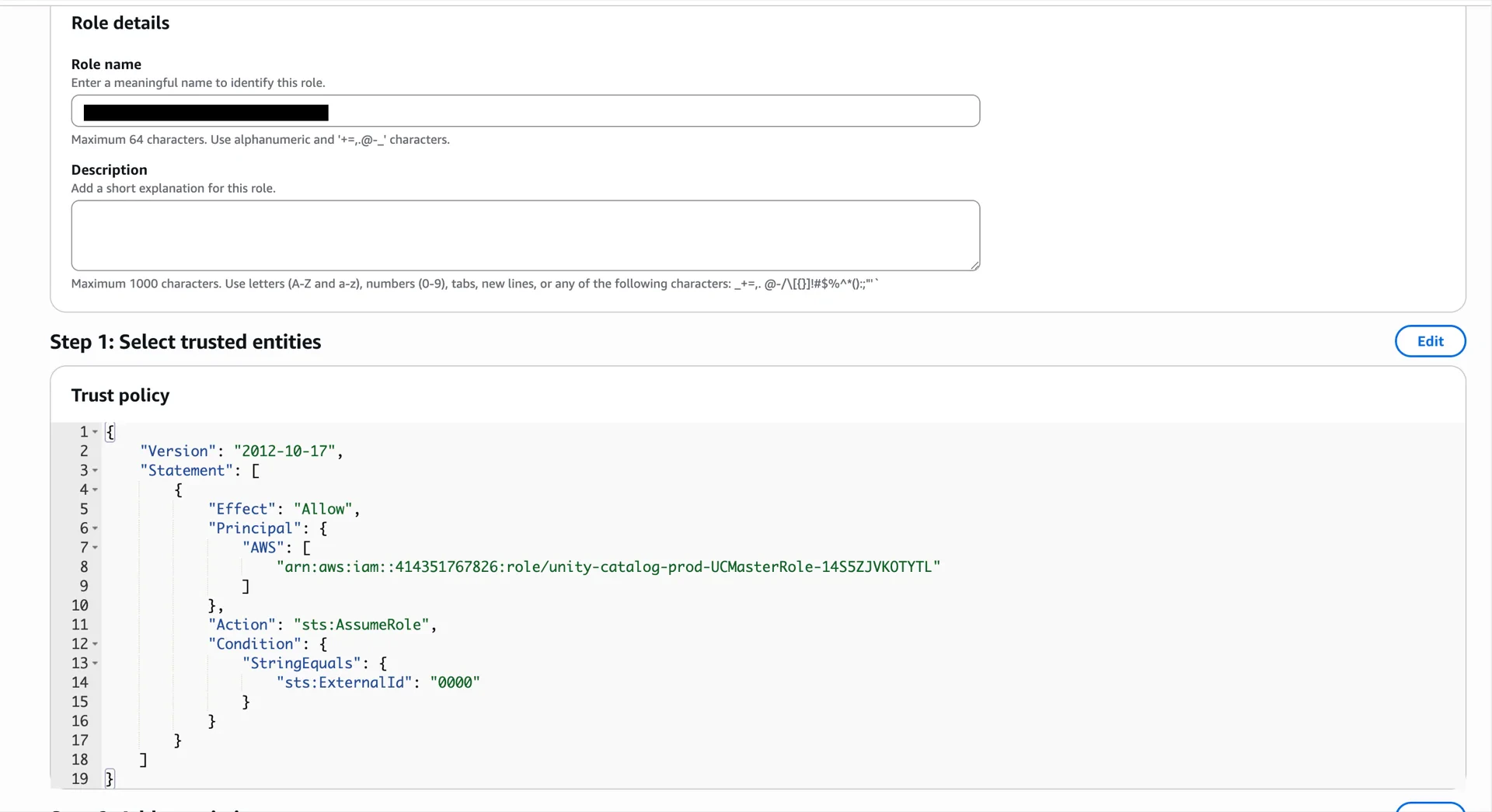

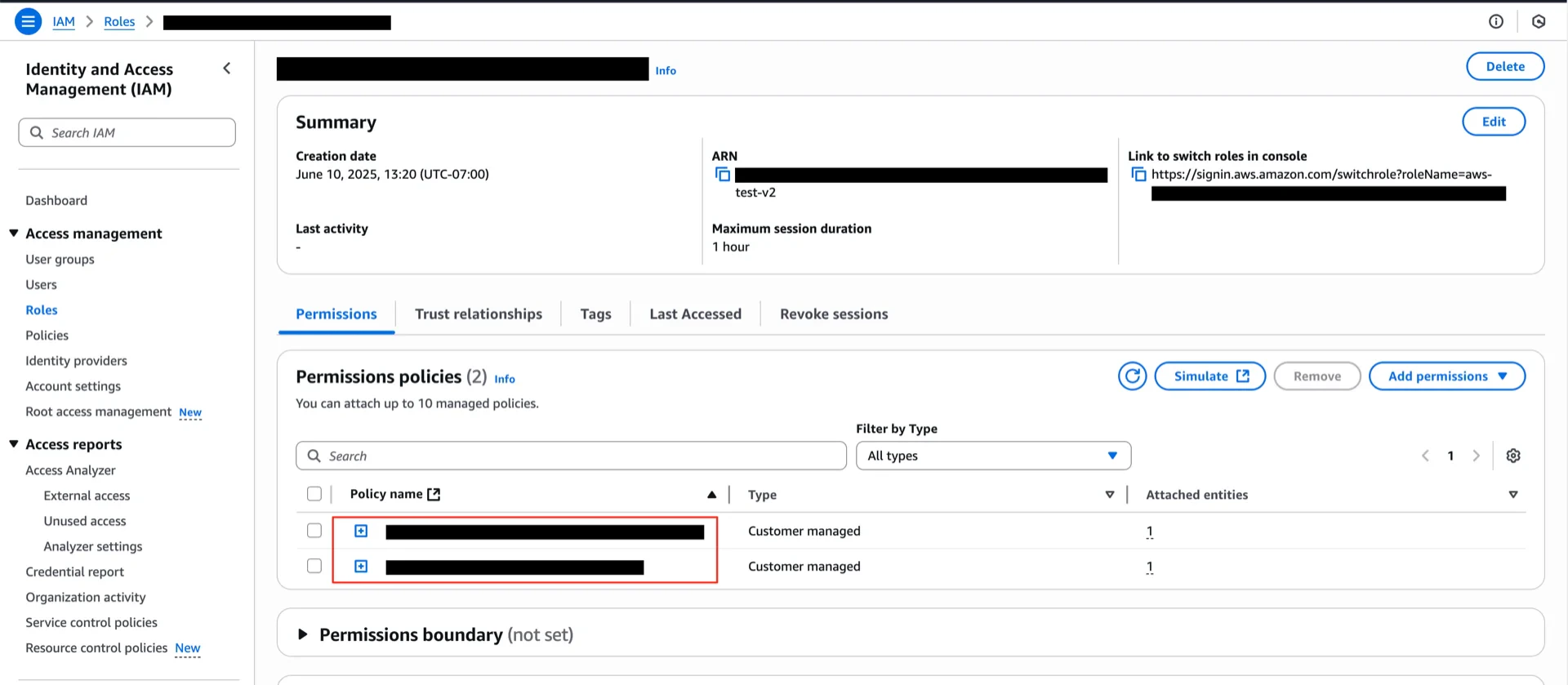

2. Create the IAM Role

2.1 Create the Role with a Placeholder Trust Policy

Create an IAM role that allows the Unity Catalog master role to assume it. Use the following trust policy with a placeholder External ID of 0000 (you will replace it with the value generated by Databricks in step 3):

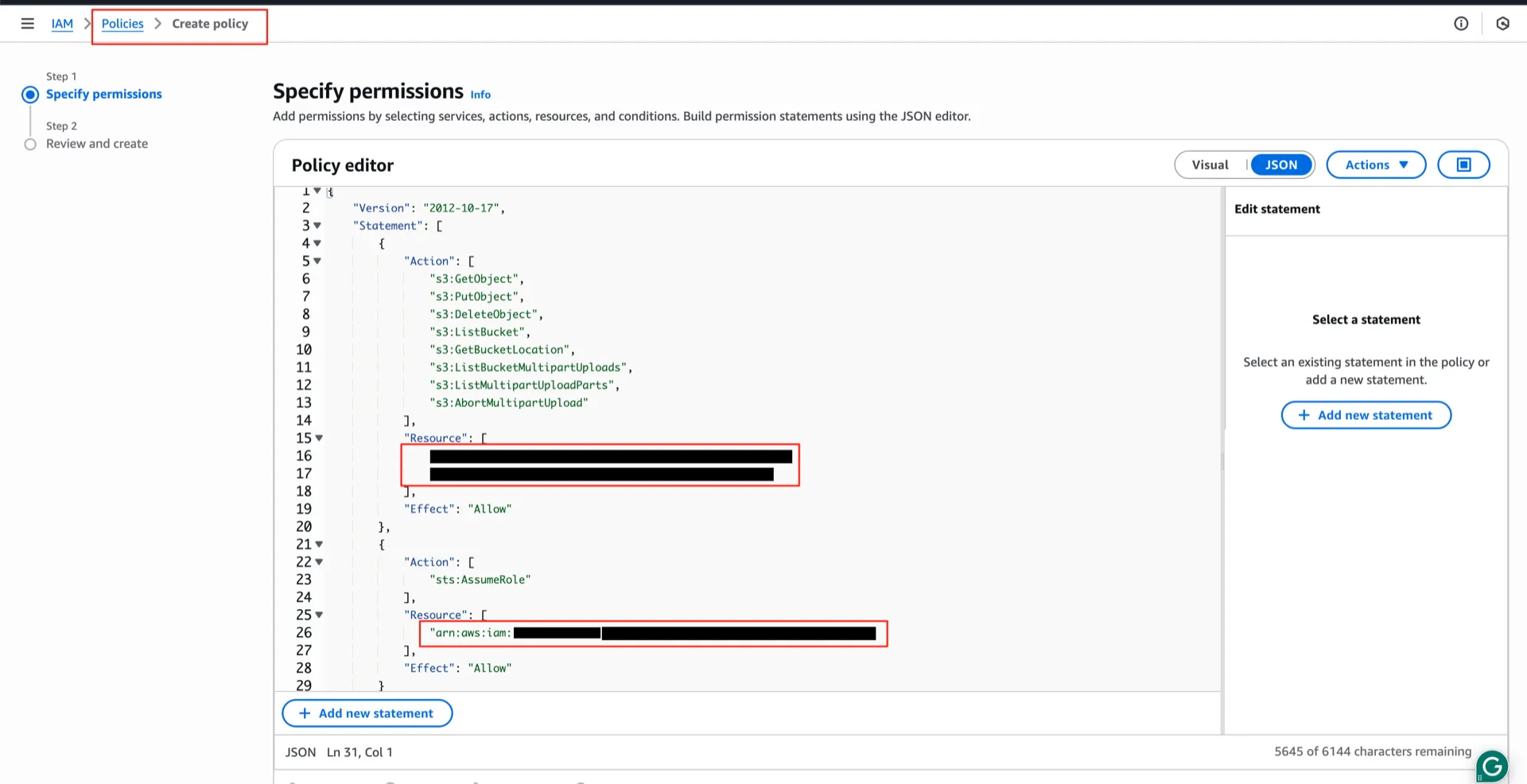
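
The placeholder trust policy can also be generated as JSON rather than typed by hand. The following is a minimal sketch, not the exact policy from this guide: the helper name `build_trust_policy` and the `MASTER_ROLE_ARN` placeholder are hypothetical, and the statement layout assumes the standard IAM `sts:AssumeRole` pattern with an `sts:ExternalId` condition. Substitute the Unity Catalog master role ARN that Databricks documents for your deployment.

```python
import json


def build_trust_policy(master_role_arn: str, external_id: str = "0000") -> dict:
    """Return an IAM trust policy that lets `master_role_arn` assume the role.

    The external ID defaults to the placeholder 0000 used in step 2.1.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": [master_role_arn]},
                "Action": "sts:AssumeRole",
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


# Hypothetical placeholder: replace with the real Unity Catalog master role ARN.
MASTER_ROLE_ARN = "<unity-catalog-master-role-arn>"

placeholder_policy = build_trust_policy(MASTER_ROLE_ARN)
print(json.dumps(placeholder_policy, indent=2))
```

The printed JSON can be pasted into the trust policy editor in the IAM console. In step 4 the whole document is replaced with the policy that Databricks generates, so this output only needs to be valid enough to create the role.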

2.2 Attach the S3 Access Policy

Create the following policy and attach it to the role. Replace <your-bucket> and <your-account-id> with your values.

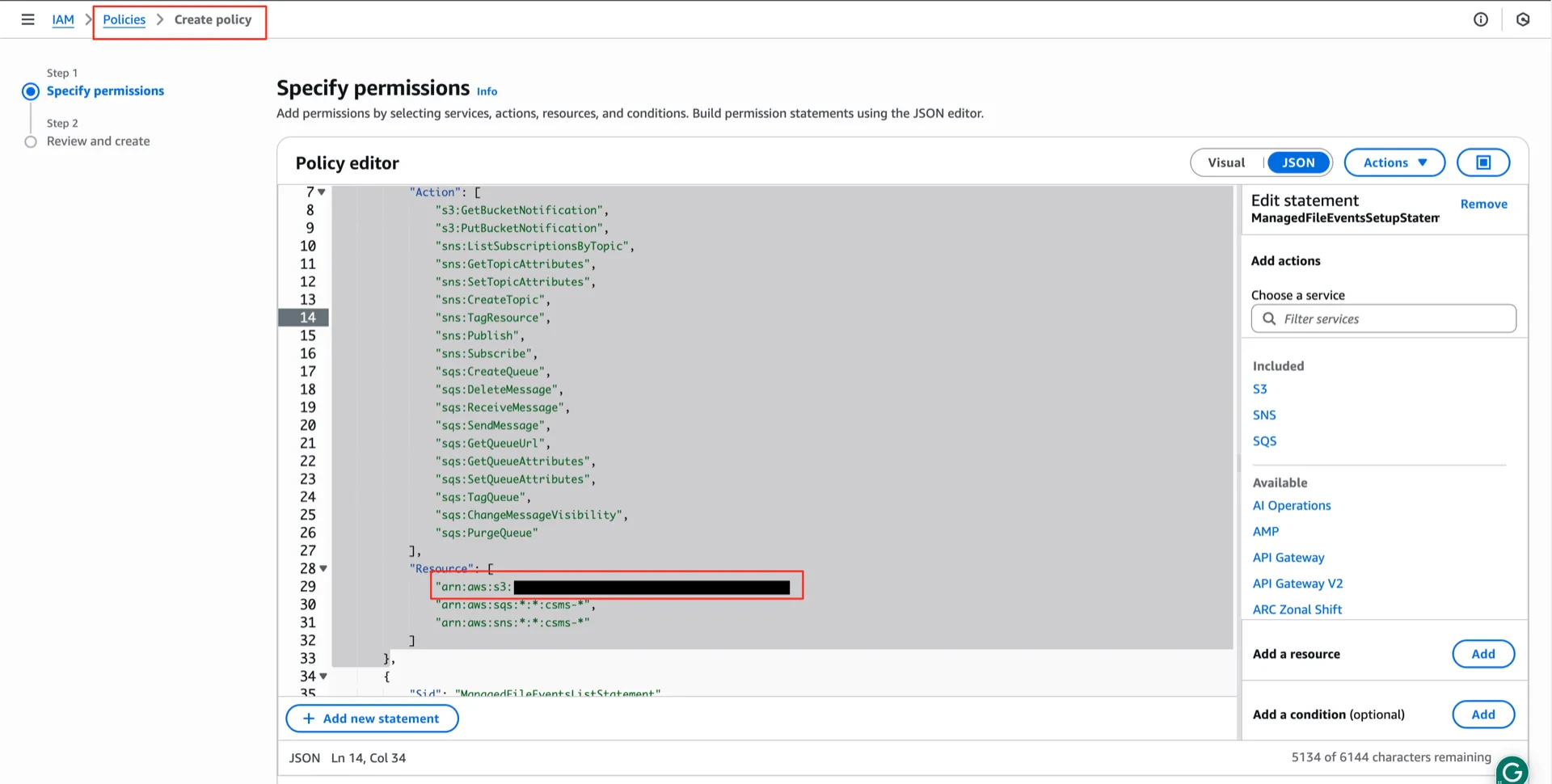
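
As an illustration of how the two placeholders fit into such a policy, here is a hedged sketch that builds an S3 access policy document. The action list, the self-assume statement, and the `role_name` parameter are assumptions based on common Unity Catalog storage setups, not a copy of the policy in this guide; compare the output with the policy shown above before using it.

```python
import json


def build_s3_access_policy(bucket: str, account_id: str, role_name: str) -> dict:
    """Return an IAM policy granting read/write access to one S3 bucket.

    Assumed layout: one statement for the bucket and its objects, and one
    statement that lets the role assume itself.
    """
    bucket_arn = f"arn:aws:s3:::{bucket}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "s3:GetObject",
                    "s3:PutObject",
                    "s3:DeleteObject",
                    "s3:ListBucket",
                    "s3:GetBucketLocation",
                ],
                # Bucket-level actions need the bucket ARN; object-level
                # actions need the "/*" form.
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
            },
            {
                "Effect": "Allow",
                "Action": ["sts:AssumeRole"],
                "Resource": [f"arn:aws:iam::{account_id}:role/{role_name}"],
            },
        ],
    }


# Hypothetical values standing in for <your-bucket>, <your-account-id>,
# and the name of the role created in step 2.1.
s3_policy = build_s3_access_policy(
    bucket="aws-unitycatalog-iceberg-bucket",
    account_id="123456789012",
    role_name="unity-catalog-iceberg-role",
)
print(json.dumps(s3_policy, indent=2))
```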

2.3 Attach the File Events Policy

Create a second policy for managed file events (S3 notifications, SNS, SQS) and attach it to the same role:

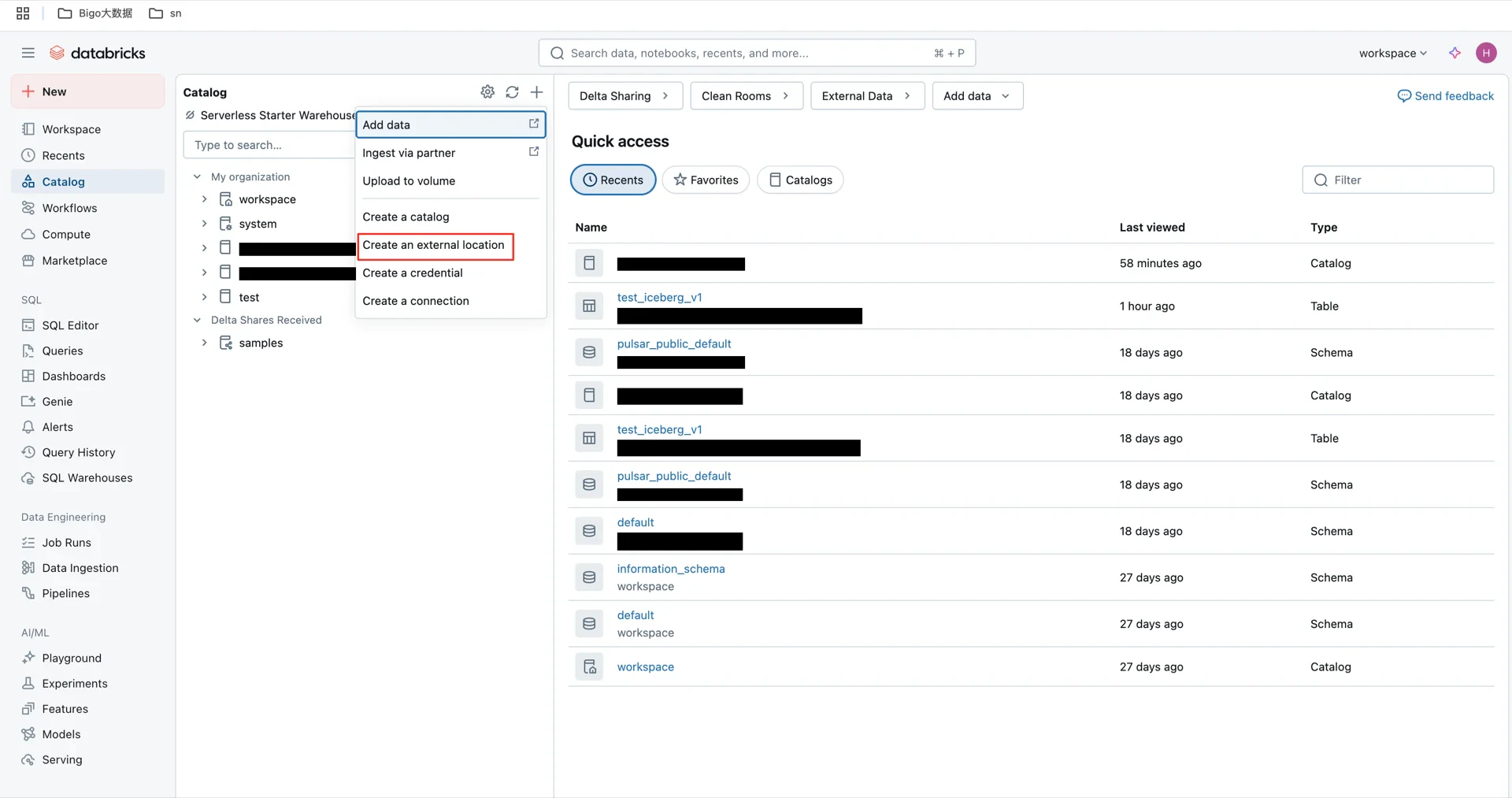

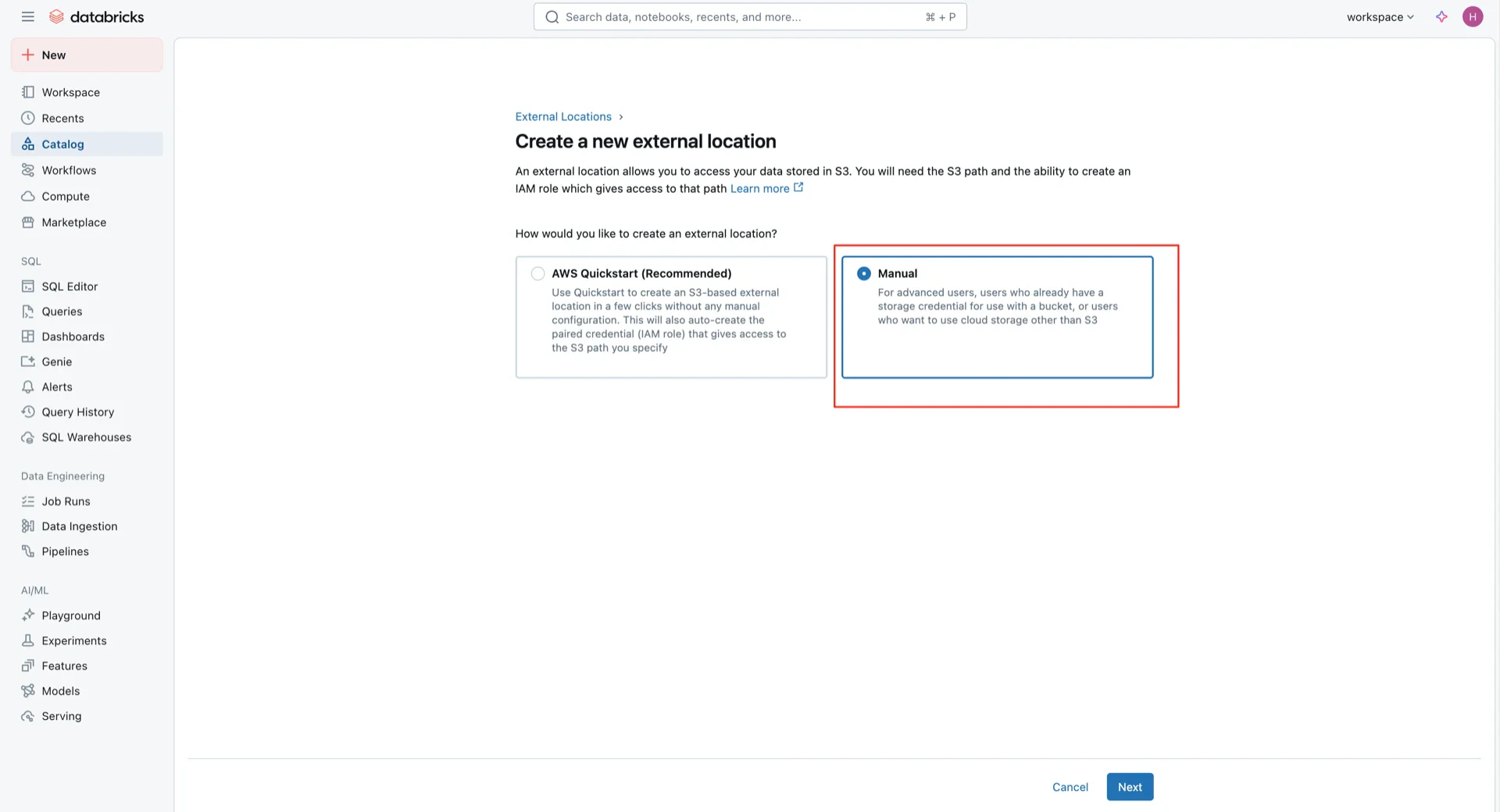

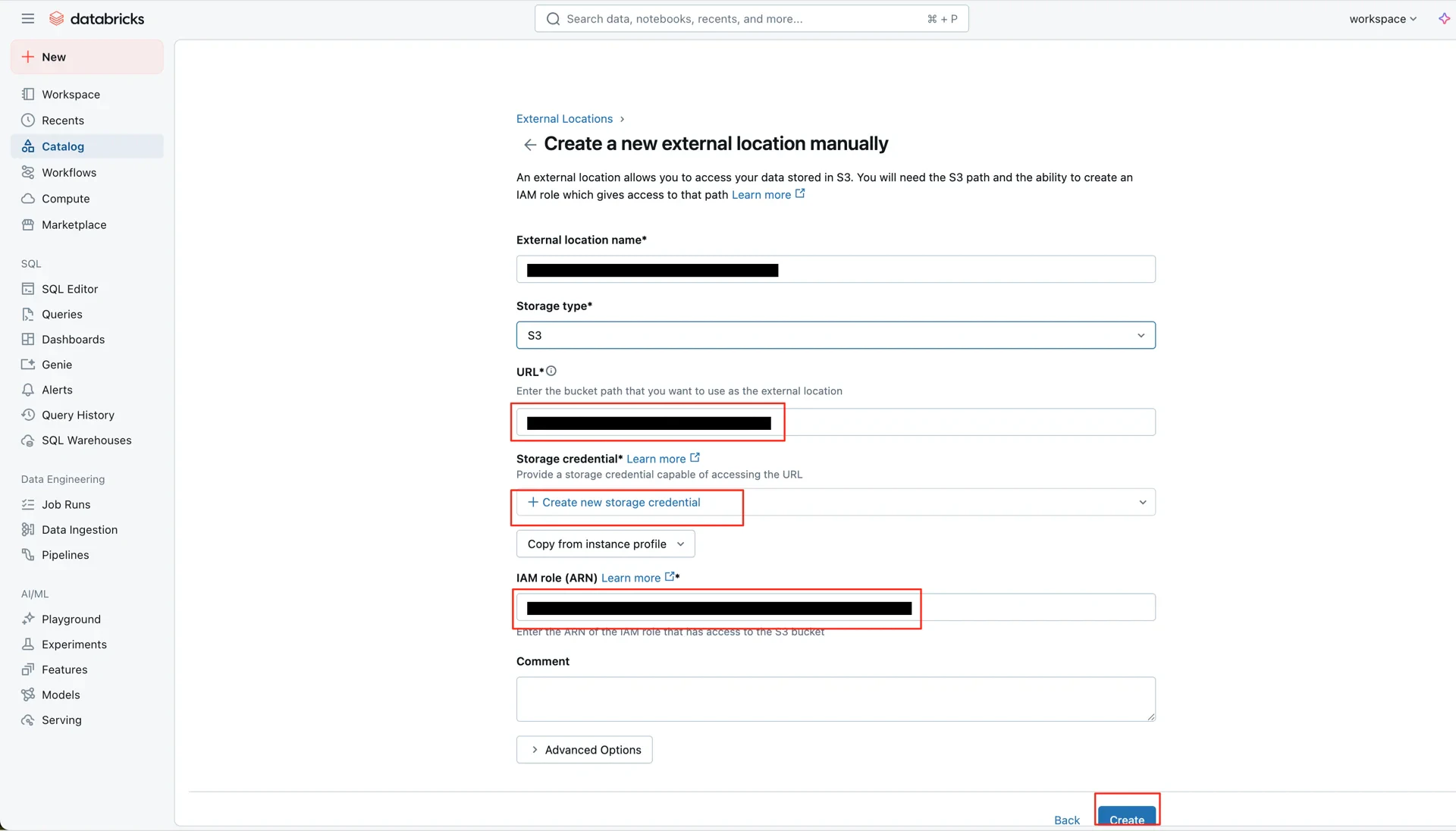

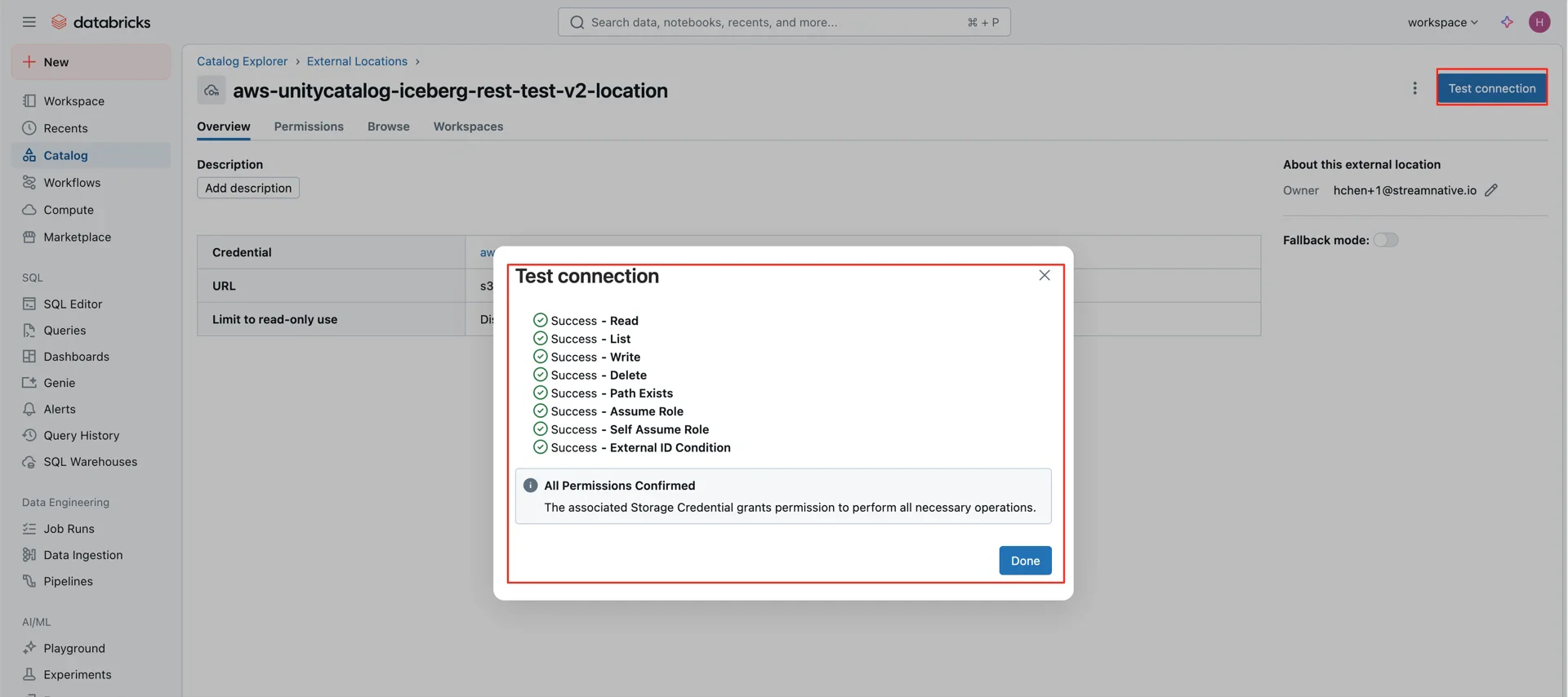

3. Create an External Location in Unity Catalog

In the Databricks Catalog console, create a new external location pointing to the S3 bucket and the IAM role created above.

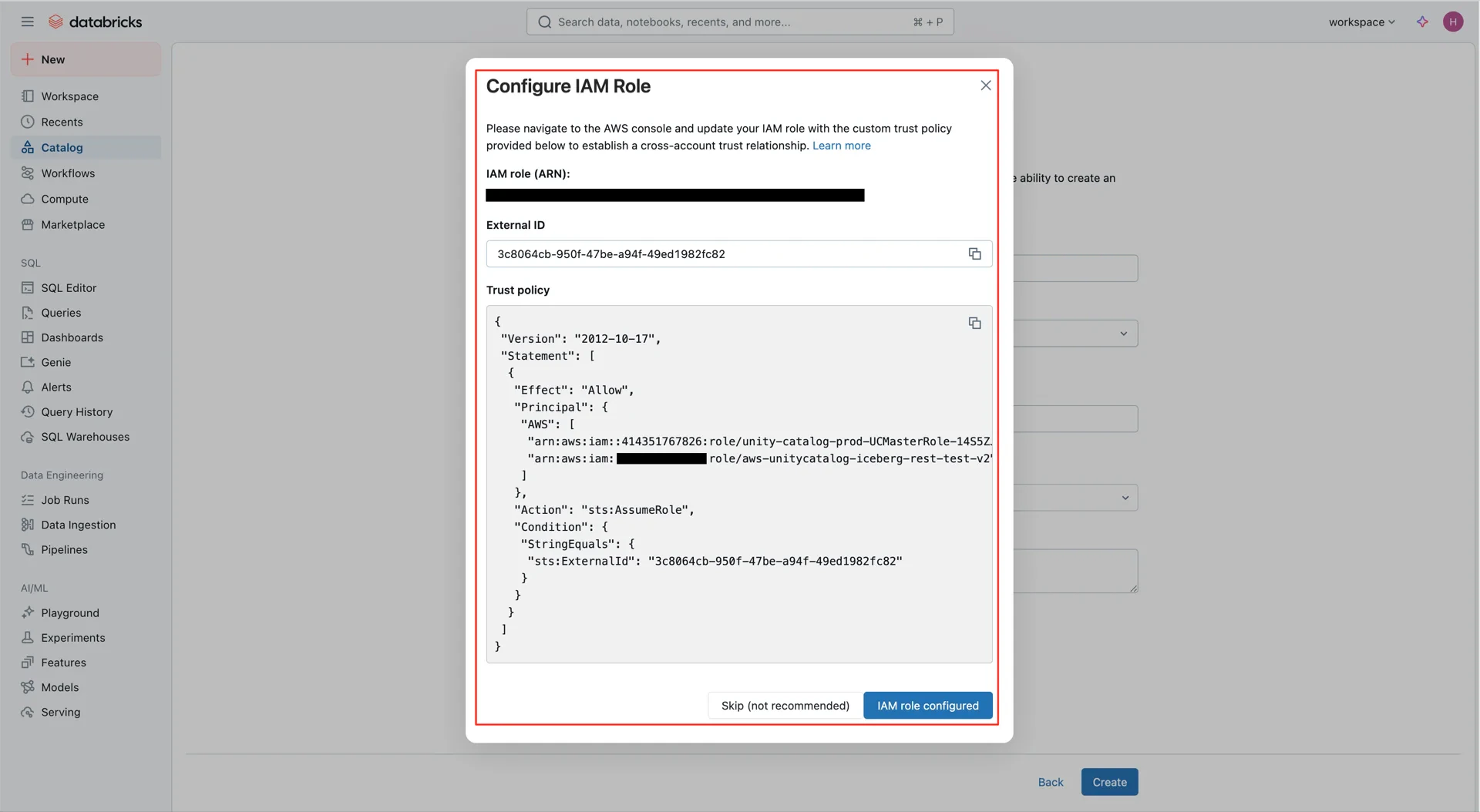

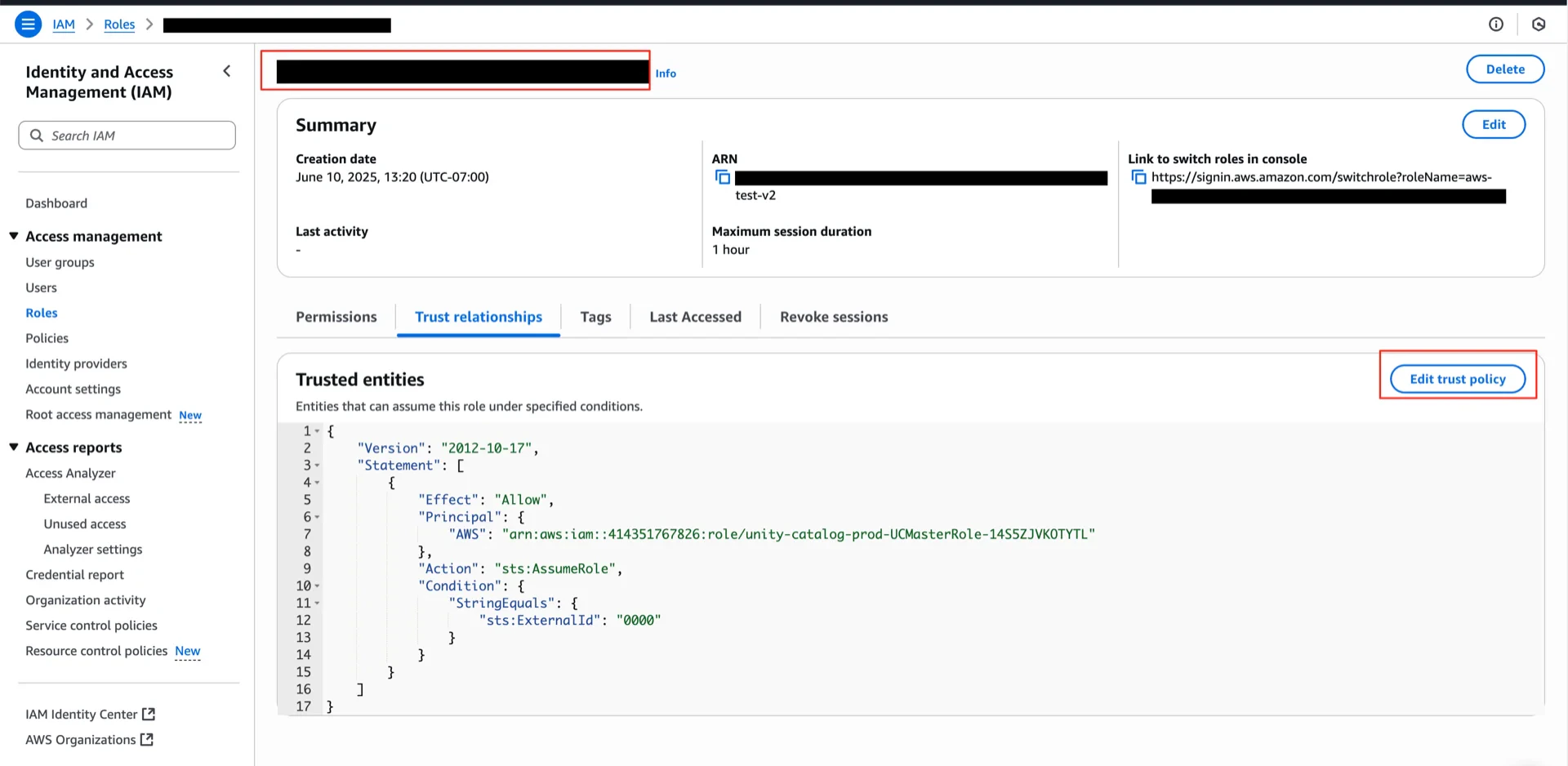

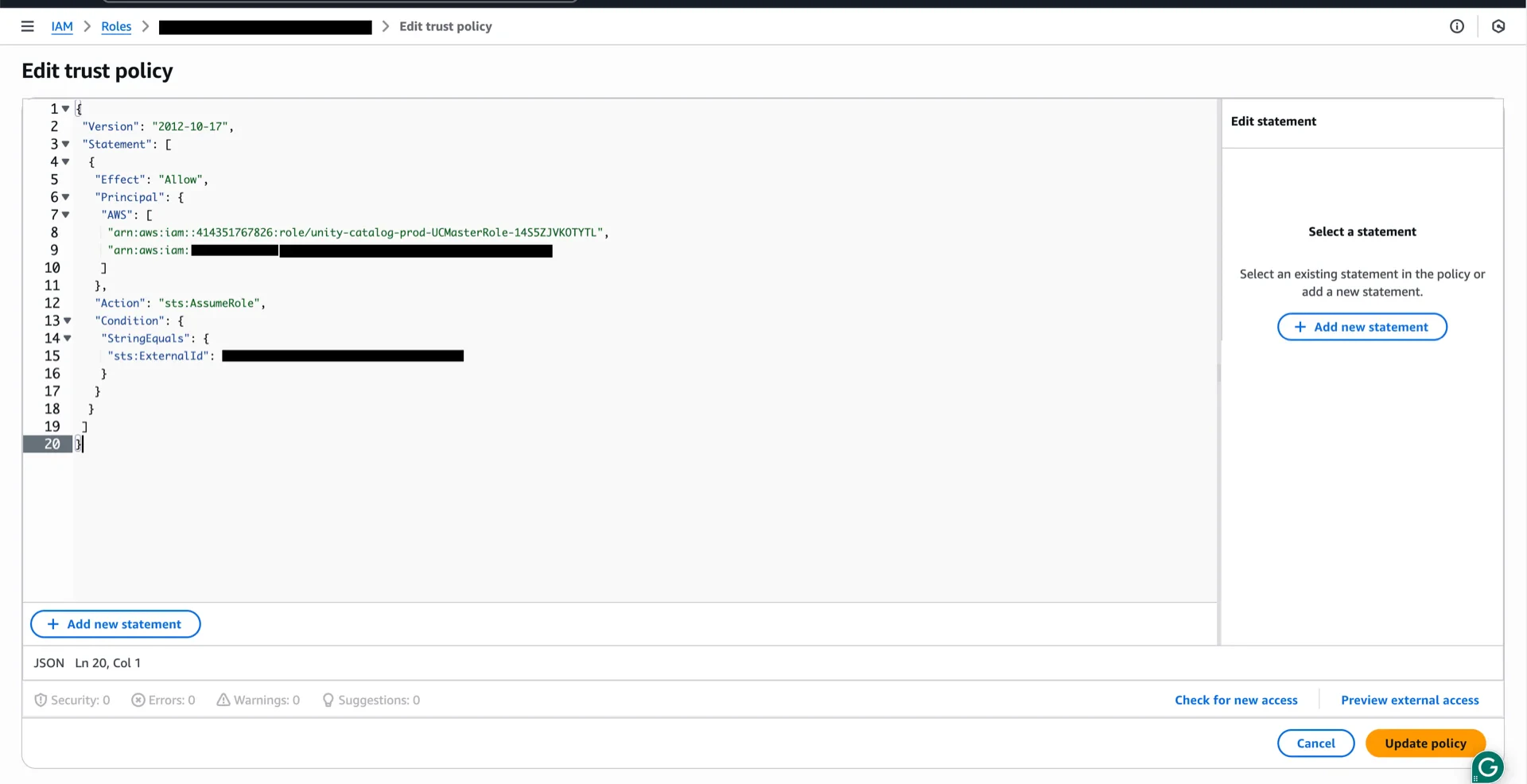

4. Update the IAM Role Trust Policy

Return to the AWS IAM console and replace the role’s trust policy with the one generated by Databricks in step 3, using the new External ID.

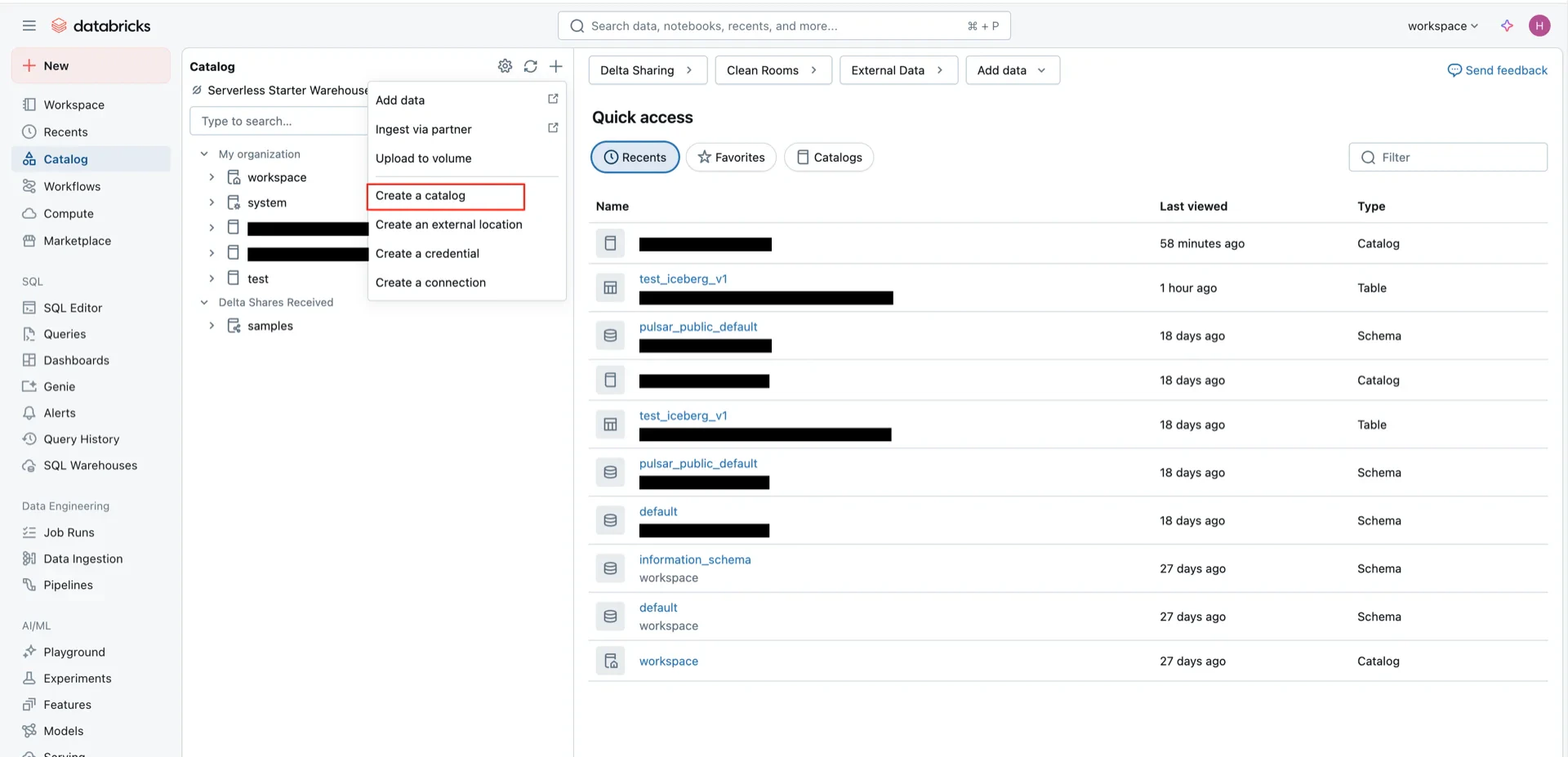

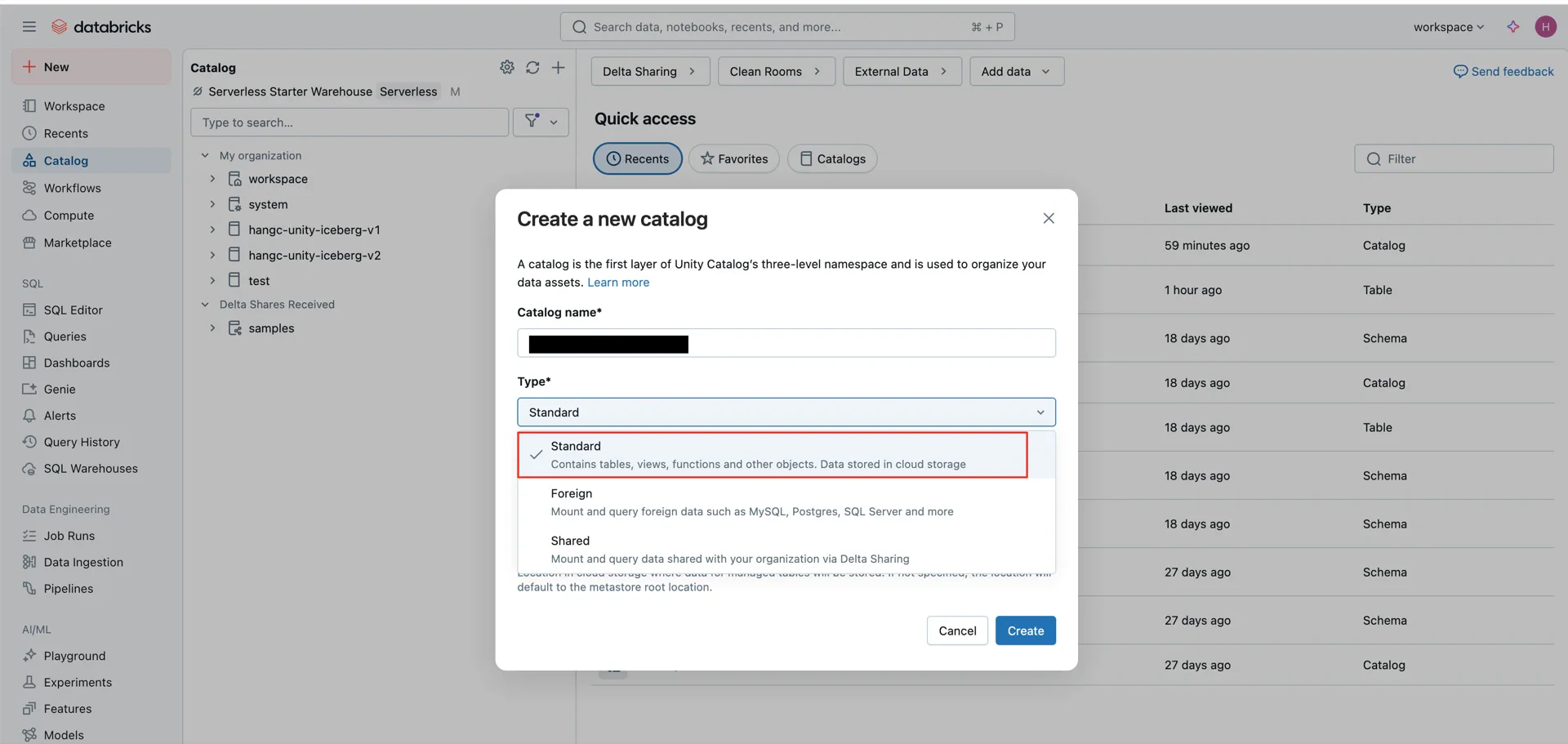

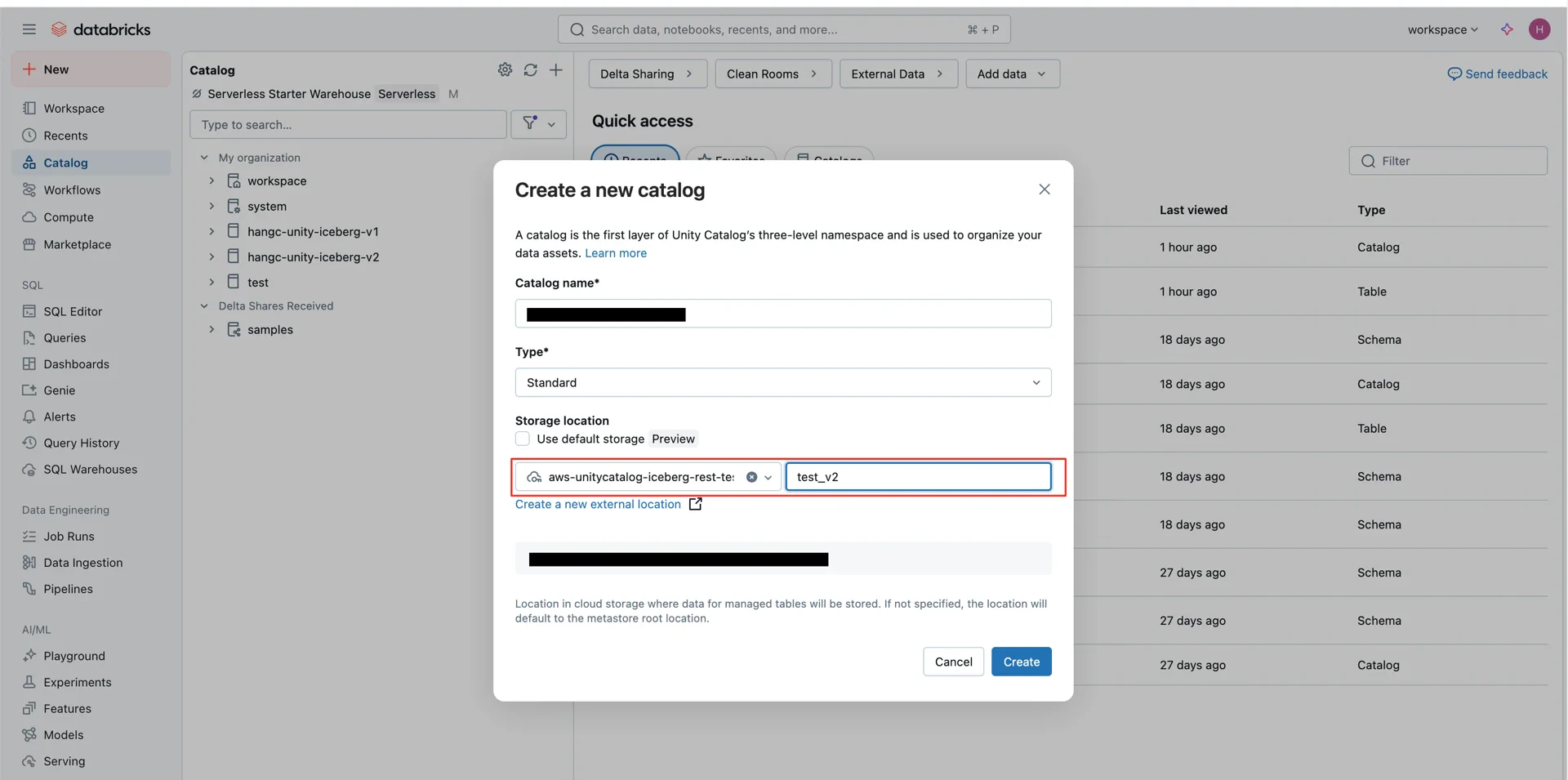

5. Create the Unity Catalog

Create a new catalog in Databricks bound to the external location created in step 3:

- Type: Standard

- Storage location: the external location created above

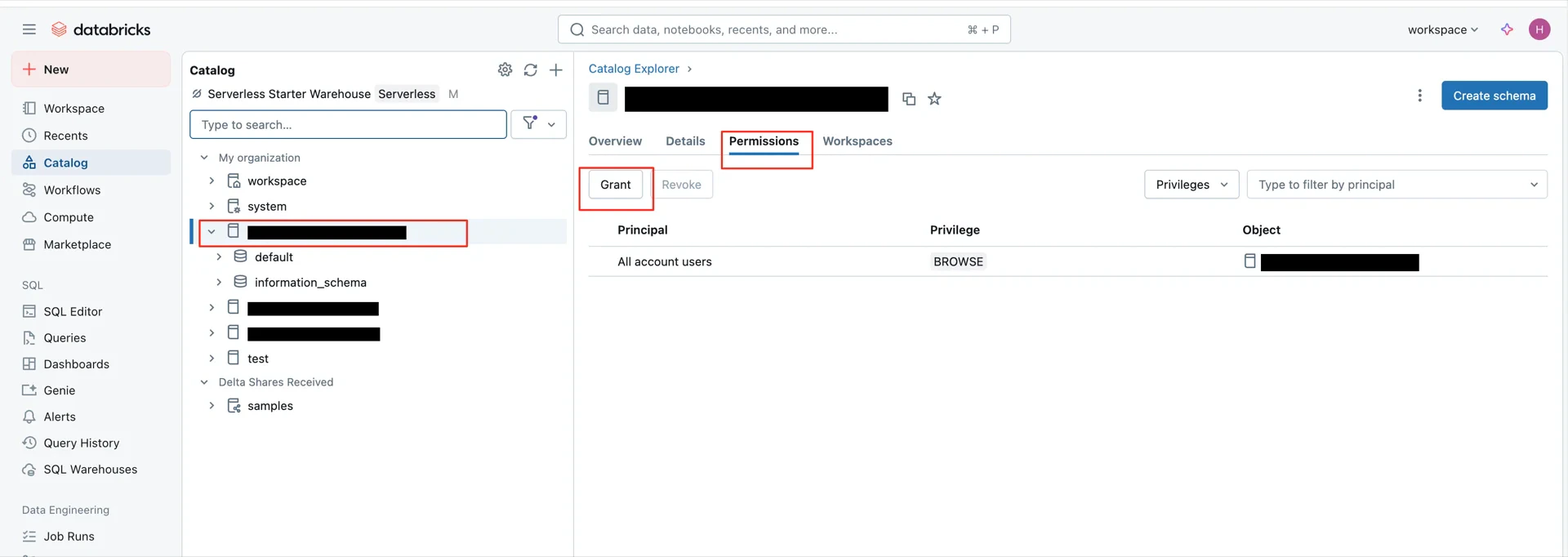

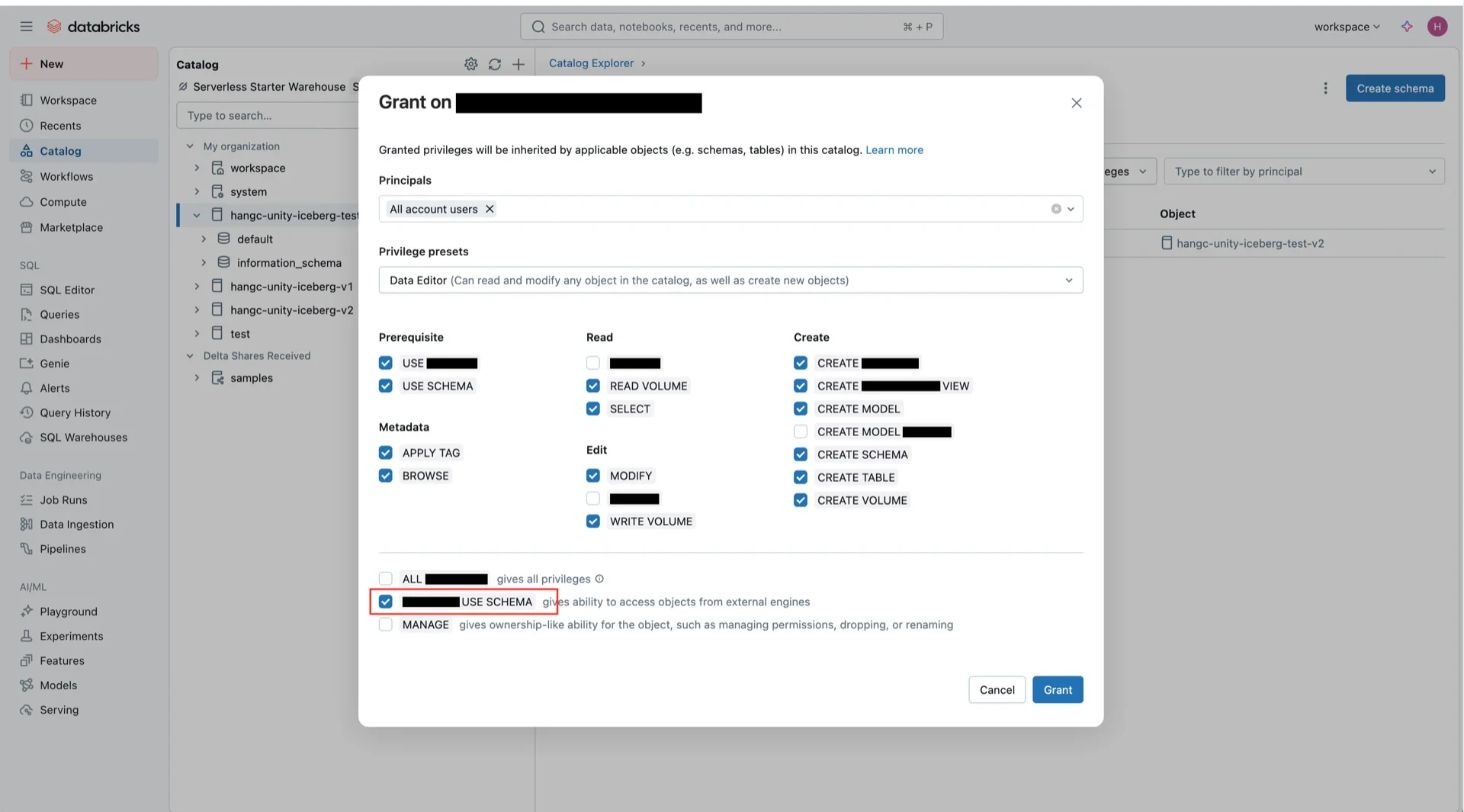

6. Grant Catalog Permissions

Grant permissions on the catalog. The EXTERNAL_USE_SCHEMA permission is required for Iceberg Managed Tables in Unity Catalog.

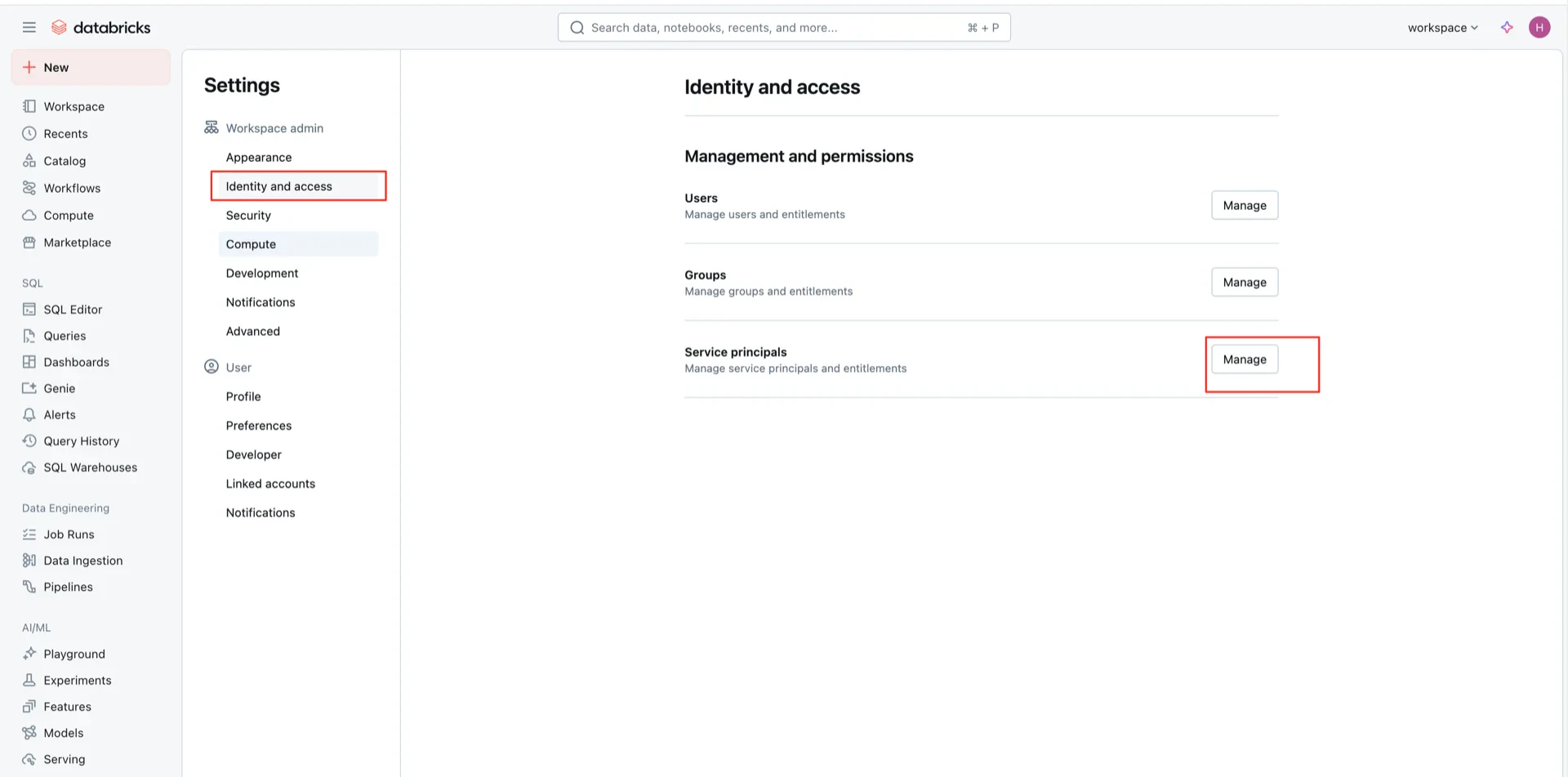

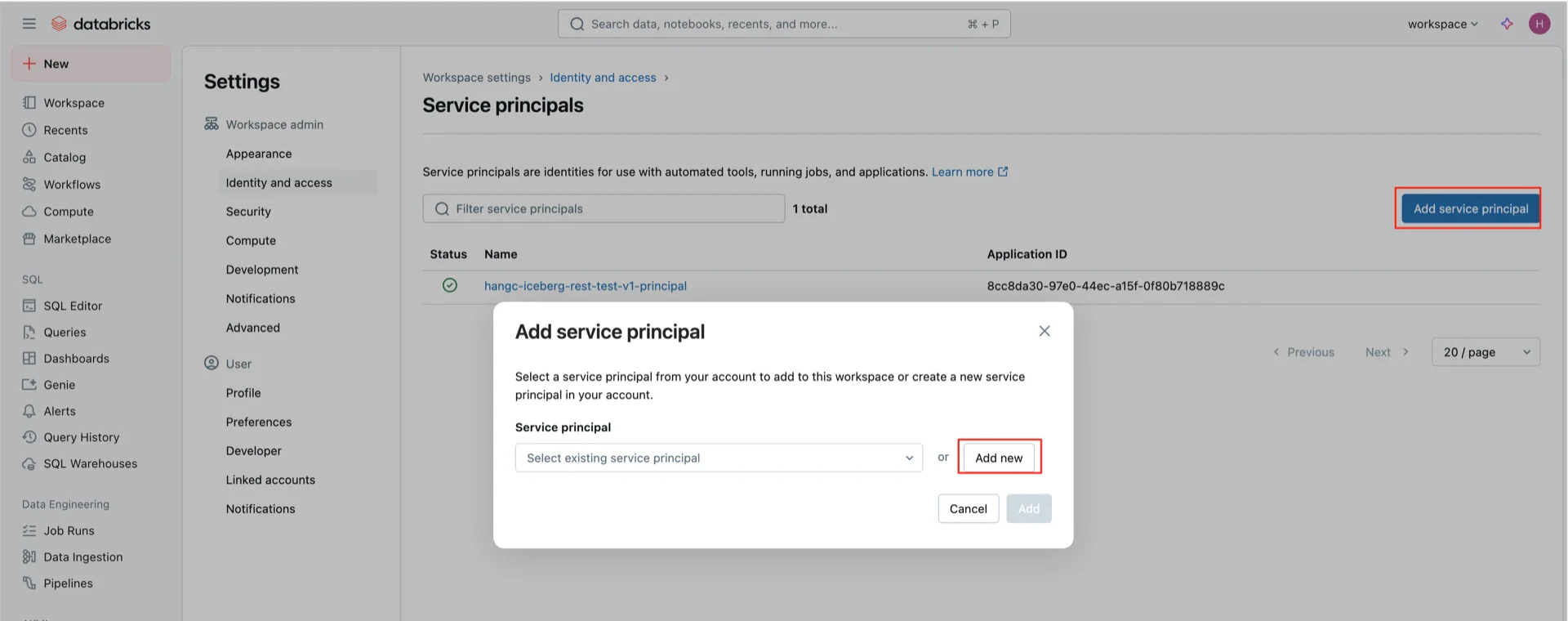

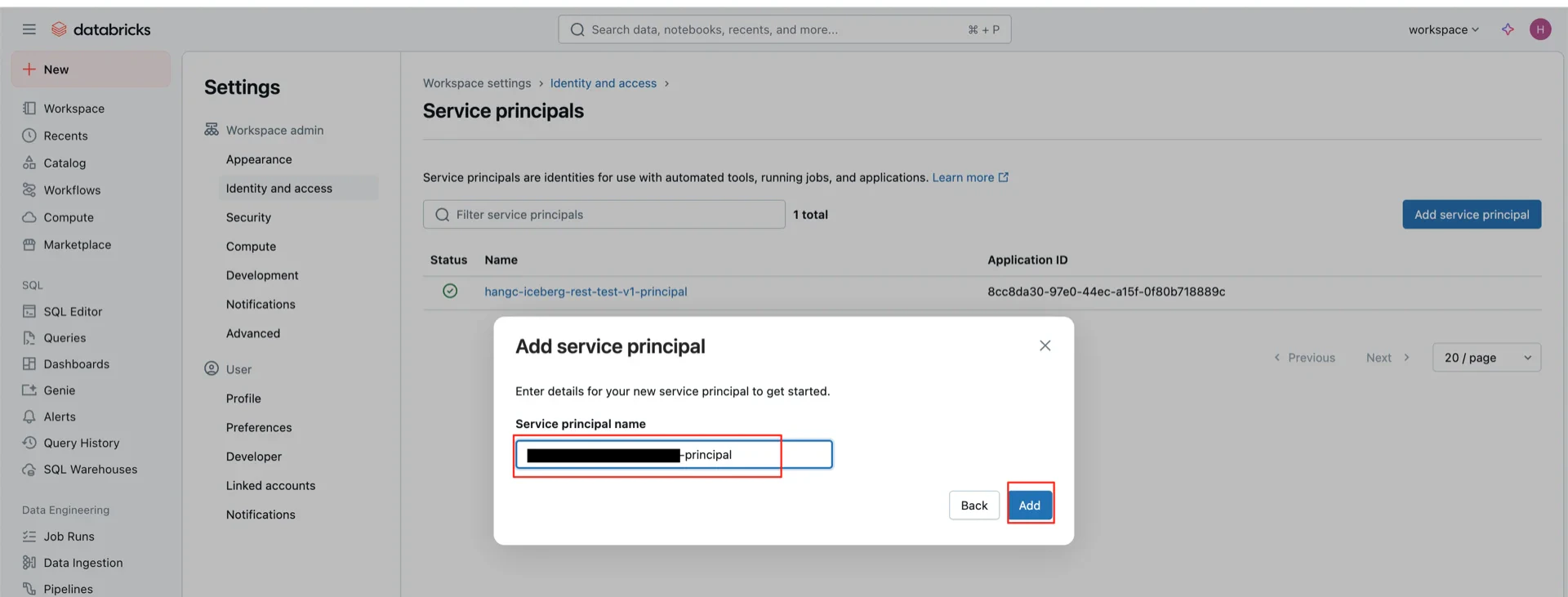

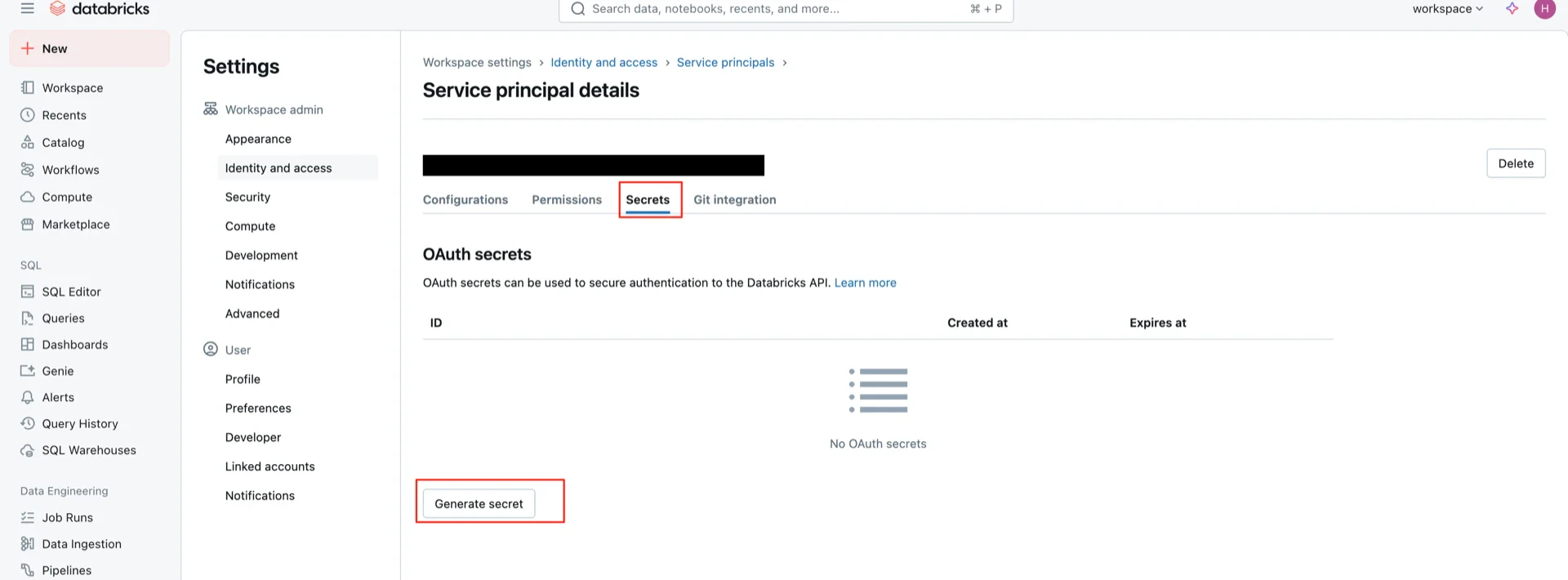

7. Create OAuth2 Credentials

Create an OAuth2 service principal that StreamNative Ursa will use to authenticate against Unity Catalog.

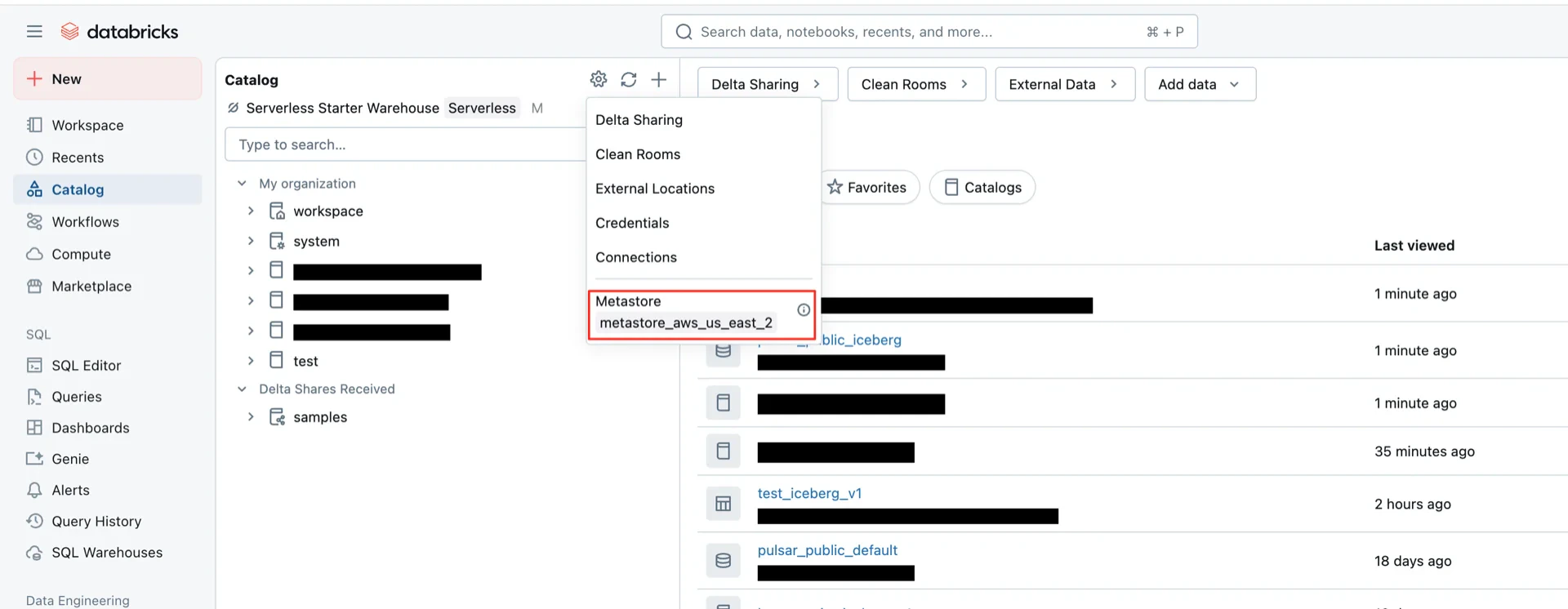

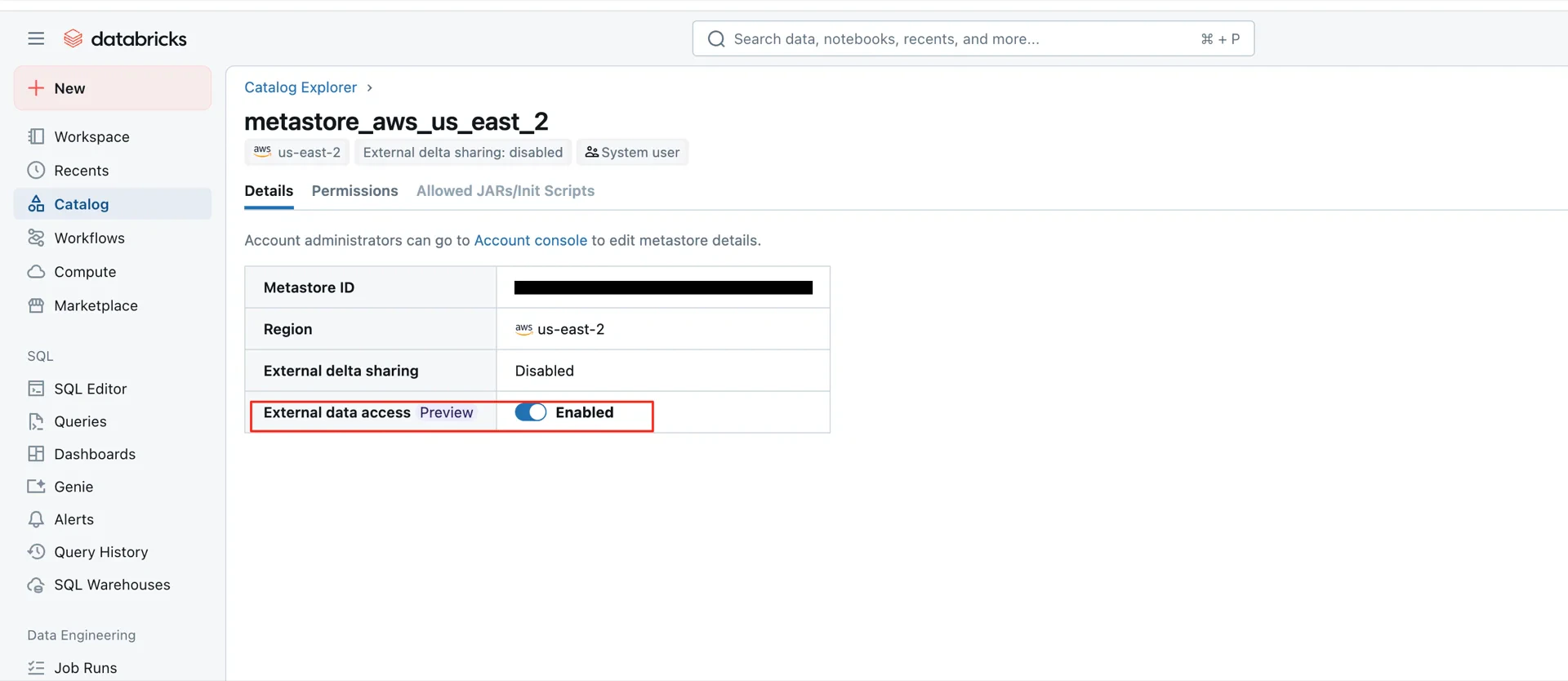

8. Enable External Data Access on the Metastore

This step is required for Unity Catalog Iceberg Managed Tables. Enable External data access on the metastore in Databricks.

Catalog Information Summary

When the steps above are complete, collect the following values for the StreamNative Ursa compaction service:

| Value | Description |
|---|---|
| URI | Databricks workspace URL (e.g., https://dbc-xxxx.cloud.databricks.com/api/2.1/unity-catalog/iceberg-rest) |
| Warehouse | The Unity Catalog name created in step 5 |
| Credential | <client-id>:<client-secret> from step 7 |
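
To show how the three values relate to each other, the sketch below assembles them into a single dictionary. The function name `build_catalog_properties` and the sample catalog name are hypothetical, and the keys `uri`, `warehouse`, and `credential` follow the usual Iceberg REST catalog property names; how StreamNative Ursa actually consumes these values may differ.

```python
from urllib.parse import urlparse

# Path of the Unity Catalog Iceberg REST endpoint, taken from the URI example.
ICEBERG_REST_PATH = "/api/2.1/unity-catalog/iceberg-rest"


def build_catalog_properties(
    workspace_url: str, catalog_name: str, client_id: str, client_secret: str
) -> dict:
    """Combine the values from steps 5 and 7 into catalog properties."""
    host = workspace_url.rstrip("/")
    if urlparse(host).scheme != "https":
        raise ValueError("workspace_url must start with https://")
    return {
        "uri": f"{host}{ICEBERG_REST_PATH}",
        "warehouse": catalog_name,
        "credential": f"{client_id}:{client_secret}",
    }


# Hypothetical values: use your own workspace URL, catalog name, and
# the OAuth2 client ID and secret created in step 7.
props = build_catalog_properties(
    workspace_url="https://dbc-xxxx.cloud.databricks.com/",
    catalog_name="my_iceberg_catalog",
    client_id="<client-id>",
    client_secret="<client-secret>",
)
for key, value in props.items():
    print(f"{key} = {value}")
```

Treat the credential as a secret: store it in a secret manager rather than in source control or logs.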